Imagine spending hours writing a complex function, only for it to crash the moment you run it because of one tiny logical slip. For humans, a quick test run solves this. For AI, the challenge is much bigger: how do we actually know if a Large Language Model (LLM) can code, or if it's just really good at guessing what the next character should look like? This is where HumanEval comes in. HumanEval is a benchmark dataset developed by OpenAI to assess the code generation capabilities of LLMs through functional correctness. Instead of checking if the AI's code "looks" right, it actually runs the code to see if it works.

Why Text Similarity Isn't Enough for Code

In the early days of AI evaluation, researchers used metrics like BLEU or ROUGE. These work well for scoring a translation from English to French because they check how closely the output words match the reference words. But code is different. A single misplaced comma or a changed variable name might leave the code looking 99% similar to the correct answer, yet it will fail completely when executed. In fact, some studies show that text similarity correlates poorly with whether the code actually works, often showing a correlation as low as 0.12 across various models.
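To make the point concrete, here is a minimal sketch. It uses Python's standard `difflib.SequenceMatcher` as a stand-in for a text-similarity score (it is not BLEU or ROUGE), and the `add` function and the `passes` helper are made up for illustration. The two snippets differ by a single character, so they score as nearly identical, yet only one of them works.

```python
import difflib

# Reference solution and a candidate that differs by one character.
reference = "def add(a, b):\n    return a + b\n"
candidate = "def add(a, b):\n    return a - b\n"

# Character-level similarity in [0, 1]; a stand-in for a text metric.
similarity = difflib.SequenceMatcher(None, reference, candidate).ratio()


def passes(source: str) -> bool:
    """Run the source and check one behavioral expectation (illustrative)."""
    namespace = {}
    exec(source, namespace)  # only safe here because we wrote the code ourselves
    return namespace["add"](2, 3) == 5


print(f"similarity: {similarity:.2f}")
print("reference passes:", passes(reference))
print("candidate passes:", passes(candidate))
```

The similarity score stays high while the behavioral check separates the two snippets cleanly, which is the gap execution-based evaluation is meant to close.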

The breakthrough with HumanEval was the shift to execution-based evaluation. Every problem in the set comes with a function signature, a docstring explaining the task, and a suite of unit tests. To get a point, the model's code must pass every single test. This mirrors exactly how a developer works: you write the code, run the tests, and if it fails, you fix it. If the AI can't pass the tests, it hasn't solved the problem, no matter how "clean" the code looks.
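The loop described above can be sketched in a few lines. This is a simplified illustration, not the official harness: it assumes the tests are supplied as source code defining a `check(candidate)` function and that the function under test is looked up by an `entry_point` name. The `is_even` task and the `check_candidate` helper are hypothetical. A real harness would also sandbox execution and enforce timeouts, since running model-generated code directly is unsafe.

```python
def check_candidate(candidate_source: str, test_source: str, entry_point: str) -> bool:
    """Return True only if the candidate passes every assertion in the tests."""
    namespace = {}
    try:
        exec(candidate_source, namespace)          # define the candidate function
        exec(test_source, namespace)               # define check(candidate)
        namespace["check"](namespace[entry_point])  # run all unit tests
    except Exception:
        # Syntax errors, runtime errors, and failed assertions all count as a fail.
        return False
    return True


GOOD = '''
def is_even(n: int) -> bool:
    """Return True if n is even."""
    return n % 2 == 0
'''

BUGGY = '''
def is_even(n: int) -> bool:
    """Return True if n is even."""
    return n % 2 == 1
'''

TESTS = '''
def check(candidate):
    assert candidate(0) is True
    assert candidate(7) is False
    assert candidate(-4) is True
'''

print(check_candidate(GOOD, TESTS, "is_even"))
print(check_candidate(BUGGY, TESTS, "is_even"))
```

Note the all-or-nothing scoring: one failed assertion is enough to mark the whole problem as unsolved.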

The Mechanics of the pass@k Metric

If you look at any AI leaderboard today, you'll see a metric called pass@k. But what does that actually mean? In simple terms, it's the probability that at least one of k samples generated by the model for a problem passes all the tests. If you ask an LLM for one answer and it's right, that counts toward pass@1. If you ask it for 100 versions of the same function and at least one works, that counts toward pass@100.

Researchers typically track pass@1 to see how reliable a model is on the first try. For example, early versions of Codex had a pass@1 score around 28.8%, while newer iterations like GPT-4 Turbo have pushed that number toward 89.2%. However, a high pass@100 score but a low pass@1 score suggests a model that is "lucky" but not consistently competent. It can find the right answer if given enough tries, but it lacks the precision to get it right the first time.
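In practice, pass@k is usually estimated rather than measured by literally drawing k samples once. A common approach, described in the paper that introduced HumanEval, is to generate n samples per problem (with n at least k), count the c samples that pass, and compute 1 - C(n-c, k) / C(n, k). The sketch below implements that formula for a single problem; the function name and the input validation are illustrative choices, and a benchmark score would be the average of this value over all problems.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem.

    n: total samples generated, c: samples that passed all tests,
    k: number of samples the metric allows.
    """
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    if n - c < k:
        # Fewer than k failing samples: any k-subset contains a passing one.
        return 1.0
    # 1 minus the probability that all k chosen samples are failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


# With 10 samples of which 3 pass, pass@1 is simply the pass rate.
print(pass_at_k(n=10, c=3, k=1))
print(pass_at_k(n=10, c=3, k=5))
```

For k = 1 the estimate reduces to c / n, which is why pass@1 reads as "how often is the first attempt right".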

| Benchmark | Focus | Scale/Size | Key Strength | Main Weakness |
|---|---|---|---|---|
| HumanEval | Python Logic | 164 Problems | High functional rigor | Python only |
| MBPP | Basic Python | 974 Problems | Larger dataset | Higher data leakage |
| SWE-bench | Software Engineering | 2,294 Issues | Real-world complexity | Very slow to run |
| CodeContests | Competitive Coding | Algorithmic | High difficulty | Less practical utility |

The Battle Against Data Leakage

One of the biggest problems in AI training is "memorization." If a benchmark uses problems that already exist on GitHub, the LLM might have seen the answer during its training phase. It's not solving the problem; it's just recalling a memory. This is called data leakage.

To fight this, the creators of HumanEval hand-crafted the problems. Instead of scraping the web, they wrote new tasks from scratch. Analysis shows that only about 0.7% of these problems overlap with standard GitHub corpora. Compare that to MBPP (Mostly Basic Python Problems), where leakage is estimated to be over 12%. When a model scores high on HumanEval, you can be much more confident it's actually "thinking" through the logic rather than just reciting a cached snippet of code.
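One simple way to look for this kind of overlap is to compare token n-grams between a benchmark problem and a reference corpus. The sketch below is a rough illustration only; it is not the method behind the overlap figures quoted above, and the whitespace tokenizer, the default window size of 5, and the helper names are all assumptions.

```python
from typing import Iterable, Set, Tuple


def token_ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Split on whitespace and return the set of n-token windows."""
    tokens = text.split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_fraction(problem: str, corpus_docs: Iterable[str], n: int = 5) -> float:
    """Fraction of the problem's n-grams that also appear somewhere in the corpus."""
    problem_grams = token_ngrams(problem, n)
    if not problem_grams:
        return 0.0
    corpus_grams: Set[Tuple[str, ...]] = set()
    for doc in corpus_docs:
        corpus_grams |= token_ngrams(doc, n)
    return len(problem_grams & corpus_grams) / len(problem_grams)


problem = "def add ( a , b ) : return a + b"
leaked_corpus = ["# utils\ndef add ( a , b ) : return a + b\n"]
clean_corpus = ["for item in items : print ( item )"]

print(overlap_fraction(problem, leaked_corpus))
print(overlap_fraction(problem, clean_corpus))
```

A high fraction flags a problem for manual review; it does not prove the model memorized it, and paraphrased copies can slip under an exact n-gram match.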

Where HumanEval Falls Short

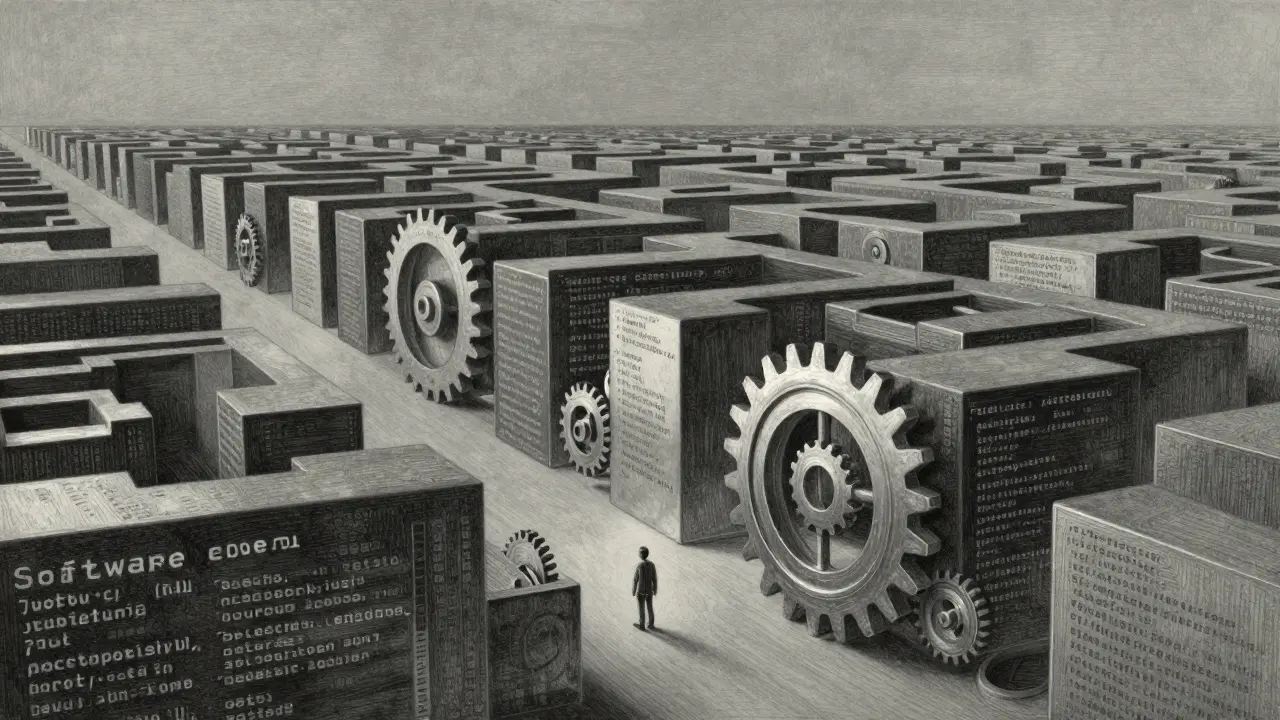

Despite its dominance, HumanEval isn't a perfect mirror of a developer's job. Most of the tasks are small, isolated functions. In the real world, software engineering is about navigating massive codebases, understanding architectural constraints, and integrating different files. You can't solve a bug in a 100,000-line project by writing one perfect 10-line function.

This gap has led to the creation of tools like SWE-bench, which asks models to resolve actual GitHub issues. While HumanEval takes about 1.2 seconds to evaluate a problem, SWE-bench can take 47 minutes because it requires the model to interact with a full environment. There is also the issue of "benchmark overfitting." Some models are fine-tuned specifically to ace HumanEval, resulting in nearly 99% scores, but when they are given a slightly different version of the same problem, their performance plummets to 52%.

Improving the Standard: EvalPlus and Beyond

Because the original HumanEval test suites were sometimes too lenient, researchers developed EvalPlus. This framework adds significantly more test cases (about 2.5x more than the original) to catch edge cases that the original benchmark missed. When models that previously scored 80% were put through EvalPlus, their scores often dropped by 15 to 22 percentage points. It turns out a lot of those "correct" answers were actually just lucky guesses that happened to pass the few tests provided.
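The effect is easy to reproduce on a toy scale. In the sketch below, a buggy median function passes a small base test set but fails once even-length inputs are added. The function, both test lists, and the `passes_all` helper are hand-written for illustration and do not come from HumanEval or EvalPlus.

```python
def buggy_median(values):
    """Incorrect for even-length input: returns an element instead of averaging."""
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


# A lenient suite that only covers odd-length lists.
base_tests = [([1, 3, 2], 2), ([5], 5)]

# Extra cases that exercise the even-length edge case.
extra_tests = [([1, 2, 3, 4], 2.5), ([4, 1], 2.5)]


def passes_all(func, tests) -> bool:
    """All-or-nothing check: every (input, expected) pair must match."""
    for arg, expected in tests:
        try:
            if func(arg) != expected:
                return False
        except Exception:
            return False
    return True


print("base suite:", passes_all(buggy_median, base_tests))
print("extended suite:", passes_all(buggy_median, base_tests + extra_tests))
```

The solution did not get worse between the two runs; the tests got better at exposing what was already wrong.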

We are also seeing the rise of multimodal benchmarks. HumanEval-V introduces visual context, testing if an AI can write code based on an image or a UI mockup. Early data shows a performance gap here: models are slightly less capable when they have to process visual information alongside logic, which suggests that coding is as much about understanding the context as it is about the syntax.

How to Use These Benchmarks in Practice

If you're a researcher or a developer wanting to test your own model, the workflow is fairly straightforward. You'll need Python 3.7+ and the official evaluation scripts. Depending on whether you use a local model or a commercial API, costs can range from essentially free to around $20 per full run. The biggest hurdles usually aren't the code, but API rate limits and environment configuration.
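As a rough sketch of the first half of that workflow, the code below writes model outputs to a JSONL file with one `task_id` and one `completion` per line, which is the sample format expected by OpenAI's `human-eval` scripts (assuming those are the official scripts you use). The `generate_completion` function is a hypothetical placeholder for your own model or API call, and `write_samples` is an illustrative helper, not part of any library.

```python
import json


def generate_completion(prompt: str) -> str:
    """Hypothetical stand-in: replace with a call to your model or API."""
    return "    pass\n"


def write_samples(problems: dict, path: str, samples_per_task: int = 1) -> int:
    """Write one JSON object per line: {"task_id": ..., "completion": ...}.

    `problems` maps task_id -> {"prompt": ...}. Returns the number of lines written.
    """
    count = 0
    with open(path, "w", encoding="utf-8") as f:
        for task_id, problem in problems.items():
            for _ in range(samples_per_task):
                record = {
                    "task_id": task_id,
                    "completion": generate_completion(problem["prompt"]),
                }
                f.write(json.dumps(record) + "\n")
                count += 1
    return count
```

With the `human-eval` package installed, the resulting file would then be scored from the command line with `evaluate_functional_correctness samples.jsonl`. Raising `samples_per_task` is what makes pass@k for larger k computable, and it is also what drives up API cost.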

For most people, the best way to use this data is as a screening tool. If a model scores below 50% on pass@1, it's likely to produce more bugs than helpful suggestions in your IDE. If it's above 80%, it's generally reliable for boilerplate and logic, but you still need to be the lead architect who reviews the final output. The goal isn't to find a model that replaces the programmer, but one that reduces the time-to-solution.

What is the difference between pass@1 and pass@100?

pass@1 measures the probability that the very first code snippet the model generates is correct. pass@100 means the model generated 100 different versions of the code, and at least one of them passed all the tests. High pass@100 with low pass@1 suggests a model that can find the answer eventually but isn't precise.

Is HumanEval only for Python?

The original HumanEval is exclusively Python. However, variants like HumanEval-XL have expanded the benchmark to cover 8 different programming languages to provide a more comprehensive view of a model's multilingual coding ability.

Why is data leakage a problem in code benchmarks?

Data leakage happens when the test problems were present in the model's training data (e.g., from GitHub). If the model has already "seen" the answer, it's simply memorizing and repeating code rather than demonstrating actual problem-solving ability.

Does a high HumanEval score mean a model is better for real-world work?

Not necessarily. HumanEval tests small, isolated functions. Real-world work involves large codebases and system integration. While a high score is a good sign of basic logic, it doesn't guarantee the model can handle complex software architecture or project-wide dependencies.

What is EvalPlus and why does it matter?

EvalPlus is a framework that expands the test cases for HumanEval. It catches more bugs by adding a massive amount of extra unit tests, revealing that some models were "cheating" or getting lucky with the original, smaller test sets.

Next Steps for LLM Testing

If you've mastered the basic benchmarks, the next move is to look into custom internal benchmarks. Most companies now use HumanEval for initial screening but then build their own tests using their actual company codebase. This is the only way to know if a model understands your specific naming conventions, internal libraries, and architectural patterns.

For those struggling with model reliability, focus on few-shot prompting. Providing the model with 2-3 examples of a correctly solved problem in the style of HumanEval can often boost a model's pass@1 score significantly, bridging the gap between a raw model and a truly helpful coding assistant.
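A few-shot prompt in this style can be assembled by concatenating solved examples (signature, docstring, and body) ahead of the unsolved target. The sketch below shows one possible layout; the example problems, the blank-line separator, and the `build_few_shot_prompt` helper are assumptions, and the best format will vary by model.

```python
def build_few_shot_prompt(examples, target_prompt: str) -> str:
    """Join solved examples, then append the unsolved target for the model to complete.

    Each example is a dict with a "prompt" (signature + docstring)
    and a "solution" (function body).
    """
    parts = [ex["prompt"] + ex["solution"] for ex in examples]
    parts.append(target_prompt)
    return "\n\n".join(parts)


EXAMPLES = [
    {
        "prompt": 'def inc(x):\n    """Return x + 1."""\n',
        "solution": "    return x + 1\n",
    },
    {
        "prompt": 'def double(x):\n    """Return x times 2."""\n',
        "solution": "    return x * 2\n",
    },
]

TARGET = 'def square(x):\n    """Return x squared."""\n'

prompt = build_few_shot_prompt(EXAMPLES, TARGET)
print(prompt)
```

Because the prompt ends on an open function body, the model's most natural continuation is the missing implementation. Keep the examples distinct from the benchmark problems themselves, or you reintroduce the leakage the benchmark was designed to avoid.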