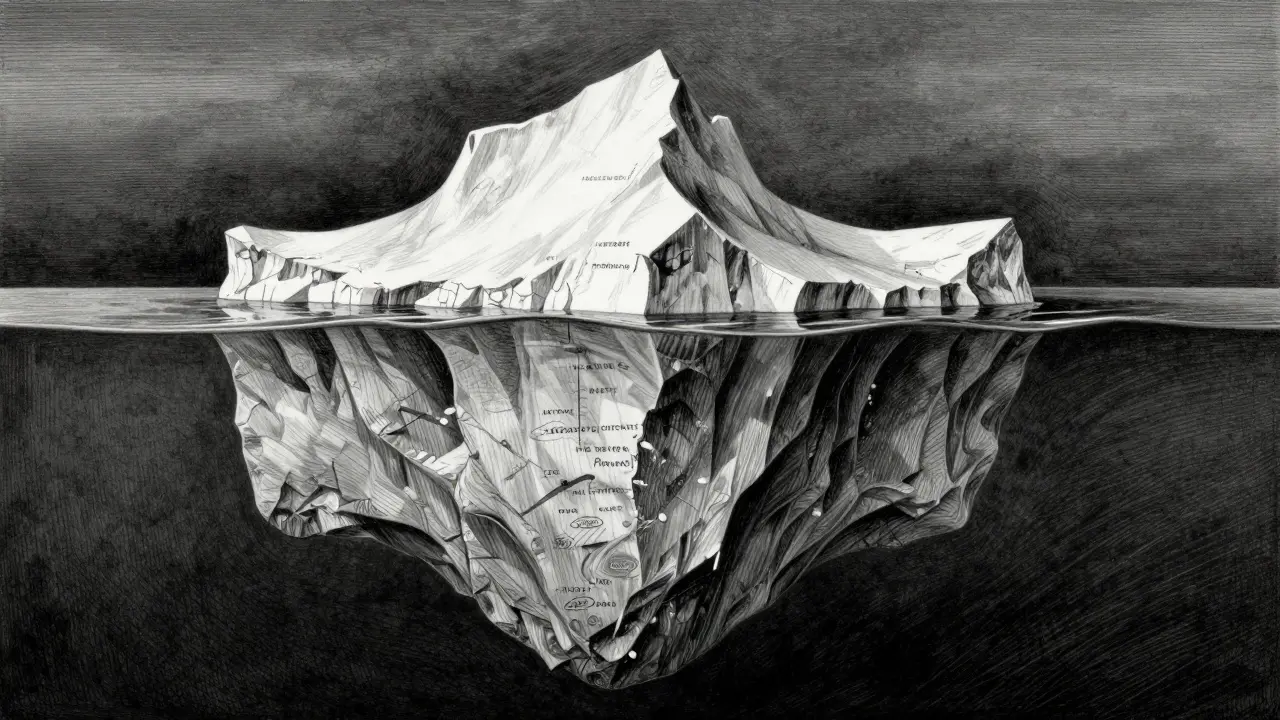

Domain-Specific RAG: Building Compliant AI for Healthcare, Finance, and Legal

Explore how domain-specific retrieval-augmented generation (RAG) supports compliant AI in the healthcare, finance, and legal sectors. Learn about architecture, benchmarks, and ways to overcome implementation challenges.