Imagine asking your database a question in plain English, such as "Show me last quarter’s sales by region, but only for customers who bought more than $500," and getting back the exact results without writing a single line of SQL. That’s not science fiction anymore. It’s Natural Language to Schema (NL2Schema), and it’s changing how businesses interact with their data.

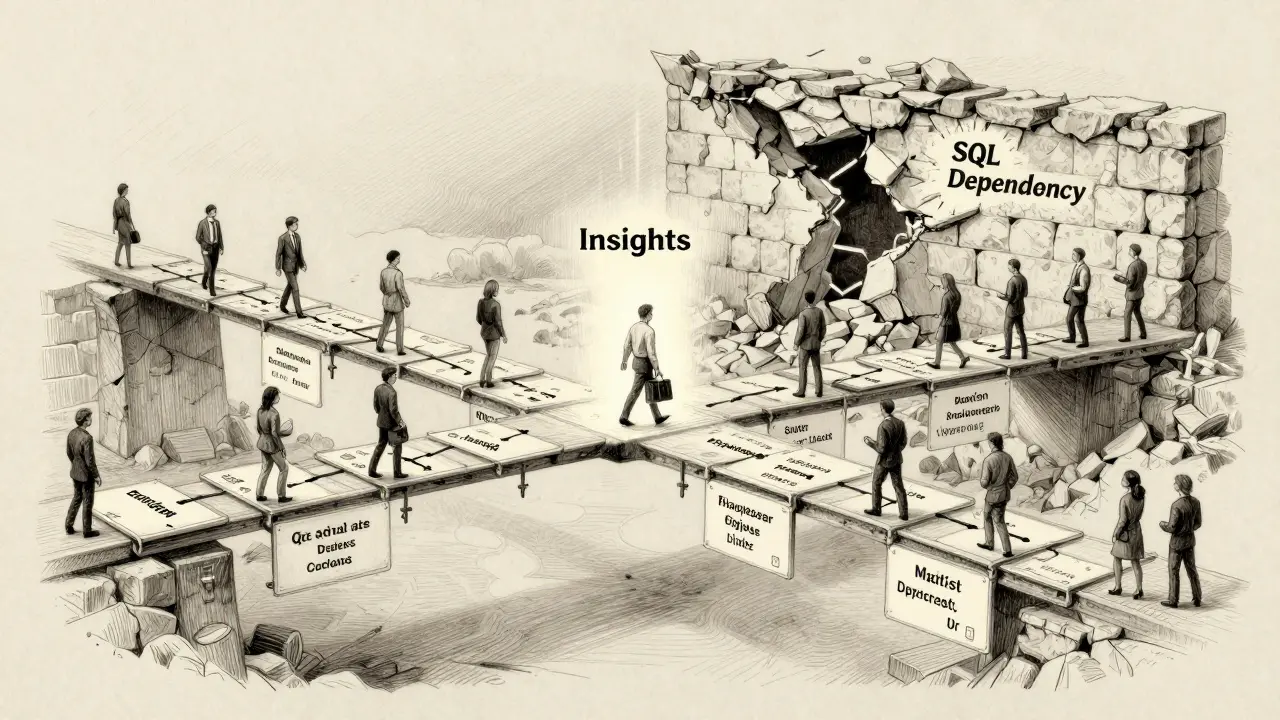

For years, only data engineers and analysts with SQL skills could pull meaningful insights from databases. Everyone else relied on them to run reports, which meant delays, bottlenecks, and frustration. Now, tools powered by large language models (LLMs) let non-technical users speak to databases like they’re talking to a colleague. But listening isn’t enough: the model needs to understand the structure behind the data. That’s where schemas and ER diagrams come in.

What Exactly Is NL2Schema?

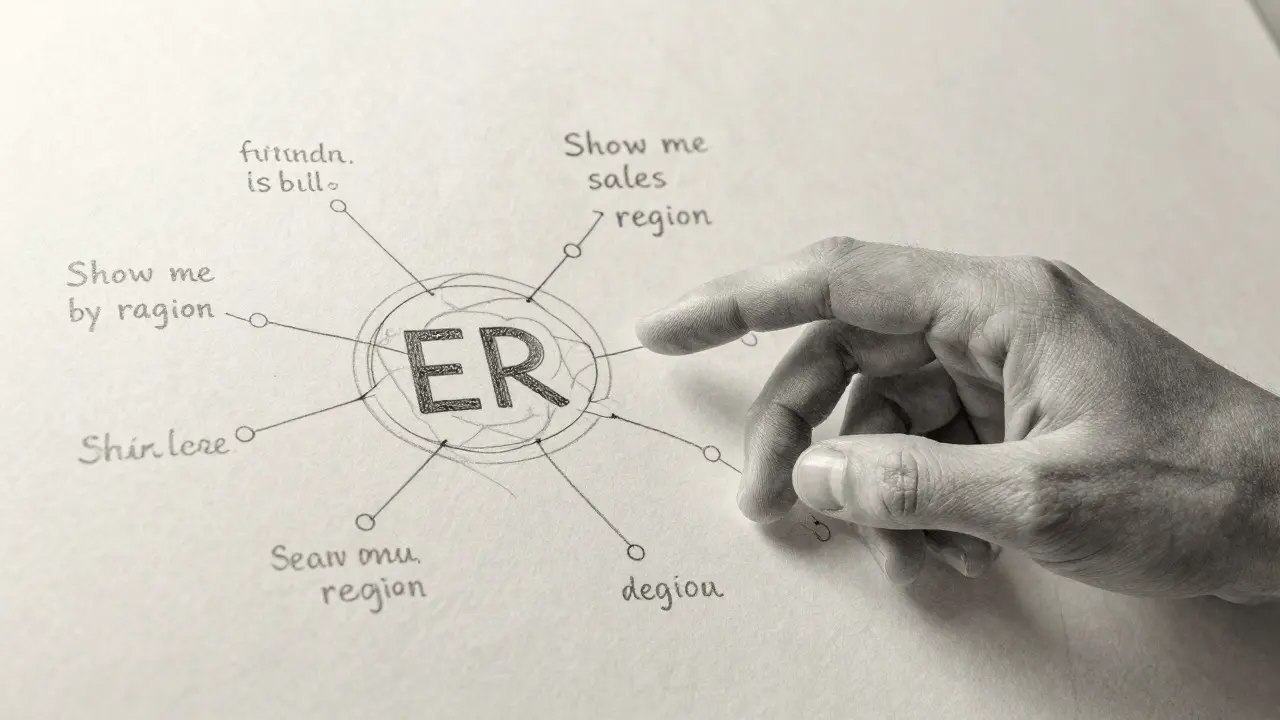

NL2Schema isn’t just about turning words into SQL. It’s about making the database’s structure visible and usable through natural language. At its core, it’s a two-step process. First, the system must understand the schema: the blueprint of tables, columns, keys, and relationships. Second, it must map your question to that structure accurately.

Think of it like giving directions to someone who’s never been to your house. You can’t just say, "Go to the blue building." You need to describe the street, the neighbor’s red mailbox, the driveway with the cracked concrete. That’s the schema: the details that make location meaningful. Without it, even the smartest AI will guess wrong.

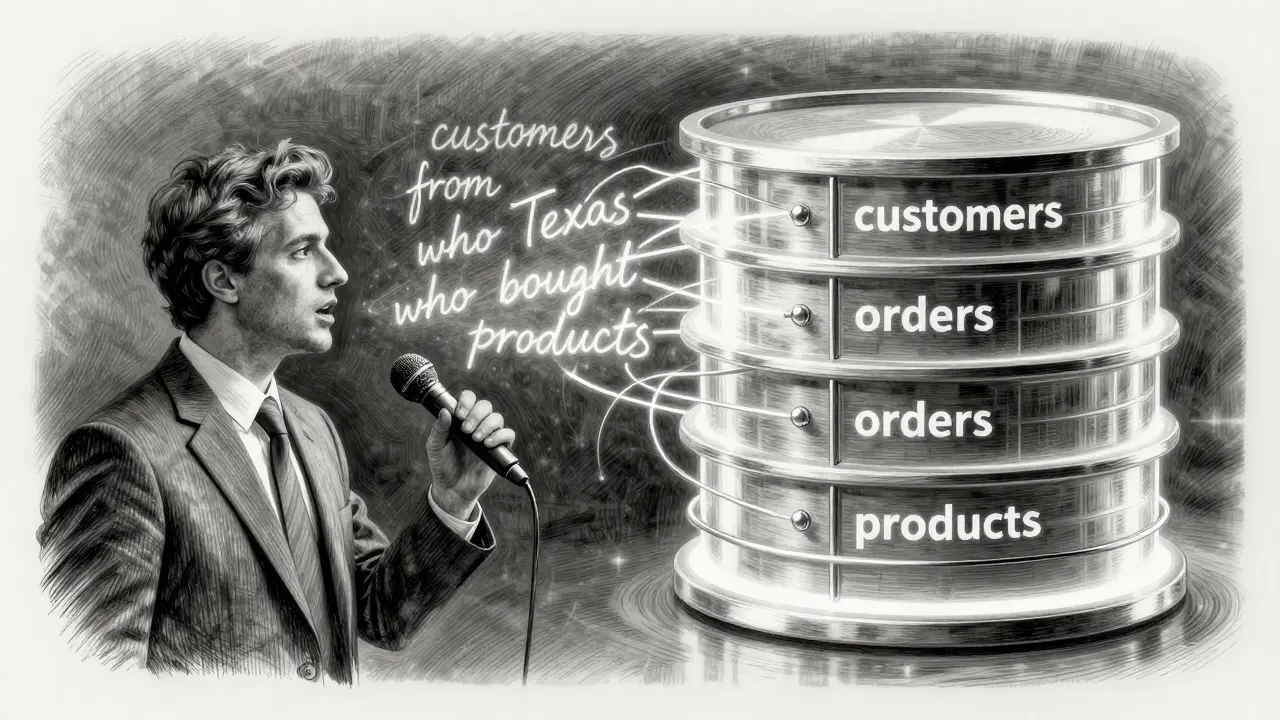

Modern NL2Schema systems use real-time schema extraction to pull in table names, column types, foreign keys, and even business rules. For example, if you have a table called orders with columns like order_id, customer_id, and order_date, the system doesn’t just see those labels. It knows that customer_id links to a customers table and that order_date is a date field, not text.
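As a rough illustration of what schema extraction means in practice, here is a minimal sketch using Python’s built-in sqlite3 module. The in-memory database, the two tables, and the `extract_schema` helper are assumptions made up for this example. A production system would read from its own database or data catalog instead.

```python
import sqlite3

def extract_schema(conn):
    """Return {table: {"columns": {name: type}, "foreign_keys": [(col, ref_table, ref_col)]}}."""
    schema = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' AND name NOT LIKE 'sqlite_%'"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        columns = {row[1]: row[2] for row in conn.execute(f'PRAGMA table_info("{table}")')}
        # PRAGMA foreign_key_list rows: (id, seq, table, from, to, on_update, on_delete, match)
        fks = [(row[3], row[2], row[4])
               for row in conn.execute(f'PRAGMA foreign_key_list("{table}")')]
        schema[table] = {"columns": columns, "foreign_keys": fks}
    return schema

# Hypothetical demo database matching the orders/customers example in the text
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, state TEXT);
CREATE TABLE orders (
    order_id INTEGER PRIMARY KEY,
    customer_id INTEGER REFERENCES customers(customer_id),
    order_date DATE
);
""")
schema = extract_schema(conn)
print(schema["orders"])
```

The result records both that order_date is declared as a date and that orders.customer_id points at customers.customer_id, which is the kind of structure an LLM prompt needs alongside the raw column names.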

Why ER Diagrams Matter More Than You Think

ER diagrams (Entity-Relationship diagrams) are the visual version of a database schema. They show tables as boxes, columns inside them, and lines connecting related tables. These aren’t just pretty pictures; they’re the roadmap for how data flows.

Here’s where most tools fail: they treat schema as a static list. But real databases have relationships: many-to-one, many-to-many, optional joins. If you ask, "What products did customers from Texas buy last month?", the system needs to know that customers connects to orders, which connects to products. If the ER diagram isn’t properly mapped, the query might join the wrong tables or miss a critical link entirely.
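One way to picture ER awareness is as a graph search: tables are nodes, foreign keys are edges, and the join chain is the shortest path between the tables a question mentions. The sketch below assumes a simplified set of foreign keys (the column names, including orders.product_id, are hypothetical) and is not how any particular product does it.

```python
from collections import deque

# Hypothetical foreign keys: (child_table, child_column, parent_table, parent_column)
FOREIGN_KEYS = [
    ("orders", "customer_id", "customers", "customer_id"),
    ("orders", "product_id", "products", "product_id"),
    ("products", "supplier_id", "suppliers", "supplier_id"),
]

def build_graph(fks):
    """Turn foreign keys into an undirected graph: table -> [(neighbor, join condition)]."""
    graph = {}
    for child, child_col, parent, parent_col in fks:
        condition = f"{child}.{child_col} = {parent}.{parent_col}"
        graph.setdefault(child, []).append((parent, condition))
        graph.setdefault(parent, []).append((child, condition))
    return graph

def join_path(graph, start, goal):
    """Breadth-first search for the shortest join chain; returns None if unreachable."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        table, path = queue.popleft()
        if table == goal:
            return path
        for neighbor, condition in graph.get(table, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [(neighbor, condition)]))
    return None

graph = build_graph(FOREIGN_KEYS)
path = join_path(graph, "customers", "products")
from_clause = "FROM customers " + " ".join(f"JOIN {t} ON {c}" for t, c in path)
print(from_clause)
```

For the Texas question, the search routes customers through orders to reach products, and it never touches suppliers because that table is not on the path.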

According to a 2024 study from the Allen Institute for AI, accuracy drops by 0.8% for every additional table in a schema. Taken at face value, a database with 150 tables would face a 120% accuracy hit, which is more than there is to lose, so the linear rule can’t hold at that scale. The practical point stands: without proper ER awareness, accuracy on large schemas collapses. That’s why leading tools now integrate directly with data catalogs like Alation and Collibra. These systems don’t just store schema. They track changes, document relationships, and even suggest how tables should be joined based on usage patterns.

How It Works: The Architecture Behind the Magic

There’s no single way to do NL2Schema, but most enterprise systems follow a similar pattern:

- Data Preprocessing - Your question is cleaned, normalized, and tagged. "Last quarter" becomes a date range: for a question asked in the second quarter of 2026, that is January 1 to March 31, 2026.

- Schema Extraction - The system pulls the latest schema from the database or data catalog. This includes column names, data types, primary/foreign keys, and any custom annotations (like "customer_status = active").

- Query Generation - Using the schema and your intent, the LLM builds a SQL query. It doesn’t just guess; it references the structure as a human would.

- Validation & Safety - Generated SQL is checked for SQL injection, unintended joins, or PII exposure. Over 98% of enterprise tools now auto-sandbox risky queries.

- Execution & Feedback - The query runs. If the result doesn’t match what you expected, the system learns. Did you mean "revenue" instead of "sales"? That gets logged as a hint for next time.
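The date handling in the preprocessing step can be sketched in a few lines. This assumes calendar quarters (a fiscal calendar would need different boundaries), and `last_quarter` is a hypothetical helper written for this example, not part of any tool named here.

```python
from datetime import date, timedelta

def last_quarter(today):
    """Return (start, end) of the calendar quarter before the one containing `today`."""
    current_q = (today.month - 1) // 3  # 0 for Jan-Mar, 1 for Apr-Jun, and so on
    if current_q == 0:
        year, q = today.year - 1, 3     # the previous quarter is Q4 of last year
    else:
        year, q = today.year, current_q - 1
    start = date(year, 3 * q + 1, 1)
    # The end is the day before the first day of the following quarter
    next_start = date(year + 1, 1, 1) if q == 3 else date(year, 3 * q + 4, 1)
    return start, next_start - timedelta(days=1)

start, end = last_quarter(date(2026, 5, 15))
print(start, end)  # 2026-01-01 2026-03-31
```

Resolving the phrase to concrete dates before query generation means the LLM only has to place two literals in a WHERE clause instead of reasoning about calendars.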

Tools like Oracle’s Schema Intelligence Engine and Microsoft’s Azure OpenAI Schema-Aware Prompting now auto-generate ER diagrams from natural language. Ask for a diagram of "how orders relate to inventory," and the system builds it in seconds. That’s a game-changer for teams that don’t have dedicated DBAs.

Real-World Accuracy: What Works and What Doesn’t

Not all NL2Schema tools are created equal. Benchmarks show big gaps:

| Tool | Single-Table Queries | Multi-Table Joins (3+ tables) | Complex Aggregations | Schema Drift Handling |
|---|---|---|---|---|
| Oracle Database 23c + Schema Intelligence | 92.1% | 84.5% | 81.3% | Yes (auto-updates) |
| Microsoft Azure OpenAI | 89.7% | 76.8% | 74.2% | Partial (manual refresh) |
| Chat2DB (Open Source) | 78.4% | 65.1% | 59.7% | No |
| AWS Bedrock + RAG | 87.9% | 80.2% | 78.6% | Yes (real-time catalog sync) |

Simple queries? Most tools nail them. For filtering by date, summing sales, or listing customers, accuracy exceeds 85% for most of the tools above. But complexity kills. Queries involving recursive logic, window functions, or cross-database joins? Accuracy plummets below 50%. A 2024 Gartner report found that 68% of enterprise databases have over 200 tables. Most LLMs can’t process that much context at once. That’s why tools that use Retrieval-Augmented Generation (RAG), such as AWS and Oracle, pull schema chunks on demand instead of dumping everything into the prompt.
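To make the idea of pulling schema chunks on demand concrete, here is a toy retriever. It ranks table descriptions by word overlap with the question, which is a deliberately simple stand-in for the embedding-based search a real RAG setup would more likely use. The table descriptions and function names are assumptions for this example.

```python
import re

# Hypothetical schema descriptions, one "chunk" per table
SCHEMA_CHUNKS = {
    "orders": "orders: order_id, customer_id, order_date, total_amount. One row per customer order.",
    "customers": "customers: customer_id, name, state, region. One row per customer.",
    "inventory": "inventory: product_id, warehouse_id, stock_level. Current stock per warehouse.",
    "employees": "employees: employee_id, manager_id, salary. HR records.",
}

def tokens(text):
    """Lowercase alphabetic words; underscores split, so order_id yields 'order' and 'id'."""
    return set(re.findall(r"[a-z]+", text.lower()))

def top_chunks(question, chunks, k=2):
    """Return the k table names whose descriptions share the most words with the question."""
    question_words = tokens(question)
    ranked = sorted(
        chunks,
        key=lambda name: len(question_words & tokens(chunks[name])),
        reverse=True,
    )
    return ranked[:k]

selected = top_chunks("Show total order amount by customer region", SCHEMA_CHUNKS)
prompt_schema = "\n".join(SCHEMA_CHUNKS[name] for name in selected)
print(prompt_schema)
```

Only the orders and customers descriptions reach the prompt here. With 200 tables the same filter keeps the context small, at the cost of missing a table whose description uses different vocabulary than the question.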

Who’s Actually Using This, and How

It’s not just tech teams. The biggest users are business analysts, marketers, and operations managers who need data fast:

- A retail chain in Texas used NL2Schema to let store managers ask, "Which items are low in stock but have high returns?", cutting inventory review time from 3 days to 15 minutes.

- A healthcare provider let clinical analysts query patient records with natural language like, "Show me patients diagnosed with hypertension who haven’t had a follow-up in 6 months". They reduced report generation time by 65%.

- A financial services firm let compliance officers ask, "List all transactions over $10K from accounts opened in 2025," without needing to write SQL or wait for IT.

But success isn’t automatic. Companies that saw the biggest gains had three things in common:

- They documented their schema with clear business terms (e.g., "customer_status" wasn’t just a flag; it was documented as "active," "inactive," or "pending verification").

- They integrated their data catalog so the AI always saw the latest ER diagram.

- They trained the system with real examples: "Here’s how we asked last month, here’s what we got, here’s what we meant."
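The first and third habits above can be combined into a single prompt. The sketch below is one possible layout, not a format any vendor prescribes: `build_prompt`, the column note, and the logged example are all hypothetical.

```python
def build_prompt(question, column_docs, examples):
    """Assemble a text prompt from schema notes, logged examples, and the new question."""
    lines = ["Translate the question into SQL using the schema notes.", "", "Schema notes:"]
    lines += [f"- {column}: {meaning}" for column, meaning in column_docs.items()]
    lines += ["", "Examples:"]
    for example in examples:
        lines += [
            f"Q: {example['asked']}",
            f"Meant: {example['meant']}",
            f"SQL: {example['sql']}",
        ]
    lines += ["", f"Q: {question}", "SQL:"]
    return "\n".join(lines)

# Business meaning for a column, written once and reused in every prompt
column_docs = {
    "customers.customer_status": "one of 'active', 'inactive', 'pending verification'",
}
# A logged interaction: how it was asked, what was meant, what SQL was correct
examples = [
    {
        "asked": "How many live customers do we have?",
        "meant": "customers whose status is 'active'",
        "sql": "SELECT COUNT(*) FROM customers WHERE customer_status = 'active'",
    },
]
prompt = build_prompt("List customers awaiting verification", column_docs, examples)
print(prompt)
```

The logged example teaches the model that "live" means the 'active' status, and the column note gives it the exact value to use for "awaiting verification."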

Where It Still Falls Short

Don’t get fooled by the hype. NL2Schema has serious limits:

- Ambiguous terms - "Show me last year’s numbers": which last year? Fiscal year? Calendar year? The system might pick the wrong one.

- Business jargon - If your company calls "revenue" "income," "sales," and "gross," the AI won’t know unless you tell it.

- Schema drift - If a column gets renamed or a table is merged, many tools won’t notice until you manually refresh them.

- Security blind spots - Even with validation, poorly designed prompts can leak data. One company accidentally let users ask, "What’s my manager’s salary?" and get an answer.
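Schema drift in particular is cheap to detect if you keep a snapshot of the schema the prompts were built against. Here is a minimal sketch, assuming snapshots shaped as {table: {column: type}}. The `diff_schema` helper and the sample data are made up for illustration.

```python
def diff_schema(old, new):
    """Compare two {table: {column: type}} snapshots and describe what changed."""
    changes = []
    for table in sorted(old.keys() - new.keys()):
        changes.append(f"table removed: {table}")
    for table in sorted(new.keys() - old.keys()):
        changes.append(f"table added: {table}")
    for table in sorted(old.keys() & new.keys()):
        old_cols, new_cols = old[table], new[table]
        for col in sorted(old_cols.keys() - new_cols.keys()):
            changes.append(f"column removed: {table}.{col}")
        for col in sorted(new_cols.keys() - old_cols.keys()):
            changes.append(f"column added: {table}.{col}")
        for col in sorted(old_cols.keys() & new_cols.keys()):
            if old_cols[col] != new_cols[col]:
                changes.append(
                    f"type changed: {table}.{col} {old_cols[col]} -> {new_cols[col]}"
                )
    return changes

# A rename shows up as one removal plus one addition
yesterday = {"orders": {"order_id": "INTEGER", "order_date": "DATE"}}
today = {"orders": {"order_id": "INTEGER", "ordered_at": "DATE"}}
drift = diff_schema(yesterday, today)
print(drift)
```

Any non-empty result is a signal to refresh the schema the LLM sees before the next question references a column that no longer exists.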

Experts like Dr. H.V. Jagadish warn that current systems treat schema as metadata, not as a semantic foundation. They can’t reason about relational algebra the way a human database designer can. That’s why the most successful teams use NL2Schema as a tool, not a replacement for understanding data.

Getting Started: What You Need to Know

If you’re thinking of trying NL2Schema:

- Start small - Pick one high-impact table (like orders or customers) and build prompts around it.

- Document your schema - Add comments to columns, for example "orders.customer_id: foreign key to the primary key of the customers table".

- Use a data catalog - Even free tools like Apache Atlas or Metabase can help tag relationships.

- Test with real users - Ask them to phrase questions naturally. You’ll be surprised what they say.

- Always validate - Never run generated SQL without checking it. Use a staging environment first.
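For the validation step, one option is to let the database itself refuse anything that isn’t a plain read. The sketch below uses SQLite’s authorizer hook through Python’s sqlite3 module. The employees table and the blocked salary column are hypothetical, and the policy is intentionally strict: it rejects writes, DDL, and even SQL functions, so a real deployment would need to widen it.

```python
import sqlite3

BLOCKED_COLUMNS = {("employees", "salary")}  # hypothetical sensitive columns

def read_only_authorizer(action, arg1, arg2, db_name, source):
    # For SQLITE_READ, arg1 is the table name and arg2 is the column name
    if action == sqlite3.SQLITE_READ:
        if (arg1, arg2) in BLOCKED_COLUMNS:
            return sqlite3.SQLITE_DENY
        return sqlite3.SQLITE_OK
    if action == sqlite3.SQLITE_SELECT:
        return sqlite3.SQLITE_OK
    # Everything else (INSERT, UPDATE, DELETE, DDL, PRAGMA, functions) is refused
    return sqlite3.SQLITE_DENY

def run_guarded(conn, sql):
    """Run generated SQL; return (rows, None) on success or (None, error message)."""
    try:
        return conn.execute(sql).fetchall(), None
    except sqlite3.Error as exc:
        return None, str(exc)

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE employees (name TEXT, salary INTEGER);
INSERT INTO employees VALUES ('Ada', 100);
""")
conn.set_authorizer(read_only_authorizer)  # installed after the setup statements

ok_rows, ok_err = run_guarded(conn, "SELECT name FROM employees")
_, salary_err = run_guarded(conn, "SELECT salary FROM employees")
_, delete_err = run_guarded(conn, "DELETE FROM employees")
print(ok_rows, salary_err, delete_err)
```

Because the check runs when the statement is compiled, the DELETE is rejected before it touches any rows. The same idea carries over to other databases through read-only roles and column-level grants.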

Enterprise tools cost $45K-$120K/year. But open-source options like Chat2DB or the NL2SQL Handbook on GitHub can get you started for free. Just know: you’ll spend more time tuning prompts than you think. Microsoft’s docs say it takes 40-60 hours for a data engineer to get a production-ready setup.

The Future: What’s Coming in 2025-2026

The next wave of NL2Schema isn’t about better prompts. It’s about smarter schemas.

- By 2026, 70% of tools will auto-correct schema changes based on user feedback.

- ER diagrams will be generated from natural language descriptions, with no more Visio files.

- LLMs will start learning your business logic: "If a customer has two orders in one day, treat them as a bundle."

But the real breakthrough? When the system doesn’t just answer your question but asks you to clarify. "You said ‘last month.’ Do you mean calendar month or billing cycle?" That’s when NL2Schema stops being a shortcut and becomes a true collaborator.
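A very rough version of that clarifying behavior needs no LLM at all: keep a list of phrases your organization knows to be ambiguous and ask before generating SQL. The phrase list and the `clarifying_questions` helper below are assumptions for illustration.

```python
# Hypothetical phrases that mean different things to different teams
AMBIGUOUS_TERMS = {
    "last month": "Do you mean calendar month or billing cycle?",
    "last year": "Do you mean fiscal year or calendar year?",
}

def clarifying_questions(question):
    """Return follow-up questions for any known ambiguous phrase in the user's question."""
    lowered = question.lower()
    return [prompt for term, prompt in AMBIGUOUS_TERMS.items() if term in lowered]

follow_ups = clarifying_questions("Show me sales for last month")
print(follow_ups)
```

If the list comes back empty, the question goes straight to query generation. Otherwise the user answers first, and the answer can be logged as a hint for next time.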

Can I use NL2Schema with my existing database?

Yes, most NL2Schema tools connect via standard interfaces like JDBC, ODBC, or REST APIs. They work with Oracle, SQL Server, PostgreSQL, MySQL, and cloud databases like BigQuery and Aurora. The key is whether your database has a well-documented schema. If tables are poorly named or relationships aren’t defined, accuracy will suffer. Start by cleaning up your data model before adding NL2Schema.

Do I need to know SQL to use NL2Schema?

No. One of the main goals of NL2Schema is to let non-technical users access data without SQL. However, understanding basic concepts like tables, columns, and joins helps you ask better questions. If you know what "JOIN" means, you’ll phrase queries more clearly and get better results.

Is NL2Schema secure?

Security depends on implementation. Enterprise tools automatically block SQL injection, filter PII, and sandbox queries. But if you’re using a basic open-source tool without validation, you’re at risk. Always ensure your system checks generated SQL before execution. Many organizations now require a human-in-the-loop review for any query that accesses sensitive data.

How accurate is NL2Schema really?

For simple queries (single tables, basic filters, aggregations), accuracy is often above 85%. For joins across three or more tables, the benchmarks above range from roughly 65% to 85%, and for recursive logic, window functions, or cross-database queries, accuracy falls below 50%. Tools with real-time schema awareness (like AWS and Oracle) perform significantly better than those that just paste schema into prompts. Accuracy also depends on how well your data is documented. Poorly labeled columns can cut accuracy in half.

What’s the difference between NL2SQL and NL2Schema?

NL2SQL only converts natural language into SQL queries. NL2Schema goes further: it understands the database structure (tables, columns, keys, relationships) and uses that knowledge to build better, more accurate queries. Think of NL2SQL as a translator, and NL2Schema as a translator who also knows the local laws and customs. The latter avoids mistakes the former wouldn’t even notice.

NL2Schema isn’t magic. It’s a bridge. And like any bridge, it only works if both sides are built solidly. The data must be clean. The schema must be clear. The prompts must be thoughtful. When those pieces come together, you don’t just get faster answers. You get better decisions.