Running a massive Large Language Model (LLM) like LLaMA-70B used to mean renting several A100 GPUs: at 16-bit precision, the weights alone take roughly 140 GB. That is expensive, energy-intensive, and frankly, impractical for most businesses or developers working on a budget. But the landscape has shifted dramatically since 2024. You no longer need a supercomputer to run powerful AI. Thanks to model compression, you can shrink these behemoths down to fit on a laptop, a smartphone, or a modest cloud server without losing much of their intelligence.

Model compression isn't just about saving money; it's about accessibility. It allows us to deploy AI at the edge (on your phone, in your car, or in IoT devices), where sending data back to a central server is too slow or violates privacy laws. The core challenge? Reducing the size and computational load while keeping the model smart enough to be useful. If you compress it too much, the AI starts hallucinating or giving nonsensical answers. If you don't compress it enough, you're still paying for those expensive GPUs.

There are three main pillars of this technology: quantization, pruning, and knowledge distillation. Each works differently, each has trade-offs, and choosing the right one depends entirely on what you are trying to build. Let’s break them down so you can decide which approach fits your project.

Quantization: Trading Precision for Speed

Think of quantization as lowering the resolution of an image. You might not notice the difference between a 4K photo and a 1080p photo on a small screen, but the file size drops significantly. In machine learning, we do something similar with numbers.

LLM weights are usually stored as 32-bit (FP32) or, more commonly today, 16-bit (FP16/BF16) floating-point numbers. These formats are precise but take up a lot of memory. Quantization converts the weights into smaller formats, like 8-bit integers (INT8) or even 4-bit integers (INT4). This doesn't change the architecture of the model; it just changes how the computer stores and calculates the values.

| Bit Width | Compression Ratio (vs. FP32) | Accuracy Impact | Hardware Support |
|---|---|---|---|
| FP16 / BF16 | 2x | Negligible | Standard GPUs |
| INT8 | 4x | Moderate | Modern CPUs/GPUs |
| INT4 | 8x | Noticeable without calibration | Specialized Accelerators |
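
To make the ratios in the table concrete, here is a minimal sketch of the arithmetic for a 70-billion-parameter model. It counts weight storage only (activations and the KV cache are ignored), and the names `BITS_PER_WEIGHT`, `weight_memory_gb`, and `compression_vs_fp32` are illustrative, not part of any library.

```python
# Bits used to store one weight in each format (weights only).
BITS_PER_WEIGHT = {"FP32": 32, "FP16": 16, "INT8": 8, "INT4": 4}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 10^9 bytes)."""
    return num_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def compression_vs_fp32(fmt: str) -> float:
    """Compression ratio relative to an FP32 copy of the same weights."""
    return BITS_PER_WEIGHT["FP32"] / BITS_PER_WEIGHT[fmt]


for fmt in BITS_PER_WEIGHT:
    gb = weight_memory_gb(70e9, fmt)
    print(f"{fmt}: {gb:.0f} GB, {compression_vs_fp32(fmt):.0f}x vs FP32")
# FP32: 280 GB, FP16: 140 GB, INT8: 70 GB, INT4: 35 GB
```

Real quantized checkpoints are slightly larger than this estimate because they also store scales and other metadata.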

The beauty of quantization is that it often requires zero retraining. Post-Training Quantization (PTQ) lets you take a pre-trained model, convert the weights, and run it immediately. Tools like Hugging Face's Optimum or NVIDIA's TensorRT-LLM make this straightforward. For many applications, INT8 quantization provides a sweet spot: compared with FP16, it halves memory usage and can roughly double inference speed on hardware with native INT8 support, typically with less than a 5% drop in accuracy.
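
The core of weight conversion can be sketched in a few lines. The example below is a toy version of symmetric, per-tensor INT8 quantization written in plain Python; it is not how Optimum or TensorRT-LLM implement it (production toolchains typically use per-channel or per-group scales and optimized kernels), and the function names are made up for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # one float scale for the whole tensor
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


weights = [0.42, -1.30, 0.07, 0.95, -0.51]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding to the nearest grid point bounds the error by half a step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, round(scale, 5), round(max_error, 5))
```

Each weight now needs one byte plus a single shared scale, and the reconstruction error per weight is at most half of `scale`.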

However, going below 4 bits gets tricky. While Apple’s research showed that 3-bit precision is achievable with negligible perplexity degradation, doing this usually requires specialized calibration techniques. Without proper calibration, the model loses its ability to handle nuanced language tasks. Also, not all hardware supports low-bit operations equally. You’ll get the best performance on modern accelerators like NVIDIA Ampere and newer GPUs or Apple’s Neural Engine.
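
"Calibration" covers many techniques. One simple variant, sketched below under the assumption that reconstruction error on a sample of values is a reasonable proxy for quality, is to search for a clipping threshold instead of always using the largest absolute value. This is an illustration only, not the method used in the Apple work mentioned above; `fake_quantize` and `calibrate_clip` are hypothetical helper names.

```python
def fake_quantize(values, clip, bits=4):
    """Clip to [-clip, clip], round to a signed `bits`-bit grid, then dequantize."""
    levels = 2 ** (bits - 1) - 1  # 7 positive levels for 4-bit
    scale = clip / levels
    return [round(max(-clip, min(clip, v)) / scale) * scale for v in values]


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def calibrate_clip(calibration_values, bits=4, steps=20):
    """Grid-search the clipping threshold that minimizes reconstruction MSE."""
    max_abs = max(abs(v) for v in calibration_values)
    candidates = [max_abs * (i / steps) for i in range(1, steps + 1)]
    return min(
        candidates,
        key=lambda c: mse(calibration_values, fake_quantize(calibration_values, c, bits)),
    )


# Many small values plus a single outlier, a common pattern in LLM tensors.
values = [((i % 21) - 10) / 100 for i in range(210)] + [1.0]

absmax = max(abs(v) for v in values)
best_clip = calibrate_clip(values)
err_absmax = mse(values, fake_quantize(values, absmax))
err_calibrated = mse(values, fake_quantize(values, best_clip))
print(best_clip, err_absmax, err_calibrated)
```

On this data the searched threshold ends up below the absolute maximum: clipping the outlier costs some error on one value but gives the many small values a much finer grid.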

Pruning: Removing the Fat

If quantization is about simplifying the numbers, pruning is about removing unnecessary parts of the model itself. Imagine a brain where some neurons fire constantly while others never do. Pruning cuts out the inactive neurons.

In technical terms, pruning removes weights, neurons, or entire layers from the neural network. There are two main types:

- Unstructured Pruning: Removes individual weights. This creates a "sparse" model where many values are zero. It’s flexible but hard to speed up because most hardware isn’t optimized to skip over zeros efficiently.

- Structured Pruning: Removes entire rows, columns, or layers. This keeps the model dense but smaller. It’s easier to accelerate on standard hardware but offers less flexibility in compression ratios.
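
The difference between the two types is easiest to see on a tiny weight matrix. The sketch below uses weight magnitude and row norm as importance scores, which is one common heuristic among several; the function names are illustrative.

```python
def magnitude_prune(matrix, sparsity):
    """Unstructured: zero the smallest-magnitude weights; the shape is unchanged.

    Ties at the threshold may zero slightly more than the requested fraction.
    """
    flat = sorted(abs(w) for row in matrix for w in row)
    k = int(len(flat) * sparsity)  # number of weights to zero
    if k == 0:
        return [row[:] for row in matrix]
    threshold = flat[k - 1]
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in matrix]


def prune_rows(matrix, keep):
    """Structured: keep the `keep` rows with the largest L2 norm; result is smaller and dense."""
    norms = [sum(w * w for w in row) for row in matrix]
    top = sorted(range(len(matrix)), key=lambda i: norms[i], reverse=True)[:keep]
    return [matrix[i][:] for i in sorted(top)]  # preserve original row order


W = [
    [0.90, -0.05, 0.40],
    [0.01, 0.02, -0.03],
    [-0.70, 0.60, 0.08],
    [0.10, -0.20, 0.05],
]

sparse = magnitude_prune(W, sparsity=0.5)  # still 4x3, half the entries are zero
dense = prune_rows(W, keep=2)              # now 2x3, no zeros inserted
print(sparse)
print(dense)
```

The unstructured result still occupies a 4x3 matrix unless it is stored in a sparse format, while the structured result is a genuinely smaller dense matrix that any standard matrix-multiply kernel can use.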

Recent methods like FLAP (Fluctuation-based Adaptive Structured Pruning) have improved this process by searching for the pruned structure adaptively, which avoids the retraining step that was historically a major pain point. Even so, pruning is still more complex than quantization, and with most other methods you need to fine-tune the model afterward to recover performance. One developer on Reddit noted that aggressive pruning required "2-3 weeks of fine-tuning" to restore the model’s capabilities.

Pruning shines when you have specific hardware constraints. For example, if you’re deploying on an edge device with limited memory bandwidth, a pruned model can be significantly faster because there’s simply less data to move around. But beware: aggressive pruning beyond 60% sparsity can lead to catastrophic performance degradation, especially in general-purpose tasks.

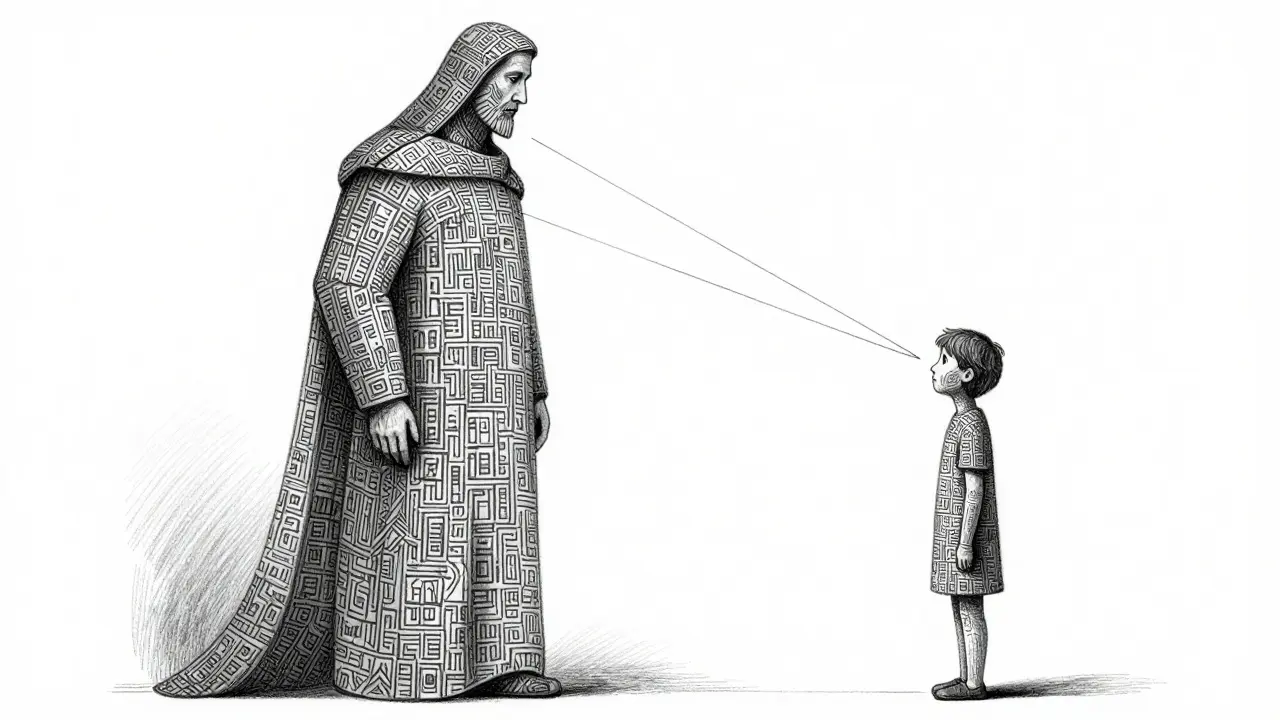

Knowledge Distillation: Teaching a Smaller Student

Knowledge distillation is perhaps the most intuitive concept. You take a huge, powerful "teacher" model and train a smaller "student" model to mimic its behavior. The student learns not just from the raw data, but from the teacher’s predictions and confidence levels.
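
Learning from the teacher's "confidence levels" usually means matching its full output distribution, softened by a temperature. Below is a minimal sketch of that soft-target loss for a single prediction, following the standard formulation (KL divergence between temperature-scaled distributions, multiplied by the temperature squared). The logits are made-up numbers, and in practice this term is typically combined with an ordinary cross-entropy loss on the true labels.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with temperature scaling."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]  # roughly agrees with the teacher
far_student = [0.5, 1.0, 4.0]    # confident about the wrong class

print(distillation_loss(close_student, teacher))
print(distillation_loss(far_student, teacher))
```

A higher temperature flattens both distributions, so the student is also rewarded for matching how the teacher ranks the unlikely answers, not just its top choice.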

This method often preserves task performance better than pruning or quantization alone. For instance, TinyBERT achieved a 7.5x compression ratio while maintaining 96.8% of BERT’s performance on benchmark tests. Microsoft’s Phi-3 family builds on a related idea, training highly capable small models largely on curated and synthetic data.

The catch? Distillation is resource-intensive. You need access to the large teacher model during training, and you need a substantial dataset. Training even a small model is a serious undertaking: TinyLlama, a 1.1B-parameter model that was pretrained from scratch rather than distilled, was planned as a roughly 90-day run on 16 A100-40G GPUs. This makes distillation less suitable for rapid deployment scenarios where you just want to shrink an existing model quickly. It’s a long-term investment strategy rather than a quick fix.

Distillation is also unique because it allows you to transfer knowledge across different architectures. You can distill a transformer-based LLM into a simpler RNN or even a linear model, depending on your constraints. This versatility makes it invaluable for specialized applications where the output format matters more than the internal mechanics.

Choosing the Right Technique

So, which one should you use? It depends on your primary constraint.

- Latency-Sensitive Applications: If you’re building a real-time chat interface or voice assistant, choose quantization. It provides consistent speedups with minimal code changes.

- Memory-Constrained Edge Devices: If you’re deploying on a smartphone or embedded system with strict memory limits, consider pruning combined with quantization. Structured pruning reduces the model size significantly.

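- High-Accuracy Requirements: If you can tolerate very little loss in quality, such as in medical diagnosis or legal analysis, use knowledge distillation. Of the three techniques, it tends to stay closest to the original model’s behavior on the target task, but no compression method is lossless, so validate the student before deployment.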

Hybrid approaches are becoming the norm. Many production systems use quantization for immediate gains and then apply light pruning to remove redundant weights. Apple’s recent work demonstrates that combining training-free compression with careful calibration can achieve 50-60% sparsity and 4-bit precision simultaneously.
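
To see what combining sparsity with low-bit precision buys, here is a rough back-of-the-envelope sketch. It assumes a hypothetical storage layout in which only nonzero weights are stored, plus a one-bit-per-weight mask marking their positions; real sparse formats differ, and actual speedups depend on hardware support.

```python
def effective_bits_per_weight(bits, sparsity, mask_bits=1.0):
    """Average storage per original weight: nonzero payload plus a position mask."""
    return bits * (1.0 - sparsity) + mask_bits


def compression_ratio(baseline_bits, bits, sparsity, mask_bits=1.0):
    """Size reduction relative to a dense model stored at `baseline_bits` per weight."""
    return baseline_bits / effective_bits_per_weight(bits, sparsity, mask_bits)


# 4-bit weights at 50% sparsity, compared with a dense FP16 model.
print(effective_bits_per_weight(4, 0.5))          # 3.0 bits per original weight
print(round(compression_ratio(16, 4, 0.5), 2))    # 5.33
print(compression_ratio(16, 4, 0.5, mask_bits=0)) # 8.0, ignoring index overhead
```

The gap between 8x and about 5.3x shows why index overhead matters: at very low bit widths, bookkeeping for the zeros can cost a meaningful share of what pruning saves.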

Pitfalls and Ethical Considerations

Compression isn't magic. It introduces risks. Professor Yoav Goldberg warns that aggressive compression can increase vulnerability to adversarial attacks. When you simplify a model, you might inadvertently remove the features that make it robust against malicious inputs.

There’s also the issue of bias. Compressed models sometimes amplify biases present in the original data. The FairPrune algorithm addresses this by prioritizing the retention of features crucial for minority groups. As you compress, you must evaluate not just overall accuracy, but fairness across different demographics. The EU AI Act, which entered into force in August 2024 and whose obligations phase in through 2026 and 2027, imposes technical documentation requirements on AI providers, so recording how each compression step changes your model's performance characteristics should be part of your compliance workflow.

Evaluation metrics matter too. Perplexity, the traditional metric for language models, often fails to capture subtle capability losses in compressed models. Frameworks like LLM-KICK and LLMCBench provide more comprehensive evaluations, testing knowledge-intensive tasks and real-world scenarios. Don’t rely solely on benchmark scores; test your compressed model on your actual use cases.
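
A small calculation shows why perplexity can hide capability losses. Perplexity is the exponential of the average negative log-probability per token, so one badly mispredicted token in a hundred barely moves it. The probabilities below are invented for illustration.

```python
import math


def perplexity(token_log_probs):
    """exp of the mean negative natural-log probability per token."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


# Original model: every token predicted with probability 0.5.
baseline = [math.log(0.5)] * 100

# Compressed model: identical, except one critical token (say, a key fact)
# is now assigned probability 0.001.
degraded = [math.log(0.5)] * 99 + [math.log(0.001)]

print(perplexity(baseline))  # about 2.0
print(perplexity(degraded))  # about 2.13
```

A shift from roughly 2.0 to 2.13 looks harmless on a dashboard, yet the compressed model has effectively lost the ability to produce that one token, which is the kind of failure that task-level tests are designed to catch.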

Future Directions

The field is moving toward adaptive compression. Instead of statically compressing a model, future systems will dynamically adjust complexity based on real-time conditions. Microsoft’s Dynamic Sparse Training shows promise here, allowing models to become more efficient only when needed.

Energy efficiency is another major driver. Google’s 2024 study showed a 4.7x reduction in energy consumption for quantized models. As climate concerns grow, the environmental impact of AI will push companies toward lighter models.

Ultimately, model compression democratizes AI. It moves us away from a world where only giants can afford intelligence to one where developers everywhere can build powerful, private, and efficient applications. Whether you’re optimizing for speed, size, or accuracy, there’s a technique that fits your needs. Start with quantization, since it’s the easiest win, and evolve from there.

What is the biggest risk of model compression?

The biggest risk is catastrophic performance degradation. If you compress too aggressively, the model loses its ability to generalize, leading to hallucinations, incorrect reasoning, or increased vulnerability to adversarial attacks. Always validate compressed models on task-specific benchmarks, not just generic perplexity scores.

Can I compress an LLM without retraining?

Yes, through Post-Training Quantization (PTQ). PTQ converts weights to lower precision (like INT8) without any retraining; at most, it needs a small calibration dataset and a modest amount of compute. However, for higher compression ratios or pruning, some form of fine-tuning is usually necessary to recover lost accuracy.

Which compression method is best for mobile devices?

A combination of quantization and structured pruning is typically best for mobile. Quantization reduces memory footprint and speeds up inference, while structured pruning removes redundant parameters. Apple’s Neural Engine and Android’s NNAPI support these operations efficiently.

Does quantization reduce the quality of the AI's responses?

At 8-bit precision (INT8), the quality loss is usually small (typically under 5%). At 4-bit or lower, you may see noticeable drops in complex reasoning tasks unless you use advanced calibration techniques. For most conversational AI applications, 8-bit quantization is a common default for balancing quality and efficiency.

How does knowledge distillation differ from pruning?

Pruning removes parts of the existing model architecture. Knowledge distillation trains a completely new, smaller model to mimic the behavior of a larger "teacher" model. Distillation generally preserves higher accuracy but requires significant training resources and time, whereas pruning is applied directly to the existing model.