Imagine trying to teach a child to read by only showing them medical journals. They might learn a few specific words, but they would struggle to understand a basic conversation. Now, imagine if that child had already spent years reading every book in a massive public library before ever seeing a medical journal. They would pick up the specialized terminology almost instantly because they already understand the structure, grammar, and nuance of language. This is exactly how transfer learning works: it is a machine learning technique in which a model trained on a massive, general dataset applies that knowledge to a new, specific task. It is the secret sauce that turned Natural Language Processing from a niche academic exercise into the powerhouse behind tools like ChatGPT.

The Quick Wins of Transfer Learning

- Slashing Training Time: No need to start from scratch; you build on top of existing "intelligence."

- Data Efficiency: You can get a high-performing model with a few hundred labeled examples instead of millions.

- Hardware Optimization: Only a few companies can afford to pretrain a giant model, but anyone can fine-tune one on a standard GPU.

- Better Generalization: Models understand context and sarcasm better because they've seen a trillion words across different genres.

The Great Shift: From Scratch to Pretraining

For a long time, if you wanted a model to classify sentiment in movie reviews, you trained a model on movie reviews. If you wanted to extract names from legal documents, you trained a new model on legal documents. This was a nightmare for two reasons: it required massive amounts of labeled data (which is expensive to create), and the models were "brittle," meaning their accuracy dropped sharply the moment they saw words or phrasing they hadn't encountered during training.

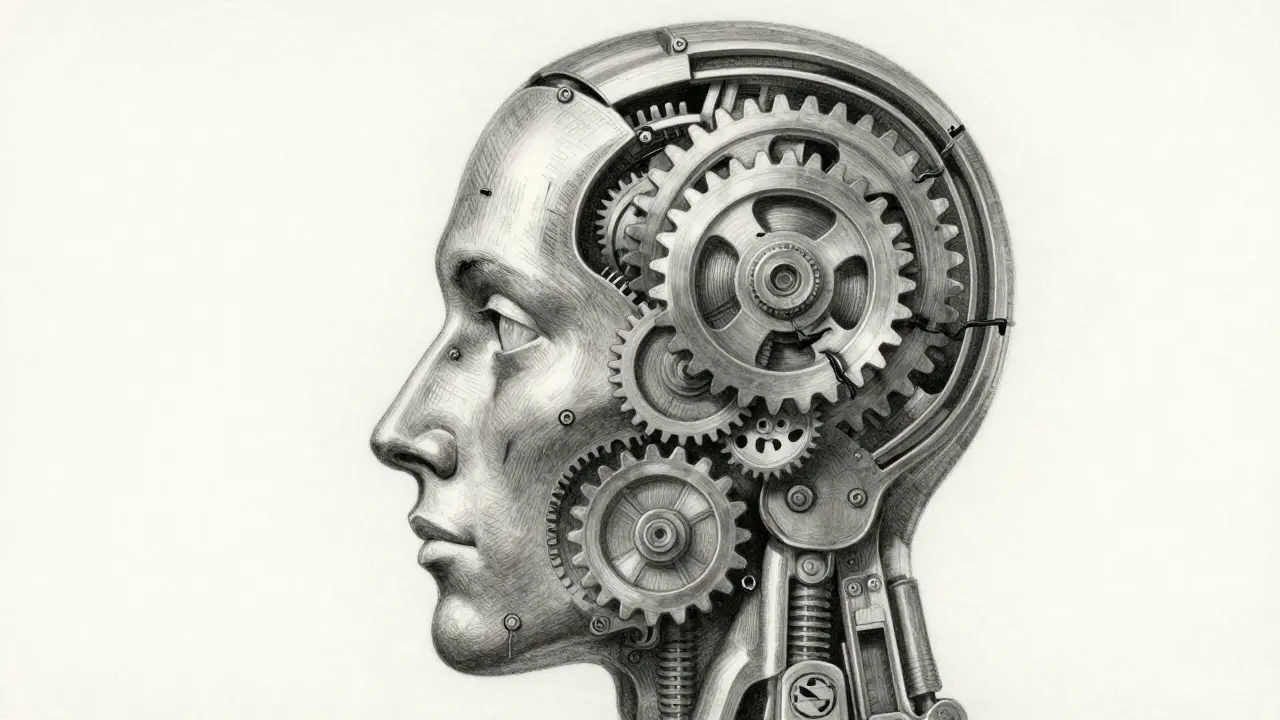

The game changed with the introduction of the Transformer architecture. Instead of processing text one word at a time, as earlier recurrent models did, Transformers use attention mechanisms to look at every word in a sentence simultaneously. This paved the way for BERT (Bidirectional Encoder Representations from Transformers), which fundamentally altered the NLP trajectory. BERT wasn't trained to do one thing; it was trained to understand language itself using Masked Language Modeling (MLM). By hiding words in a sentence and forcing the model to guess them, BERT learned how words relate to each other in a bidirectional way, looking both left and right of a word to grasp its meaning.
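To make the masking idea concrete, here is a minimal sketch of how training pairs for MLM can be built. It is a simplification, not BERT's exact recipe: every selected token is replaced with `[MASK]`, whereas BERT also sometimes swaps in a random token or leaves the token unchanged. The function name `mask_tokens`, the `mask_prob` and `seed` parameters, and the whitespace tokenization are assumptions made for illustration.

```python
import random

MASK_TOKEN = "[MASK]"

def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens.

    Returns (masked_tokens, labels). labels[i] holds the original token
    at masked positions and None everywhere else, so a model would only
    be scored on the positions it has to guess.
    """
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK_TOKEN)
            labels.append(tok)
        else:
            masked.append(tok)
            labels.append(None)
    return masked, labels

sentence = "the cat sat on the mat because it was tired".split()
masked, labels = mask_tokens(sentence, mask_prob=0.3, seed=1)
print(masked)
print(labels)
```

The model sees `masked` as input and is trained to recover the non-`None` entries of `labels`, using the words on both sides of each gap.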

The Two-Step Dance: Pretraining and Fine-Tuning

To understand how this works in practice, think of it as a two-stage process. First comes pretraining. This is the "heavy lifting" phase where a model is fed an astronomical amount of text: think Wikipedia, Common Crawl, and digitized libraries. During this stage, the model learns the basics: how verbs work, the relationship between "apple" and "fruit," and how to structure a coherent paragraph. Before the model can learn from any of this text, the text goes through tokenization (breaking it into chunks) and vectorization (turning those chunks into numbers).
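The tokenization and vectorization steps can be sketched in a few lines. This is a toy version under stated assumptions: it splits on whitespace, while real pretrained models use subword tokenizers, and the integer IDs it produces would normally index into a learned embedding matrix. The helper names (`tokenize`, `build_vocab`, `vectorize`) and the two-sentence corpus are hypothetical.

```python
def tokenize(text):
    """Lowercase and split on whitespace (a stand-in for a subword tokenizer)."""
    return text.lower().split()

def build_vocab(corpus):
    """Assign each distinct token an integer ID; ID 0 is reserved for unknown words."""
    vocab = {"[UNK]": 0}
    for text in corpus:
        for tok in tokenize(text):
            vocab.setdefault(tok, len(vocab))
    return vocab

def vectorize(text, vocab):
    """Turn text into a list of token IDs, mapping unseen words to [UNK]."""
    return [vocab.get(tok, vocab["[UNK]"]) for tok in tokenize(text)]

corpus = ["An apple is a fruit", "A verb describes an action"]
vocab = build_vocab(corpus)
ids = vectorize("an apple is an action", vocab)
print(ids)  # [1, 2, 3, 1, 8]
```

A word that never appeared in the corpus, such as "banana", maps to ID 0 here. Subword tokenizers reduce how often that happens by splitting rare words into smaller known pieces.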

Once the model has this general-purpose understanding, we move to fine-tuning. This is where we take that "educated" model and give it a specific job. If we want the model to detect spam emails, we show it a smaller, labeled dataset of spam and non-spam messages. A common approach is to freeze the majority of the model's layers, keeping the general knowledge intact, and train only the final layer to map that knowledge to a "Spam" or "Not Spam" label. This is why a company doesn't need a supercomputer to build a custom NLP tool; it just needs a pretrained base and a few thousand examples of its own data.
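The freeze-and-train-the-head pattern can be illustrated without any deep learning library. In the sketch below, a fixed lookup table stands in for the frozen pretrained layers, and only a small logistic-regression "final layer" is trained. Everything here is a hypothetical toy: the word vectors in `FROZEN_WEIGHTS`, the four training emails, and the hyperparameters are invented for illustration and do not come from a real model.

```python
import math

# Stand-in for pretrained layers: fixed word vectors that are never updated.
FROZEN_WEIGHTS = {
    "free": [1.0, 0.0], "winner": [1.0, 0.0], "prize": [1.0, 0.0],
    "meeting": [0.0, 1.0], "report": [0.0, 1.0], "lunch": [0.0, 1.0],
}

def encode(text):
    """Frozen feature extractor: sum the fixed vectors of known words."""
    feats = [0.0, 0.0]
    for tok in text.lower().split():
        vec = FROZEN_WEIGHTS.get(tok, [0.0, 0.0])
        feats = [f + v for f, v in zip(feats, vec)]
    return feats

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_head(examples, epochs=200, lr=0.5):
    """Train only the final layer (w, b) with stochastic gradient descent."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in examples:
            x = encode(text)  # features come from the frozen part
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - label   # gradient of the log loss w.r.t. the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b

def predict(text, w, b):
    """Return True when the head classifies the text as spam."""
    x = encode(text)
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) > 0.5

examples = [
    ("free prize winner", 1), ("claim your free prize", 1),
    ("meeting report attached", 0), ("lunch meeting tomorrow", 0),
]
w, b = train_head(examples)
print(predict("you are a winner of a free prize", w, b))  # True
print(predict("report for the lunch meeting", w, b))      # False
```

Only `w` and `b` change during training; `FROZEN_WEIGHTS` is read but never written, which is the essence of freezing. In a real framework the same effect is usually achieved by marking the base model's parameters as non-trainable.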

| Model | Core Innovation | Best Use Case | Key Attribute |
|---|---|---|---|
| BERT | Bidirectional Context | Sentiment Analysis / NER | Masked Language Modeling |
| GPT-3 | Massive Scale (175B params) | Content Generation | Autoregressive Pretraining |
| T5 | Text-to-Text Framework | Translation / Summarization | Unified Task Architecture |
| ALBERT | Parameter Sharing | Mobile/Edge Deployment | Reduced Memory Footprint |

Scaling Up: From BERT to GPT and Beyond

While BERT was a breakthrough, it was an encoder-only model, meaning it was great at understanding text but not designed to write it. Then came the GPT (Generative Pre-trained Transformer) series. OpenAI shifted the focus toward a decoder-only architecture, training the models to predict the next word in a sequence. As they scaled the number of parameters (GPT-3 reached 175 billion), something remarkable happened: zero-shot and few-shot learning. The models became so good at transfer learning that they could perform tasks they were never explicitly fine-tuned for, simply by being given an instruction, or an instruction plus a handful of examples, in a prompt.
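Prompting a model instead of fine-tuning it comes down to how the input text is assembled. The sketch below builds a zero-shot prompt (instruction only) and a few-shot prompt (instruction plus a handful of worked examples). The `build_prompt` helper and the `Review:` / `Sentiment:` layout are assumptions chosen for illustration, not a format required by any particular model.

```python
def build_prompt(instruction, query, examples=()):
    """Assemble a prompt string.

    With no examples this is a zero-shot prompt; with a few
    (text, label) pairs it becomes a few-shot prompt.
    """
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f"Review: {text}", f"Sentiment: {label}", ""]
    # The final line is left open for the model to complete.
    lines += [f"Review: {query}", "Sentiment:"]
    return "\n".join(lines)

instruction = "Classify the sentiment of each movie review as positive or negative."
zero_shot = build_prompt(instruction, "A slow start, but the ending is superb.")
few_shot = build_prompt(
    instruction,
    "A slow start, but the ending is superb.",
    examples=[("I loved every minute.", "positive"),
              ("A complete waste of time.", "negative")],
)
print(zero_shot)
print("-----")
print(few_shot)
```

In both cases no weights are updated. The model's next-word prediction does the work, and the examples in the few-shot version simply give it more context about the expected output.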

Other variations emerged to solve specific problems. For instance, XLNet improved on BERT by using permutation-based training, which allows it to capture bidirectional context without the "masking" problem. Meanwhile, T5 (Text-to-Text Transfer Transformer) treated every NLP task as a text-to-text problem. Whether it was translating English to French or summarizing a long article, the input was text and the output was text. This unified approach made it incredibly versatile for developers who didn't want to change their model architecture for every different task.
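The text-to-text idea is easy to picture: the task is named inside the input string itself, so one model and one interface cover every task. The sketch below uses task prefixes in the style T5 uses; the `TASK_PREFIXES` dictionary keys and the `to_text_to_text` helper are hypothetical names introduced for illustration.

```python
# Task prefixes in the style used by T5; the dictionary keys are illustrative.
TASK_PREFIXES = {
    "translate_en_fr": "translate English to French: ",
    "summarize": "summarize: ",
}

def to_text_to_text(task, text):
    """Turn a (task, input) pair into the single string the model would receive."""
    return TASK_PREFIXES[task] + text

print(to_text_to_text("translate_en_fr", "The library is open."))
print(to_text_to_text("summarize", "Transfer learning reuses a pretrained model for a new task."))
```

Because both the input and the expected output are plain strings, adding a new task means adding a new prefix and new training pairs, not a new output layer.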

Real-World Applications of the Breakthrough

The shift toward transfer learning has moved AI out of the lab and into our pockets. If you've used a chatbot that actually understands your intent rather than just matching keywords, you're seeing transfer learning in action. Virtual assistants now use pre-trained models to handle the complexity of human language and then apply a thin layer of specialization to perform a specific action, like adding an event to your calendar.

In the medical field, researchers use models pretrained on general medical literature and fine-tune them on specific patient records to identify rare diseases. Because labeled medical data is scarce and expensive to produce, starting with a model that already "knows" what a protein or a symptom is makes the process viable. The same applies to legal tech, where models pretrained on general English are fine-tuned on case law to summarize thousands of pages of discovery documents in seconds.

The Trade-offs and Roadblocks

It sounds like a magic bullet, but transfer learning has its pitfalls. The most pressing is data quality. If a model is pretrained on a massive scrape of the internet, it inherits the internet's biases, prejudices, and inaccuracies. When you fine-tune a biased model, those biases don't disappear; they just get repackaged into your specific application. This is why "alignment" and safety tuning have become such massive parts of the LLM lifecycle.

There is also the issue of catastrophic forgetting. This happens when a model is fine-tuned so aggressively on a new task that it "forgets" the general knowledge it learned during pretraining. For example, a model trained to be a hyper-specific legal assistant might lose its ability to communicate in plain, conversational English. Finding the right balance, often by keeping a low learning rate or freezing specific layers, is a constant struggle for machine learning engineers.

What is the difference between pretraining and fine-tuning?

Pretraining is the initial phase where a model learns general language patterns from a massive, unlabeled dataset (like the whole web). Fine-tuning is the second phase, where the model is trained on a much smaller, labeled dataset to perform a specific task, such as classifying emails or summarizing medical reports.

Can I use transfer learning if I don't have a lot of data?

Yes, that is one of the primary benefits. Because the model already understands the structure of language from pretraining, you only need a small amount of task-specific data to "guide" the model toward the correct output, rather than teaching it how to read from scratch.

Why is BERT considered bidirectional?

Unlike older models that read text from left to right or right to left, BERT looks at the entire sequence of words simultaneously. By using a masking technique, it learns to predict a word based on the context provided by both the words that come before it and the words that come after it.
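One way to picture the difference is as an attention mask: a grid in which entry (i, j) is 1 if the word at position i is allowed to look at the word at position j. The sketch below contrasts a bidirectional mask with a left-to-right (causal) one. The function names are hypothetical, and real models apply such masks inside the attention computation instead of printing them.

```python
def bidirectional_mask(n):
    """Every position may attend to every other position (BERT-style)."""
    return [[1] * n for _ in range(n)]

def causal_mask(n):
    """Position i may attend only to positions 0..i (left-to-right models)."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

tokens = ["the", "bank", "was", "steep"]
for name, mask in [("bidirectional", bidirectional_mask(len(tokens))),
                   ("causal", causal_mask(len(tokens)))]:
    print(name)
    for tok, row in zip(tokens, mask):
        print(f"  {tok:>5}: {row}")
```

With the bidirectional mask, "bank" can use "steep" to work out that the sentence is about a riverbank. With the causal mask it can only see "the" and itself.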

What are the computational requirements for fine-tuning?

Fine-tuning is significantly cheaper than pretraining. While pretraining requires thousands of GPUs for weeks, fine-tuning can often be done on a single high-end consumer GPU (like an NVIDIA RTX series) or a small cloud instance in a few hours, depending on the model size.

Does transfer learning always improve model performance?

Usually, yes, but not always. If the pretraining data is too different from the target task (e.g., pretraining on English poetry and fine-tuning on Java code), the model may experience "negative transfer," where the previous knowledge actually hinders the new task.

Next Steps for Implementation

If you're looking to apply this to your own project, start by identifying a pre-trained model that fits your needs. For understanding and classification, BERT or its smaller cousin ALBERT are great choices. For generation or complex reasoning, look toward the GPT or T5 families.

Begin with feature-based transfer learning, where you use the pretrained model as a fixed feature extractor. If the results aren't precise enough, move to full fine-tuning, where you update the weights of the entire model. Just remember to keep the learning rate low and monitor your validation loss closely, since aggressive updates are what cause the overfitting and catastrophic forgetting mentioned earlier. If you hit a wall with hardware, explore Parameter-Efficient Fine-Tuning (PEFT) techniques like LoRA, which allow you to tune a model by only updating a tiny fraction of its parameters.
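To see why LoRA touches so few parameters, consider a single weight matrix. Instead of updating all of its entries, LoRA trains two small low-rank factors whose product is added to the frozen matrix. The arithmetic below is a back-of-the-envelope sketch: the layer size of 4096 and the rank of 8 are hypothetical values chosen for illustration, and the function names are not from any library.

```python
def full_params(d_out, d_in):
    """Entries in one dense weight matrix, all updated in full fine-tuning."""
    return d_out * d_in

def lora_trainable_params(d_out, d_in, rank):
    """Entries in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)

d_out = d_in = 4096  # hypothetical layer size
rank = 8             # hypothetical LoRA rank

full = full_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
print(f"full fine-tuning: {full:,} trainable parameters")   # 16,777,216
print(f"LoRA (rank {rank}):   {lora:,} trainable parameters")  # 65,536
print(f"fraction trained: {lora / full:.2%}")               # 0.39%
```

For this one matrix, the trainable parameter count drops by a factor of 256. The saving applies to every layer LoRA is attached to, which is why it can fit on hardware that full fine-tuning cannot.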