We used to think bigger models meant smarter AI. That era is over. The real breakthrough in Generative AI (systems that create text, code, and images using neural networks) isn't about parameter count anymore; it's about how those parameters are organized. As of May 2026, the industry has shifted from monolithic 'brain-in-a-box' designs to system-level intelligence frameworks. This change allows companies to build AI that is faster, cheaper, and significantly more reliable than its predecessors.

The shift didn't happen overnight. By late 2025, major players like AWS launched specialized architectural lenses at re:Invent, while firms like Zaha Hadid Architects and Foster + Partners fully integrated these new structures into their workflows. The result? A 3.2x increase in inference speed and a 47% drop in energy consumption compared to 2023 standards. If you're building or buying AI solutions today, understanding these architectural shifts is no longer optional; it's the difference between a prototype and a production-ready system.

The Death of the Monolith: Why Structure Matters More Than Size

For years, the strategy was simple: throw more data and compute at a single, massive model. But this approach hit a wall around 500 billion parameters. Beyond that point, costs skyrocketed and performance gains diminished. Enter system-level intelligence, an architectural approach that integrates multiple specialized components to solve complex tasks instead of relying on a single monolithic model. Rather than one giant brain trying to do everything, modern architectures use a team of specialists working together.

This modular approach solves the "brittle handoff" problem that plagued earlier AI systems. When Component A passes data to Component B, errors used to cascade uncontrollably. Today’s hybrid architectures balance modularity for engineering scalability with deep integration for robustness. According to Professor Stuart Russell of UC Berkeley, future AI systems require this specific balance to overcome previous limitations. The value proposition is clear: we can transform existing models into practical intelligence through superior systems engineering, rather than waiting for statistical miracles from larger models.
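One way to read the "brittle handoff" fix is to enforce a contract at every component boundary, so a bad output fails loudly at the handoff instead of cascading downstream. The sketch below assumes a hypothetical `Handoff` payload carrying text and a confidence score, plus two toy "specialist" functions; it illustrates boundary validation in general, not the design of any particular framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Handoff:
    """Payload passed between components (hypothetical schema)."""
    text: str
    confidence: float


class HandoffError(Exception):
    """Raised when a component emits a payload that breaks the contract."""


def validate(payload: Handoff, min_confidence: float = 0.5) -> Handoff:
    # Enforce the contract at the boundary so a bad payload stops here
    # instead of flowing into the next component and compounding.
    if not payload.text.strip():
        raise HandoffError("empty text")
    if not 0.0 <= payload.confidence <= 1.0:
        raise HandoffError(f"confidence out of range: {payload.confidence}")
    if payload.confidence < min_confidence:
        raise HandoffError(f"confidence too low: {payload.confidence}")
    return payload


def run_pipeline(stages: list[Callable[[Handoff], Handoff]], start: Handoff) -> Handoff:
    payload = validate(start)
    for stage in stages:
        payload = validate(stage(payload))  # every handoff is checked
    return payload


# Toy "specialists" standing in for real models (hypothetical).
def retriever(p: Handoff) -> Handoff:
    return Handoff(text=p.text + " [context]", confidence=0.9)


def summarizer(p: Handoff) -> Handoff:
    return Handoff(text=p.text.upper(), confidence=p.confidence * 0.9)


if __name__ == "__main__":
    print(run_pipeline([retriever, summarizer], Handoff("query", 1.0)))
```

The design choice here is to fail fast: a component that returns empty text or an out-of-range score raises `HandoffError` at its own boundary, which makes the faulty stage easy to identify.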

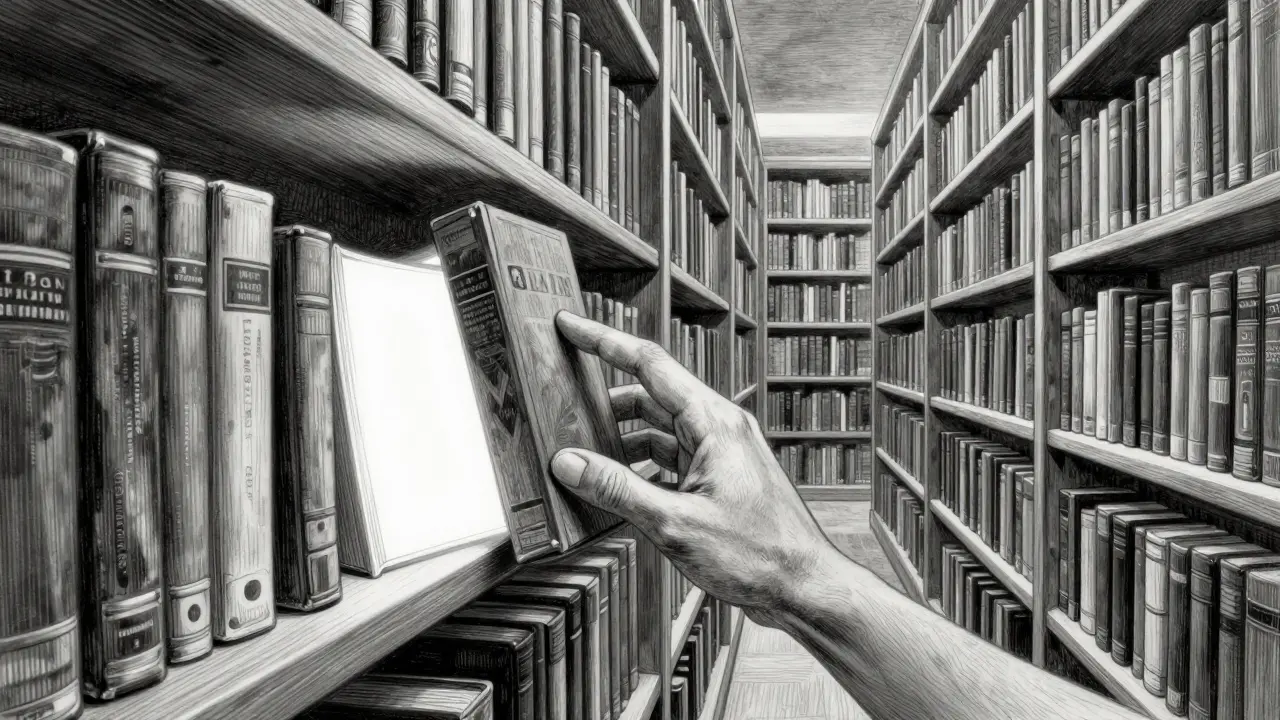

Mixture-of-Experts: Doing More with Less

One of the most impactful innovations is Mixture-of-Experts (MoE), a neural network architecture in which only a subset of parameters is activated for each input token, reducing computational cost. In a traditional dense model, every parameter is used for every token generated. In an MoE architecture, the system activates only 3-5% of parameters per token. Imagine a library where, instead of reading every book to answer a question, you open only the three most relevant ones.
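The routing idea can be sketched with top-k gating, a common MoE routing scheme: score every expert, keep the k best, and run only those. This is a toy under stated assumptions: in a real MoE layer the router is a learned projection of each token's hidden vector and each expert is a feed-forward network, whereas here tokens are scalars, experts are simple functions, and the gate logits are hard-coded. With 8 experts and k = 2 a quarter of the expert parameters run; larger expert pools push the active fraction lower.

```python
import math
from typing import Callable


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Select the k highest-scoring experts and renormalize their gate weights."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x: float, experts: list[Callable[[float], float]],
                gate_logits: list[float], k: int = 2) -> float:
    # Only the k selected experts are evaluated; the others are never called,
    # which is where the per-token compute saving comes from.
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


if __name__ == "__main__":
    # Eight toy experts; expert i scales its input by (i + 1).
    experts = [lambda x, s=s: s * x for s in range(1, 9)]
    logits = [0.1, 2.0, -1.0, 0.5, 1.0, 0.0, -0.3, 0.2]  # would come from a learned router
    print(top_k_route(logits, k=2))  # experts 1 and 4 are selected
    print(moe_forward(1.0, experts, logits, k=2))
```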

This decoupling of model scale from inference costs is revolutionary. You can have a trillion-parameter model but pay for the compute of a much smaller one. AWS documentation notes that MoE architectures demonstrate a 72% cost reduction in inference compared to dense models of equivalent capability. The trade-off? Training and deployment become 15-20% more complex. You need better routing mechanisms to ensure the right "expert" handles the right task. But for enterprises scaling AI workloads, the efficiency gain is too significant to ignore.
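The "trillion-parameter model at the compute of a much smaller one" claim is easy to check with back-of-the-envelope arithmetic. This sketch assumes the common rule of thumb of about 2 FLOPs per parameter used in a forward pass, which is not taken from the sources cited here. A per-token compute ratio is also not the same as end-to-end cost: memory to hold every expert and routing overhead, both ignored below, are part of why real savings (such as the 72% figure above) are smaller than the raw ratio.

```python
def active_params(total_params: float, active_fraction: float) -> float:
    """Parameters actually used per token in a sparse MoE model."""
    return total_params * active_fraction


def forward_flops_per_token(params_used: float) -> float:
    # Rule of thumb: about 2 FLOPs (one multiply, one add) per parameter
    # used in a forward pass. Attention-score and routing overhead are ignored.
    return 2.0 * params_used


def dense_to_moe_compute_ratio(total_params: float, active_fraction: float) -> float:
    dense = forward_flops_per_token(total_params)
    sparse = forward_flops_per_token(active_params(total_params, active_fraction))
    return dense / sparse


if __name__ == "__main__":
    total = 1e12  # a trillion-parameter model, as in the text
    for fraction in (0.03, 0.04, 0.05):
        used = active_params(total, fraction)
        ratio = dense_to_moe_compute_ratio(total, fraction)
        print(f"{fraction:.0%} active -> {used / 1e9:.0f}B params/token, "
              f"~{ratio:.0f}x less forward compute than dense")
```

At 3-5% active, a trillion-parameter MoE touches roughly 30-50 billion parameters per token, about 20-33x less forward compute than a dense model of the same size.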

Verifiable Reasoning: Trusting the Output

AI hallucinations aren't just annoying; they're dangerous in fields like healthcare or legal tech. Early systems relied on emergent Chain-of-Thought behavior, hoping the model would "think aloud" correctly. Modern architectures move beyond hope to verification. Verifiable reasoning architectures are frameworks that add explicit process supervision, inspecting and validating each logical step during generation rather than trusting the reasoning chain as a whole.

Ken Huang’s analysis highlights that these frameworks reduce logical errors by 60-80% on complex tasks. How? By adding a layer of oversight that checks the reasoning process, not just the final output. It’s like having a proofreader who understands logic, not just grammar. This is critical for autonomous systems, such as the call centers and knowledge worker co-pilots described in the AWS Generative AI Lens scenarios. Without verifiable reasoning, automation remains risky. With it, AI becomes a reliable partner.
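The difference between checking the reasoning process and checking only the final output can be shown with a step-level checker. A minimal sketch, assuming reasoning steps arrive as integer arithmetic lines in a hypothetical `a op b = c` format; real process supervisors are often learned models or domain-specific checkers, and this regex-based function only stands in for that role.

```python
import re
from typing import Optional

# Hypothetical step format: "<int> <op> <int> = <int>", with op in + - *.
STEP = re.compile(r"^\s*(-?\d+)\s*([+\-*])\s*(-?\d+)\s*=\s*(-?\d+)\s*$")


def check_step(step: str) -> bool:
    match = STEP.match(step)
    if match is None:
        return False  # a step the checker cannot parse is treated as unverified
    a, op, b, claimed = match.groups()
    x, y = int(a), int(b)
    actual = {"+": x + y, "-": x - y, "*": x * y}[op]
    return actual == int(claimed)


def first_error(steps: list[str]) -> Optional[int]:
    """Index of the first step that fails verification, or None if all pass."""
    for i, step in enumerate(steps):
        if not check_step(step):
            return i
    return None


if __name__ == "__main__":
    good = ["12 * 3 = 36", "36 + 7 = 43"]
    # Ends on 43, the correct value of 12 * 3 + 7, but via a wrong first step.
    lucky = ["12 * 3 = 35", "35 + 8 = 43"]
    print(first_error(good))   # None
    print(first_error(lucky))  # 0
```

An outcome-only check would accept the second chain because its final number is right; the step-level check flags the faulty first step.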

Efficient Attention: Solving the Speed Bottleneck

Attention mechanisms allow AI to focus on relevant parts of a long document. However, traditional attention scales quadratically, O(n^2), so doubling the context length quadruples the compute required. This made processing long sequences prohibitively expensive. New efficient attention mechanisms have reduced this complexity to O(n log n) or better.
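To make the quadratic cost concrete, here is naive single-head scaled dot-product attention, softmax(QK^T / sqrt(d)) V, in plain Python. Every query is scored against every key, so n tokens produce an n × n score matrix. This is the standard dense formulation shown for illustration; it does not implement any of the efficient variants mentioned above.

```python
import math


def attention(q: list[list[float]], k: list[list[float]],
              v: list[list[float]]) -> list[list[float]]:
    """Naive single-head scaled dot-product attention."""
    d = len(q[0])
    out = []
    for qi in q:
        # One score per key for each query: n queries x n keys = n^2 scores.
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted sum of value vectors, one output column at a time.
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out


def score_count(n: int) -> int:
    """Number of query-key scores computed for a sequence of n tokens."""
    return n * n


if __name__ == "__main__":
    for n in (1024, 2048, 4096):
        print(n, score_count(n))  # each doubling of n quadruples the count
```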

This improvement enables real-time processing of massive datasets. State Space Models, like Mamba, demonstrate 2.4x faster inference on long sequences, though they sacrifice about 12% accuracy on complex language tasks compared to standard Transformers. For applications requiring rapid analysis of logs, financial records, or medical histories, this speed boost is transformative. Most production systems still rely on Transformer variants (87% as of Q3 2025), but the trend toward linear attention is accelerating as hardware constraints tighten.
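State space models avoid the n × n score matrix by carrying a fixed-size state through the sequence, so cost grows linearly with length. A minimal sketch of a diagonal linear SSM recurrence, under simplifying assumptions: Mamba makes the SSM parameters input-dependent ("selective") and computes the recurrence with a hardware-aware parallel scan, neither of which is shown here.

```python
def ssm_scan(xs: list[float], a: list[float], b: list[float], c: list[float]) -> list[float]:
    """Diagonal linear state space model run as a sequential scan.

    h_t[i] = a[i] * h_{t-1}[i] + b[i] * x_t
    y_t    = sum_i c[i] * h_t[i]

    Cost is O(n * N) for n tokens and N state dimensions: linear in n.
    """
    h = [0.0] * len(a)  # fixed-size state, independent of sequence length
    ys = []
    for x in xs:
        h = [ai * hi + bi * x for ai, hi, bi in zip(a, h, b)]
        ys.append(sum(ci * hi for ci, hi in zip(c, h)))
    return ys


if __name__ == "__main__":
    # Impulse response of a one-dimensional state that decays by half each step.
    print(ssm_scan([1.0, 0.0, 0.0, 0.0], a=[0.5], b=[1.0], c=[1.0]))
```

Each token updates the state once, so processing twice as many tokens costs twice as much rather than four times as much, at the price of compressing history into a fixed-size state.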

| Architecture Type | Key Benefit | Main Trade-off | Best Use Case |
|---|---|---|---|
| Mixture-of-Experts (MoE) | 72% lower inference cost | 15-20% higher training complexity | Large-scale enterprise deployments |
| Verifiable Reasoning | 60-80% fewer logical errors | Increased latency due to validation steps | Critical decision-making (legal, medical) |
| State Space Models (Mamba) | 2.4x faster long-sequence inference | 12% lower accuracy on complex tasks | Real-time stream processing |
| Hierarchical Reasoning (HRM) | 38% better causal reasoning | Pre-production stage; limited tools | Scientific discovery and planning |

Industry Adoption: From Theory to Practice

The theory is solid, but how does it play out in the real world? Architecture firms are leading the charge in visualizing these benefits. Firms like BIG (Bjarke Ingels Group) report a 40% reduction in conceptual design time using generative AI. However, integration remains a hurdle. Only 32% of firms achieve seamless integration with existing BIM (Building Information Modeling) workflows, according to the Chaos Blog survey from October 2025.

In software development, the pattern is similar. Netflix engineers reported that AI-assisted architecture tools reduced scaling prediction errors by 31%, but it took six months of customization to fit their microservices ecosystem. Amazon developers saw a 27% improvement in database sharding efficiency, though initial false-positive rates required manual validation. These stories highlight a crucial truth: architectural innovation requires cultural and operational adaptation, not just technical upgrades.

Implementation Challenges and Skill Gaps

Adopting these new architectures isn't plug-and-play. The learning curve for system-level AI architecture has dropped, but proficiency still takes 8-12 weeks of focused training. LinkedIn’s November 2025 analysis of over 12,000 job postings shows a demand for a hybrid skill set: traditional software architecture knowledge (100%), AI model understanding (87%), and system integration expertise (76%).

Common challenges include:

- Legacy Integration: 63% of enterprises struggle to connect new AI modules with old systems.

- Complexity Management: 52% find managing multi-component systems overwhelming.

- Consistency: Ensuring modular components behave consistently across different tasks is difficult for 47% of teams.

Solutions often involve gradual component replacement and comprehensive monitoring. Start with predefined patterns, like the eight scenarios in the AWS Generative AI Lens, and expand from there. Don't try to boil the ocean.
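Gradual component replacement with monitoring can start as simply as routing a slice of traffic to the new AI module, falling back to the legacy path on failure, and counting outcomes. A sketch with hypothetical component functions and a made-up `GradualRollout` class; production systems would typically use feature flags and a proper metrics backend rather than an in-memory counter.

```python
import random
from collections import Counter
from typing import Callable


class GradualRollout:
    """Send a fraction of traffic to a new component; fall back to legacy on failure.

    Hypothetical helper for illustration: replace one component at a time and
    record outcomes so the rollout can be widened or reverted based on evidence.
    """

    def __init__(self, legacy: Callable[[str], str], candidate: Callable[[str], str],
                 fraction: float, seed: int = 0) -> None:
        self.legacy = legacy
        self.candidate = candidate
        self.fraction = fraction          # share of requests sent to the new module
        self.rng = random.Random(seed)    # seeded for reproducible routing
        self.metrics = Counter()

    def handle(self, request: str) -> str:
        if self.rng.random() < self.fraction:
            try:
                result = self.candidate(request)
                self.metrics["candidate_ok"] += 1
                return result
            except Exception:
                # Record the failure, then serve the request from the legacy path.
                self.metrics["candidate_error"] += 1
        self.metrics["legacy"] += 1
        return self.legacy(request)


if __name__ == "__main__":
    rollout = GradualRollout(legacy=lambda r: "legacy:" + r,
                             candidate=lambda r: "new:" + r, fraction=0.1)
    for i in range(1000):
        rollout.handle(f"req-{i}")
    print(rollout.metrics)
```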

Future Trajectory: Hybrid and Agentic Systems

Where is this going? Gartner predicts that by 2027, 65% of enterprise AI systems will use hybrid architectures combining multiple specialized components. We’re moving toward systems that orchestrate multiple architectures, creating a loop where verification, training, and inference are unified. Google’s Pathways update, scheduled for Q2 2026, aims to reduce architectural complexity by 40%, while Meta’s open-sourced Modular Reasoning Framework signals a push toward standardized components.

The biggest opportunity lies in agentic architectures. AWS highlights eight scenarios for autonomous systems that analysts predict will drive 45% of new enterprise implementations in 2026. These agents don't just generate content; they plan, reflect, and operate autonomously. The critical question has shifted from "What is the next model breakthrough?" to "How can we design system architectures that make practical AI achievable with existing models?" The answer lies in structure, not just scale.
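The plan, act, reflect cycle that separates agents from one-shot generators can be reduced to a loop. In this toy sketch the "planner" is a hand-written rule, the "tools" are integer functions, and "reflection" is a goal check; in a real agent each would typically be a model call or an external integration. The iteration budget is an added assumption that keeps the loop from running forever.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyAgent:
    """Plan -> act -> reflect loop over a hypothetical integer 'environment'."""
    tools: dict[str, Callable[[int], int]]
    max_iterations: int = 10
    log: list[str] = field(default_factory=list)

    def plan(self, state: int, goal: int) -> str:
        # Stand-in for a model call: choose the next tool from the gap to the goal.
        if goal - state >= 10:
            return "add_ten"
        return "add_one" if state < goal else "subtract_one"

    def reflect(self, state: int, goal: int) -> bool:
        # Stand-in for self-evaluation: is the goal satisfied yet?
        return state == goal

    def run(self, state: int, goal: int) -> int:
        for _ in range(self.max_iterations):
            if self.reflect(state, goal):
                break
            action = self.plan(state, goal)
            state = self.tools[action](state)  # act
            self.log.append(f"{action} -> {state}")
        if not self.reflect(state, goal):
            raise RuntimeError("goal not reached within the iteration budget")
        self.log.append(f"done at {state}")
        return state


TOOLS = {
    "add_ten": lambda s: s + 10,
    "add_one": lambda s: s + 1,
    "subtract_one": lambda s: s - 1,
}

if __name__ == "__main__":
    agent = ToyAgent(tools=TOOLS)
    print(agent.run(0, 23))
    print(agent.log)
```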

What is the main advantage of Mixture-of-Experts (MoE) architecture?

MoE allows a model to have a massive number of parameters (trillions) but activates only a small fraction (3-5%) for each input token. This results in a 72% reduction in inference costs compared to dense models, making large-scale AI economically viable.

How do verifiable reasoning architectures reduce AI errors?

They add explicit process supervision layers that inspect the logical steps taken by the AI during generation. This reduces logical errors by 60-80% on complex tasks, ensuring outputs are not just plausible but logically sound.

Why are monolithic AI models becoming obsolete?

Monolithic models face diminishing returns beyond 500 billion parameters, with skyrocketing costs and brittle performance. System-level architectures offer better scalability, reliability, and efficiency by integrating specialized components rather than relying on a single massive model.

What skills are needed to implement modern AI architectures?

You need a mix of traditional software architecture knowledge, deep understanding of AI models, and system integration expertise. Proficiency typically requires 8-12 weeks of focused training on modular design and component orchestration.

Are State Space Models like Mamba ready for production?

Yes, but with caveats. They offer 2.4x faster inference on long sequences, making them ideal for real-time processing. However, they currently sacrifice about 12% accuracy on complex language understanding tasks compared to standard Transformers, so they are best used in specific, high-speed contexts.