When you hear about AI models like GPT-4 or Llama 3 generating human-like text, you might think it’s all about the math - the layers, the attention mechanisms, the billions of parameters. But here’s the truth: the real magic happens long before training even starts. The quality of the data fed into these models decides whether they’ll be brilliant, biased, or broken. At web scale, this isn’t just about gathering text. It’s about sifting through petabytes of messy, duplicated, illegal, and low-quality content to find the tiny fraction that actually teaches an AI to think.

Where Does All That Data Come From?

Most large language models are trained on data scraped from the public web. The biggest source is Common Crawl, a non-profit archive that has been crawling and storing web pages since 2008 and now holds hundreds of billions of pages. Think of it as the internet’s attic - full of blogs, forums, news articles, product pages, and yes, spam. For a model like GPT-4, this reportedly means processing around 13 trillion tokens of raw text (OpenAI has not published an official figure). That’s more than all the books ever published, multiplied by ten.

But Common Crawl isn’t the only player. Commercial services like Bright Data and Apify are growing fast because they offer curated, ethically sourced datasets. These services filter out paywalled content, remove personal data under GDPR, and even tag sources by domain quality. For companies building specialized models - say, for legal or medical use - these datasets cut weeks off preprocessing time.

Then there’s synthetic data. DeepSeek-R1 is a prominent example of a method where an LLM generates its own training examples - like math problems with step-by-step solutions. It then uses rejection sampling to keep only the ones that pass quality checks. This isn’t just a workaround for scarce data; it’s becoming essential for tasks where real-world examples are rare or too sensitive to use.

The Cleaning Pipeline: A Multi-Stage Filter

Raw web data is garbage. Like, 90%+ garbage. So the cleaning pipeline isn’t one step - it’s a gauntlet. Here’s how it actually works in practice:

- URL-based filtering - Remove obvious junk: forums with 1000+ replies of "lol", adult sites, malware pages. This alone cuts 40-60% of the data.

- Document quality scoring - Use lightweight models to score each text block. Look for things like sentence length, punctuation density, and repetition. If a paragraph repeats the same phrase 5 times? Gone. This removes another 25-35%.

- Deduplication - This is where things get brutal. Duplicate content isn’t just annoying - it leads the model to memorize and overfit to repeated passages (research on repeated data has also linked it to "double descent" in test loss). SimHash with 64-bit fingerprints is a common tool for this. One engineer on Kaggle reported cutting the deduplication time for 50TB of data from 14 days to 9 hours using it. Deduplication at the paragraph level (not whole documents) improved downstream performance by 7.3%, but tripled processing time.

- Safety and toxicity filtering - Remove hate speech, threats, illegal content. But here’s the catch: over-filtering kills nuance. A survey of 127 ML engineers found 68% struggle with false positives - especially in medical and legal texts. One model flagged "abortion" as toxic 22% of the time. The solution? Human-in-the-loop review for edge cases.

- Copyright filtering - This is the slowest, most expensive part. Legal teams demand removal of content from books, journals, and proprietary websites. It consumes 35-40% of pipeline resources but adds barely 1% to performance. Some teams are now testing watermarking detection to avoid reprocessing.
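
To make the deduplication stage above more concrete, here is a minimal sketch of 64-bit SimHash fingerprinting. It is an illustration under assumptions, not any production pipeline’s code: the word 3-gram features, the BLAKE2b hash, and the function names are choices made for this example.

```python
import hashlib
import re

BITS = 64  # fingerprint width, matching the 64-bit fingerprints mentioned above


def simhash(text: str) -> int:
    """Compute a 64-bit SimHash fingerprint from word 3-gram features."""
    tokens = re.findall(r"\w+", text.lower())
    # Word 3-grams are an illustrative feature choice, not a requirement.
    shingles = [" ".join(tokens[i:i + 3]) for i in range(max(1, len(tokens) - 2))]
    weights = [0] * BITS
    for shingle in shingles:
        digest = hashlib.blake2b(shingle.encode("utf-8"), digest_size=8).digest()
        h = int.from_bytes(digest, "big")
        # Each feature votes +1 or -1 on every bit position.
        for bit in range(BITS):
            weights[bit] += 1 if (h >> bit) & 1 else -1
    fingerprint = 0
    for bit in range(BITS):
        if weights[bit] > 0:
            fingerprint |= 1 << bit
    return fingerprint


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


doc = "Common Crawl stores billions of web pages collected from the public web."
same = simhash(doc)
again = simhash(doc)
```

Two documents are treated as near-duplicates when the Hamming distance between their fingerprints falls below a small threshold. The right threshold depends on your data, so tune it on a sample rather than assuming a value.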

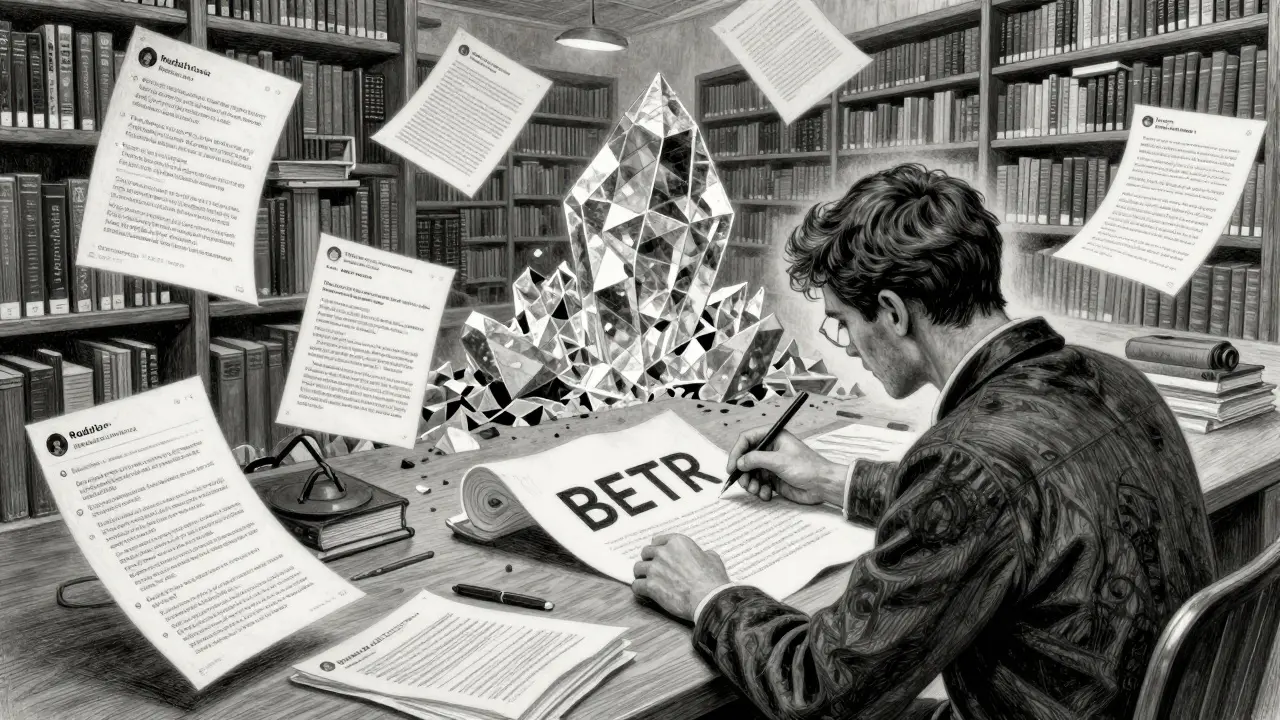

Why Quality Matters More Than Quantity

In 2020, everyone thought bigger data = better models. Then Apple dropped BETR (Benchmark-Targeted Ranking) in November 2024. Their research showed something shocking: pretraining on carefully selected data improved performance by 2.1x compared to raw web data. Not because it was bigger - because it was smarter.

BETR doesn’t just filter out bad content. It actively selects documents that resemble the kinds of questions used in benchmark tests. If your model needs to answer science questions, you feed it more scientific papers, not more Reddit threads. The result? A model that learns faster, needs less compute, and performs better on real tasks.

Dr. Percy Liang from Stanford put it bluntly: "The quality of pretraining data has become the primary bottleneck for LLM advancement, surpassing architecture innovations in importance." Even Meta AI’s September 2024 findings showed diminishing returns past 30-40% data retention for models over 70B parameters. More data doesn’t help if it’s noisy. In fact, it hurts.

The Hidden Costs: Time, Money, and Legal Risk

Building a web-scale pipeline isn’t just technical - it’s a logistical nightmare. Most teams spend 3-6 months just setting up the data pipeline before training begins. Here’s what that looks like:

- You need 50-100 dedicated servers to crawl billions of pages without getting blocked.

- Distributed systems like Apache Spark and Flink are mandatory. You can’t process petabytes on a laptop.

- Language detection has to cover 100+ languages. One misclassified Spanish article can pollute your English training set.

- GDPR requests alone consume 15% of pipeline resources. If someone asks to be forgotten, you have to scrub their data from every copy of your corpus - even if it’s already been trained on.

The Future: Smarter, Not Bigger

The industry is shifting. The era of "throw everything at the wall" is over. Here’s where things are headed:

- Targeted pretraining - Instead of training on the whole web, models will be trained on datasets built for specific tasks: legal reasoning, medical diagnosis, code generation. Gartner predicts 80% of enterprise models will use this by 2027.

- Synthetic data dominance - By 2026, 65% of enterprise LLMs will use synthetic data, up from 25% in 2024. It’s not a backup - it’s becoming the primary source for high-stakes domains.

- Privacy-aware collection - Princeton’s "Min-K% Prob" method shows you can estimate whether a given text was part of a model’s pretraining data, which includes private data the model may have memorized. That means future data pipelines will need built-in privacy checks, not just after-the-fact scrubbing.

- Data-centric AI - McKinsey found that 57% of organizations now spend more on data prep than model development. That’s a flip from 2022, when most poured money into bigger neural nets.
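
The scoring idea behind Min-K% Prob, mentioned in the list above, is simple enough to sketch: average the log-probabilities of the k% least likely tokens in a text. The sketch below assumes you already have per-token log-probabilities from the model you are probing. The function name, the default k, and the numbers are made up for illustration.

```python
import math
from typing import Sequence


def min_k_percent_prob(token_logprobs: Sequence[float], k: float = 0.2) -> float:
    """Average log-probability of the k fraction of least likely tokens."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    n = max(1, math.ceil(len(token_logprobs) * k))
    lowest = sorted(token_logprobs)[:n]  # most negative values first
    return sum(lowest) / n


# Made-up log-probabilities: the first text contains two surprising tokens,
# the second has none, which is what a memorized text tends to look like.
unseen_like = [-0.1, -0.2, -5.0, -0.3, -4.0, -0.1, -0.2, -0.1, -0.3, -0.2]
seen_like = [-0.1] * 10

unseen_score = min_k_percent_prob(unseen_like)
seen_score = min_k_percent_prob(seen_like)
```

A higher (less negative) score suggests the text is more likely to have been in the training data. Any decision threshold has to be calibrated on texts whose status you already know.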

What You Can Learn From This

If you’re building or using an LLM, here’s the bottom line:

- Don’t assume more data = better results. Quality beats quantity every time.

- Deduplication isn’t optional. Use simhash or similar fingerprinting - it’s the single biggest performance booster.

- Filtering is a science. Test your toxicity and copyright filters on real examples. False positives are more damaging than false negatives.

- Start small. Build a 10GB pipeline first. Learn how your filters behave before scaling to 10TB.

- Track your retention rate. If you’re keeping more than 30% of raw data, you’re probably not filtering enough.
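
Testing a filter on real examples, as suggested in the list above, can start as a few lines of code. This sketch measures false positive and false negative rates for a toy keyword filter on a tiny hand-labeled set. The blocklist, the filter, and the examples are all hypothetical stand-ins for a real classifier and a real evaluation set.

```python
from typing import Callable, List, Tuple

# Hypothetical blocklist; a real pipeline would use a trained classifier.
BLOCKLIST = {"kill", "attack"}


def keyword_filter(text: str) -> bool:
    """Return True if the text would be removed as toxic."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    return bool(words & BLOCKLIST)


def error_rates(
    flag: Callable[[str], bool], examples: List[Tuple[str, bool]]
) -> Tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate) on labeled examples."""
    fp = sum(1 for text, toxic in examples if not toxic and flag(text))
    fn = sum(1 for text, toxic in examples if toxic and not flag(text))
    negatives = sum(1 for _, toxic in examples if not toxic)
    positives = len(examples) - negatives
    fpr = fp / negatives if negatives else 0.0
    fnr = fn / positives if positives else 0.0
    return fpr, fnr


# Each example is (text, is_actually_toxic), labeled by hand.
EXAMPLES = [
    ("Antibiotics kill bacteria by disrupting the cell wall.", False),
    ("A heart attack requires immediate medical care.", False),
    ("The weather is pleasant today.", False),
    ("The court dismissed the appeal.", False),
    ("I will attack you after school.", True),
    ("You are worthless and everyone hates you.", True),
]

fpr, fnr = error_rates(keyword_filter, EXAMPLES)
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
```

The medical sentences are exactly the kind of text a naive filter throws away, which is why the evaluation set should include examples from the domains you care about.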

How much data is needed to train a large language model?

State-of-the-art models like GPT-4 are reportedly trained on approximately 13 trillion tokens. This comes from raw web data totaling hundreds of terabytes - but after cleaning, only about 10-25% remains usable. For smaller, domain-specific models, 50-200TB of raw data is typical, with final training sets around 10-50TB after filtering.

What’s the biggest challenge in cleaning web data for LLMs?

The biggest challenge is balancing thorough filtering with preserving useful content. Toxicity filters often mislabel medical or legal text as harmful. Copyright filters remove valuable sources like academic papers. Deduplication at scale is computationally expensive. And with GDPR and the EU AI Act, legal compliance adds another layer of complexity that can consume up to 40% of pipeline resources.

Can synthetic data replace real web data for LLM training?

For general language understanding, no - real web data still provides essential diversity. But for specialized tasks like math reasoning, code generation, or legal analysis, synthetic data is not just a supplement - it’s becoming the primary source. Techniques like DeepSeek-R1’s rejection sampling generate high-quality, verified examples that outperform scraped data in targeted benchmarks.
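
The generate-then-filter loop behind rejection sampling can be sketched in a few lines. This is a toy illustration, not DeepSeek-R1’s actual pipeline: `generate_candidate` is a hypothetical stand-in for an LLM call, and the check is a simple arithmetic verifier.

```python
import random
from typing import Dict, List


def generate_candidate(rng: random.Random) -> Dict[str, int]:
    """Hypothetical stand-in for an LLM: proposes a sum that is sometimes wrong."""
    a, b = rng.randint(1, 99), rng.randint(1, 99)
    error = rng.choice([0, 0, 0, 1, -1])  # simulate occasional mistakes
    return {"a": a, "b": b, "answer": a + b + error}


def passes_check(candidate: Dict[str, int]) -> bool:
    """Verifier: accept only candidates whose answer is actually correct."""
    return candidate["a"] + candidate["b"] == candidate["answer"]


def rejection_sample(
    n_wanted: int, seed: int = 0, max_tries: int = 10_000
) -> List[Dict[str, int]]:
    """Keep generating until n_wanted candidates pass the check or tries run out."""
    rng = random.Random(seed)
    accepted: List[Dict[str, int]] = []
    for _ in range(max_tries):
        if len(accepted) >= n_wanted:
            break
        candidate = generate_candidate(rng)
        if passes_check(candidate):
            accepted.append(candidate)
    return accepted


samples = rejection_sample(20)
```

The approach works best where correctness can be checked automatically, as with math answers or code that must pass tests. That is part of why synthetic data is strongest in those domains.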

Why does deduplication improve model performance?

Duplicate content leads the model to overfit to repeated patterns instead of learning general rules, and research on repeated data has linked it to "double descent" behavior in test loss. If the same paragraph appears 1000 times, the model learns to regurgitate it rather than understand context. Deduplication - especially at the paragraph level - forces the model to generalize, improving performance on unseen tasks by up to 7.3% according to Dolma dataset research.
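
Paragraph-level deduplication can be sketched as exact matching on normalized paragraphs. This is a minimal version under stated assumptions: paragraphs are separated by blank lines, and normalization only lowercases and collapses whitespace. Catching near-duplicates would need fingerprinting on top of this.

```python
import hashlib
from typing import Iterable, List, Set


def normalize(paragraph: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return " ".join(paragraph.lower().split())


def dedupe_paragraphs(documents: Iterable[str]) -> List[str]:
    """Drop any paragraph already seen anywhere earlier in the corpus."""
    seen: Set[str] = set()
    cleaned: List[str] = []
    for doc in documents:
        kept = []
        for para in doc.split("\n\n"):
            norm = normalize(para)
            if not norm:
                continue
            key = hashlib.sha1(norm.encode("utf-8")).hexdigest()
            if key in seen:
                continue
            seen.add(key)
            kept.append(para.strip())
        if kept:  # documents with nothing left are dropped entirely
            cleaned.append("\n\n".join(kept))
    return cleaned


docs = [
    "Intro text.\n\nSubscribe to our newsletter!",
    "Another article.\n\nsubscribe to our  newsletter!",
    "Intro text.",
]
result = dedupe_paragraphs(docs)
```

Here the repeated newsletter footer survives only once, and the third document disappears because its only paragraph was already seen. Holding every hash in one in-memory set is fine for a test run, but a web-scale corpus needs a distributed or disk-backed equivalent.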

Is it worth filtering for copyright?

It’s not about performance - it’s about risk. Copyright filtering adds little to model quality but protects against lawsuits. With legal actions already underway, companies that skip this step risk having to reprocess entire datasets. For enterprise use, it’s a necessary cost, not an optional step.

Next Steps If You’re Starting Out

If you’re building your first LLM pipeline:

- Start with Common Crawl’s public datasets - they’re free and well-documented.

- Use a simple fingerprinting implementation for deduplication - either a small SimHash of your own or a library like datasketch, which provides MinHash with LSH.

- Build a 1GB test pipeline first. Measure how much you lose at each filter stage.

- Don’t try to filter everything. Focus on toxicity and duplicates first.

- Track your retention rate. If you’re keeping over 30%, you’re not filtering enough.
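
The advice above to measure how much you lose at each filter stage can be sketched as a tiny pipeline that records the retention rate after every step. The stage names, thresholds, and sample documents are illustrative assumptions, not recommended settings.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[List[str]], List[str]]]


def drop_short(docs: List[str]) -> List[str]:
    """Remove documents with fewer than 5 words (assumed threshold)."""
    return [d for d in docs if len(d.split()) >= 5]


def drop_repetitive(docs: List[str]) -> List[str]:
    """Keep documents where at least half of the words are distinct."""
    kept = []
    for d in docs:
        words = d.lower().split()
        if words and len(set(words)) / len(words) >= 0.5:
            kept.append(d)
    return kept


def drop_exact_duplicates(docs: List[str]) -> List[str]:
    """Keep the first copy of each document after whitespace/case normalization."""
    seen, kept = set(), []
    for d in docs:
        key = " ".join(d.lower().split())
        if key not in seen:
            seen.add(key)
            kept.append(d)
    return kept


def run_pipeline(docs: List[str], stages: List[Stage]):
    """Apply stages in order; report (name, docs_left, share_of_original)."""
    total = len(docs)
    report = []
    for name, stage in stages:
        docs = stage(docs)
        report.append((name, len(docs), len(docs) / total if total else 0.0))
    return docs, report


RAW = [
    "Web crawls contain a wide mix of useful and useless text.",
    "lol",
    "buy now buy now buy now buy now",
    "Web crawls contain a wide mix of useful and useless text.",
    "Deduplication removes repeated passages before training begins.",
    "click here",
    "Filters should be tested on real examples first.",
    "great great great great great post",
]
STAGES = [
    ("min_length", drop_short),
    ("repetition", drop_repetitive),
    ("exact_dedup", drop_exact_duplicates),
]

final_docs, report = run_pipeline(RAW, STAGES)
for name, count, share in report:
    print(f"{name}: {count} docs left ({share:.1%} of raw)")
```

Logging retention per stage shows which filter is doing most of the cutting, and that filter is usually the first place to look when the final dataset seems too small or too noisy.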