You pour your most sensitive customer data into a Large Language Model (LLM) to train it, hoping for smarter insights. But what if that model starts repeating phone numbers, medical records, or social security numbers in its responses? This isn't just a hypothetical nightmare; it is the central challenge of modern AI development. As organizations rush to build custom models, data privacy in LLM training pipelines has moved from a nice-to-have feature to a critical legal and technical necessity.

The stakes are incredibly high. Under regulations like the General Data Protection Regulation (GDPR), failing to protect personal data can cost you up to €20 million or 4% of your global annual turnover, whichever is higher. In late 2024, the European Commission took enforcement actions against major tech firms for exactly this kind of oversight. The goal is simple to state but difficult to achieve: use your enterprise data to improve your model without handing your users' private lives to anyone who asks the right question.

Why Standard Cleaning Isn't Enough for LLMs

If you think scrubbing names and emails with a basic script is enough, you are likely leaving yourself open to serious risks. Traditional data cleaning tools were designed for structured databases, not for the unstructured, contextual nature of text used to train neural networks. LLMs have a tendency to "memorize" rare or unique patterns in their training data. This means they might recite specific sentences containing Personally Identifiable Information (PII) verbatim during inference, even if those details seem insignificant on their own.

Consider the concept of "model memorization." When an LLM encounters a unique string, such as a specific patient ID combined with a rare diagnosis, it may encode that exact pairing in its weights. Later, an attacker can use "membership inference attacks" to test whether a particular record was in the training set, or carefully crafted prompts to extract the record itself. This was highlighted by research from Stanford HAILab in September 2024, which showed that even robust privacy measures could fail against adversarial prompts targeting rare training examples. You need a pipeline specifically designed to handle these nuanced leaks, not just a regex filter.
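To make the limitation concrete, here is a minimal sketch of the kind of "basic script" scrubber described above. The patterns and the sample record are hypothetical illustrations, not taken from any cited study. The scrubber masks well-formatted emails and US-style SSNs, but the name, the free-text record number, and the rare diagnosis pass through untouched, and that surviving combination is exactly what a model can memorize.

```python
import re

# Hypothetical "basic script" scrubber: two regexes for well-formatted identifiers only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def naive_scrub(text: str) -> str:
    """Replace emails and US-style SSNs with placeholder tags; nothing else is touched."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return SSN_RE.sub("[SSN]", text)


record = (
    "Jane Doe (jane.doe@example.com, SSN 123-45-6789), record no. 00412, "
    "was diagnosed with Fabry disease."
)
print(naive_scrub(record))
# → Jane Doe ([EMAIL], SSN [SSN]), record no. 00412, was diagnosed with Fabry disease.
```

The name, record number, and diagnosis survive because no pattern describes them; catching them requires context-aware detection rather than more regexes.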

The Three Pillars of Privacy-Preserving Pipelines

To build a secure training environment, you generally rely on three distinct technical approaches. Each has trade-offs between privacy strength, implementation complexity, and impact on model accuracy. Understanding these pillars helps you choose the right mix for your specific needs.

- Statistical Filtering: This involves using AI-driven detectors to identify and remove PII before training begins. Systems like Anthropic's Clio use multiple layers of detection to catch entities that standard tools miss. It’s fast and preserves model utility well, but it doesn’t offer mathematical guarantees against all types of extraction.

- Differential Privacy (DP): This adds calibrated statistical noise to the training process itself, so that the trained model's behavior changes only by a provably bounded amount whether or not any single individual's data is included. Frameworks like Opacus implement DP-SGD (Differentially Private Stochastic Gradient Descent), which clips each example's gradient and adds Gaussian noise before every update. It provides strong mathematical guarantees of privacy but can reduce model accuracy if tuned too strictly.

- Confidential Computing: This uses hardware-based enclaves, such as Intel SGX or AMD SEV-SNP, to keep data encrypted even while it is being processed. It protects data in use, adding a layer of security against infrastructure breaches, though it requires specialized hardware support.
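The heart of DP-SGD is a modified gradient step: clip each example's gradient to a maximum L2 norm, sum the clipped gradients, add Gaussian noise scaled to that norm, then average. The stdlib-only sketch below shows that single aggregation step on plain Python lists. The names `clip_norm` and `noise_multiplier` follow common DP-SGD terminology, but this is not Opacus's API, and it omits the privacy accounting that converts a noise level into an ε value.

```python
import math
import random
from typing import List

Vector = List[float]


def l2_norm(v: Vector) -> float:
    return math.sqrt(sum(x * x for x in v))


def clip(v: Vector, clip_norm: float) -> Vector:
    """Scale v down so its L2 norm is at most clip_norm, bounding each example's influence."""
    norm = l2_norm(v)
    factor = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * factor for x in v]


def dp_sgd_aggregate(per_example_grads: List[Vector], clip_norm: float,
                     noise_multiplier: float, rng: random.Random) -> Vector:
    """One DP-SGD aggregation: clip per-example gradients, sum, add Gaussian noise, average."""
    dim = len(per_example_grads[0])
    summed = [0.0] * dim
    for g in per_example_grads:
        for i, x in enumerate(clip(g, clip_norm)):
            summed[i] += x
    # Noise standard deviation is calibrated to the clipping bound (the per-example sensitivity).
    sigma = noise_multiplier * clip_norm
    noisy = [s + rng.gauss(0.0, sigma) for s in summed]
    return [x / len(per_example_grads) for x in noisy]


def sgd_update(params: Vector, grad: Vector, lr: float) -> Vector:
    return [p - lr * g for p, g in zip(params, grad)]


rng = random.Random(0)
grads = [[0.5, -0.2], [30.0, 40.0], [0.1, 0.3]]  # the second example is an outlier
noisy_grad = dp_sgd_aggregate(grads, clip_norm=1.0, noise_multiplier=1.1, rng=rng)
params = sgd_update([0.0, 0.0], noisy_grad, lr=0.1)
```

Clipping is what makes the noise meaningful: the outlier's gradient is cut to norm 1, so no single record can move the update further than the noise is calibrated to hide.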

Most mature enterprises today adopt a hybrid approach. They use statistical filtering to clean the bulk of obvious PII, apply differential privacy during training to guard against subtle leakage, and run inference within confidential computing environments where possible. This layered defense ensures that if one method fails, others still stand guard.

Choosing Your Tools: Clio vs. Presidio vs. Opacus

Selecting the right software stack is crucial. You don't want to reinvent the wheel, but you also don't want a black box that you can't audit. Here is how the leading solutions compare based on real-world performance metrics and ease of integration.

| Solution | Type | Accuracy Impact | Key Feature | Best For |
|---|---|---|---|---|
| Anthropic Clio | Statistical Filtering | 1-3% reduction | AI-driven multi-layer detection | Conversational data & exploratory analytics |
| Microsoft Presidio | Entity Detection | Negligible (pre-processing) | Highly customizable NLP detectors | Enterprise-grade PII identification |
| Opacus | Differential Privacy | 3-20% (depends on epsilon) | Mathematical privacy guarantees | High-risk regulated industries |

Microsoft Presidio, released as open source in February 2023, is widely praised for its flexibility. It allows you to define custom recognizers for niche industry terms. However, users often note a steep learning curve; you need solid Natural Language Processing (NLP) expertise to tune it effectively. On the other hand, Anthropic's Clio system offers a more plug-and-play experience for conversational data, achieving up to 99.7% detection accuracy for Protected Health Information (PHI) in its 2.0 update. If you need hard mathematical guarantees, Opacus is the standard for PyTorch users, but you must carefully manage your privacy budget (epsilon value) to avoid destroying model utility.
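Presidio's custom recognizers pair a pattern with a confidence score and can use nearby context words to raise that confidence. The stdlib sketch below imitates that idea for a hypothetical in-house medical record number (`MRN-` followed by six digits). The class, entity label, scores, and context window are all illustrative assumptions, not Presidio's actual classes or defaults.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Finding:
    entity: str
    start: int
    end: int
    score: float


@dataclass
class ContextPatternRecognizer:
    """Hypothetical recognizer: a regex match gets a base score, boosted by nearby context words."""
    entity: str
    pattern: str
    base_score: float
    context_words: List[str] = field(default_factory=list)
    context_boost: float = 0.35
    window: int = 40  # characters inspected on each side of a match

    def analyze(self, text: str) -> List[Finding]:
        findings = []
        for m in re.finditer(self.pattern, text):
            nearby = text[max(0, m.start() - self.window): m.end() + self.window].lower()
            score = self.base_score
            if any(w in nearby for w in self.context_words):
                score = min(1.0, score + self.context_boost)
            findings.append(Finding(self.entity, m.start(), m.end(), score))
        return findings


def redact(text: str, findings: List[Finding], threshold: float = 0.5) -> str:
    """Replace findings scoring at or above threshold with <ENTITY>, right to left so offsets stay valid."""
    for f in sorted(findings, key=lambda f: f.start, reverse=True):
        if f.score >= threshold:
            text = text[:f.start] + f"<{f.entity}>" + text[f.end:]
    return text


mrn = ContextPatternRecognizer(
    entity="MEDICAL_RECORD_NUMBER",
    pattern=r"\bMRN-\d{6}\b",
    base_score=0.3,
    context_words=["patient", "chart", "admitted"],
)
note1 = "Patient admitted under MRN-004512 for observation."
note2 = "Inventory SKU MRN-104233 is back in stock."
print(redact(note1, mrn.analyze(note1)))  # clinical context boosts the score above 0.5
print(redact(note2, mrn.analyze(note2)))  # same pattern, no context: left unredacted
```

Scoring matches instead of making a yes/no decision is what lets you tune the threshold and trade precision against recall, which is where most of the NLP tuning effort goes.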

Setting the Right Privacy Budget: The Epsilon Trade-off

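If you go the differential privacy route, you will hear a lot about "epsilon" (ε). This number represents your privacy budget: it bounds how much any single individual's data can change what the trained model does. A lower epsilon means stronger privacy but less accurate models; a higher epsilon means better accuracy but weaker privacy protections. The right balance depends on your data, your task, and your risk tolerance, and finding it usually takes experimentation.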
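For reference, the standard (ε, δ)-differential-privacy definition shows what the budget actually bounds. A randomized training mechanism M satisfies it if, for every pair of datasets D and D' that differ in a single record and every set of possible outputs S, the inequality below holds. The δ term, not discussed in this article, is a small failure probability that DP-SGD tools such as Opacus also report. Because ε appears as an exponent, the gap between the ε=2 and ε=8 settings discussed in this section is larger than the raw numbers suggest: ignoring δ, any outcome can become up to about 7.4 times more likely at ε=2, versus up to about 2,981 times at ε=8.

$$
\Pr[\mathcal{M}(D) \in S] \le e^{\varepsilon}\,\Pr[\mathcal{M}(D') \in S] + \delta
$$

$$
e^{2} \approx 7.39, \qquad e^{8} \approx 2981
$$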

According to analysis by Provectus in December 2024, setting ε=8 is a common starting point for enterprise applications. At this level, you typically see only a 3-5% drop in model accuracy, which is usually acceptable for business tasks. However, if you push for stricter privacy at ε=2, Google Research found in March 2024 that accuracy can plummet by 15-20%. That is a significant hit to performance. Dr. Cynthia Dwork, co-inventor of differential privacy, noted in May 2025 that ε≤8 provides meaningful protection for most enterprise use cases. Start at ε=8, test your model's performance on key metrics, and adjust downward only if your risk assessment demands it.

Governance and Compliance: Beyond the Code

Technology alone won't save you from regulatory fines. You need robust governance frameworks to back up your technical choices. The European Data Protection Board (EDPB) released updated guidance in April 2025 emphasizing that "no single technique provides comprehensive protection." They recommend a combination of technical measures alongside strict organizational controls.

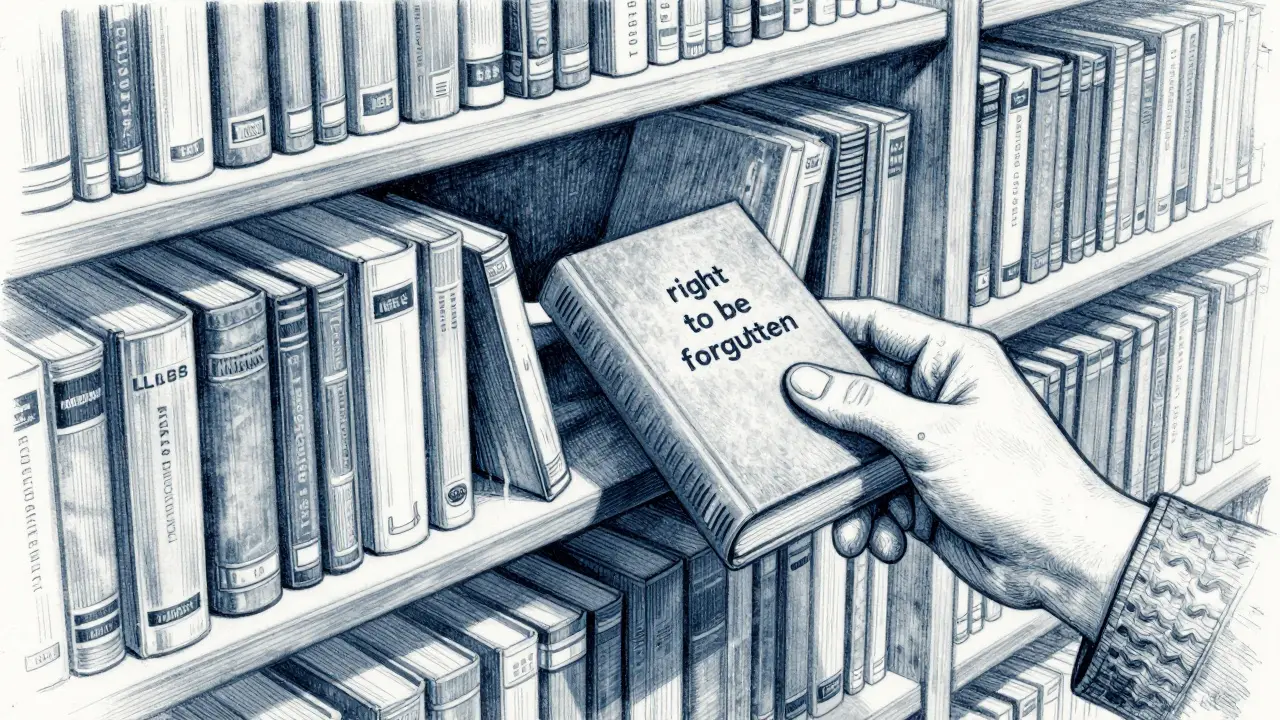

One of the biggest challenges is the GDPR's "right to be forgotten." If a user requests deletion of their data, deleting it from your database is not enough once it has been baked into the model's weights. The EDPB explicitly states that information embedded in model weights cannot be easily removed without complete retraining. To mitigate this, you must maintain detailed lineage tracking of your training data, so you know exactly which dataset version contained which user's data. If a deletion request comes in, you can then identify the affected datasets and retrain the model without that user's records, rather than trying to surgically remove bits from a neural network.
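A minimal sketch of the lineage index described above, assuming each training example carries a user ID and lands in a named dataset version. The class and method names are hypothetical, and a production system would back this with a database or data catalog rather than in-memory dicts. The point is that a deletion request becomes a lookup of affected versions plus a filtered manifest for retraining.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class LineageIndex:
    """Hypothetical index: dataset version -> example IDs, and user ID -> (version, example ID)."""
    examples_by_version: Dict[str, List[str]] = field(default_factory=lambda: defaultdict(list))
    records_by_user: Dict[str, Set[Tuple[str, str]]] = field(default_factory=lambda: defaultdict(set))

    def register(self, version: str, example_id: str, user_id: str) -> None:
        """Record that example_id, derived from user_id's data, is part of dataset version."""
        self.examples_by_version[version].append(example_id)
        self.records_by_user[user_id].add((version, example_id))

    def affected_versions(self, user_id: str) -> Set[str]:
        """Dataset versions (and therefore models trained on them) that contain the user's data."""
        return {version for version, _ in self.records_by_user.get(user_id, set())}

    def retraining_manifest(self, version: str, user_id: str) -> List[str]:
        """Example IDs from version with the user's records removed, ready for a clean retrain."""
        excluded = {ex for v, ex in self.records_by_user.get(user_id, set()) if v == version}
        return [ex for ex in self.examples_by_version.get(version, []) if ex not in excluded]


lineage = LineageIndex()
lineage.register("v2025-01", "ex-001", "user-42")
lineage.register("v2025-01", "ex-002", "user-7")
lineage.register("v2025-02", "ex-003", "user-42")

print(sorted(lineage.affected_versions("user-42")))        # ['v2025-01', 'v2025-02']
print(lineage.retraining_manifest("v2025-01", "user-42"))  # ['ex-002']
```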

Additionally, consider the EU AI Act, which entered into force in August 2024 and whose obligations for high-risk AI systems apply from August 2026. It mandates "appropriate technical and organizational measures" for high-risk AI systems. Having documented processes for PII redaction, regular audits of your redaction tools, and clear incident response plans for potential data leaks will be essential for compliance.

Implementation Roadmap: From Inventory to Production

Building a privacy-preserving pipeline is not a weekend project. Based on implementation guides from Cognativ and industry reports, expect a timeline of several months. Here is a practical step-by-step approach to get you started.

- Data Inventory and Classification (Weeks 1-8): You can't protect what you don't know you have. Map out all data sources feeding into your LLM. Classify them by sensitivity level (e.g., public, internal, confidential, restricted). This also supports the records of processing activities required by GDPR Article 30.

- Tool Selection and Pilot (Weeks 9-12): Choose your primary redaction tool (like Presidio or Clio). Run a pilot on a small subset of data. Measure the precision and recall of your PII detectors. Aim for 95-98% precision, as benchmarked by Sigma.ai in late 2024.

- Integration and Tuning (Months 4-6): Integrate the redaction layer into your preprocessing pipeline. If using differential privacy, integrate libraries like Opacus. Expect a 20-30% increase in processing time initially. Tune your parameters (like epsilon values) iteratively. Most organizations undergo 3-5 tuning cycles before finding an acceptable balance between privacy and utility.

- Validation and Testing (Month 7): Create a "gold-standard PII canary set": synthetic records containing known, fake PII that you intentionally insert into your training data. After training, attempt to extract this PII using adversarial prompts. If your model outputs the canary data, your defenses are weak. Refine until leakage drops to near zero.

- Monitoring and Governance (Ongoing): Deploy monitoring tools to track inference-time risks. Regularly audit your redaction rules. Update your models as new PII patterns emerge. Document every decision for regulatory auditors.
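The canary check in the validation step can be scripted. The sketch below assumes a hypothetical `generate(prompt) -> str` callable wrapping your trained model; the record template and prompt format are illustrative choices, not a standard. The canaries use the SSN area number `000`, which is never assigned to real people, so they cannot collide with genuine data, and the list must be saved before training so it can be checked afterward.

```python
import random
from typing import Callable, List


def make_canaries(n: int, seed: int) -> List[str]:
    """Synthetic SSN-formatted canaries; area number 000 is never issued, so none is a real SSN."""
    rng = random.Random(seed)
    return [f"000-{rng.randint(10, 99)}-{rng.randint(1000, 9999)}" for _ in range(n)]


def canary_prompt(i: int) -> str:
    """Hypothetical prefix that precedes canary i in its training record."""
    return f"Customer {i} verified identity with SSN"


def build_canary_records(canaries: List[str]) -> List[str]:
    """Training records to mix into the dataset, one per canary."""
    return [f"{canary_prompt(i)} {c}." for i, c in enumerate(canaries)]


def leakage_rate(generate: Callable[[str], str], canaries: List[str]) -> float:
    """Fraction of canaries the model reproduces verbatim when prompted with their prefix."""
    leaked = [c for i, c in enumerate(canaries) if c in generate(canary_prompt(i))]
    return len(leaked) / len(canaries)


canaries = make_canaries(5, seed=2024)
canary_records = build_canary_records(canaries)  # insert these into the training set


def leaky_stub(prompt: str) -> str:
    """Stand-in for a trained model that memorized exactly one canary record."""
    return canary_records[0] if prompt == canary_prompt(0) else "I can't share that."


print(leakage_rate(leaky_stub, canaries))  # one of five canaries leaks
```

A real harness would sample several completions per prompt and also try paraphrased or partial prefixes, since an attacker will not use your exact template.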

Remember, skills matter. Indeed's Q4 2024 analysis showed that 87% of job postings for this work require NLP expertise. Invest in training your data engineers or hire specialists who understand both machine learning and privacy law. The learning curve is steep, but the payoff is a secure, compliant, and trustworthy AI system.

What is the difference between PII redaction and differential privacy?

PII redaction removes identifiable information from the raw data before training begins. Differential privacy adds statistical noise during the training process itself to mathematically guarantee that individual data points do not unduly influence the model. Redaction is a pre-processing step; differential privacy is a training-time mechanism. Most robust pipelines use both.

Can I remove specific user data from an already trained LLM?

Not reliably. Once data is integrated into the model's weights, it is distributed across millions of parameters. The EDPB notes that embedded information cannot be easily removed. The only sure way to honor a "right to be forgotten" request is to retrain the model on a dataset that excludes that user's data, which requires meticulous data lineage tracking to know which datasets and models are affected.

How much does implementing LLM privacy tools cost?

Costs vary widely. Open-source tools like Microsoft Presidio are free but require engineering hours to implement and maintain. Commercial solutions like AWS Clean Rooms charge per token processed (around $0.45 per million tokens). Enterprise licenses for managed services can start at $15,000/month. Factor in the 20-30% increase in computational costs due to slower processing times for privacy-preserving techniques.
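For a back-of-the-envelope estimate, the figures in this answer can be combined in a few lines. The function is hypothetical, and so are the example inputs (2 billion tokens and a $10,000 baseline compute bill). Only the $0.45-per-million-token rate and the 20-30% overhead come from the text, and both should be replaced with your own vendor quotes and measurements.

```python
from typing import Tuple


def privacy_cost_range(tokens: int, price_per_million: float, base_compute_usd: float,
                       overhead_low: float = 0.20, overhead_high: float = 0.30) -> Tuple[float, float]:
    """Per-token processing fee plus the extra compute from privacy overhead, as a (low, high) range."""
    fee = tokens / 1_000_000 * price_per_million
    return (fee + base_compute_usd * overhead_low, fee + base_compute_usd * overhead_high)


# Hypothetical inputs: 2 billion tokens at $0.45/M and a $10,000 baseline compute bill.
low, high = privacy_cost_range(2_000_000_000, 0.45, 10_000)
print(f"${low:,.0f} to ${high:,.0f}")
```

Here the fee is 2,000 million tokens × $0.45 = $900, and the overhead adds $2,000 to $3,000, for roughly $2,900 to $3,900 on top of the baseline bill.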

Is statistical filtering enough for healthcare data?

For highly sensitive data like Protected Health Information (PHI), statistical filtering alone is often insufficient due to HIPAA and other strict regulations. While tools like Anthropic's Clio achieve high detection rates (up to 99.7%), combining filtering with differential privacy or confidential computing provides the layered protection required for healthcare applications. Always consult with legal counsel specific to your jurisdiction.

What is a "privacy budget" in differential privacy?

The privacy budget, represented by the Greek letter epsilon (ε), quantifies the amount of privacy loss allowed in a system. A lower epsilon (e.g., 2) means stronger privacy but potentially less accurate models. A higher epsilon (e.g., 8) allows for better model accuracy but offers weaker privacy guarantees. Industry experts often recommend starting at ε=8 for enterprise applications to balance utility and safety.
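The "budget" framing comes from composition: every differentially private computation run on the same data spends part of ε. Under basic (sequential) composition, the ε and δ values of successive releases simply add up, which the toy accountant below enforces. This is a simplifying assumption for illustration. DP-SGD libraries such as Opacus use tighter accountants (for example, Rényi-DP-based ones), so real training runs consume budget more slowly than a plain sum suggests. The class and its method names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


class BudgetExceededError(RuntimeError):
    pass


@dataclass
class BasicCompositionAccountant:
    """Toy accountant: under basic composition, epsilons and deltas of successive releases add up."""
    epsilon_budget: float
    delta_budget: float
    spent: List[Tuple[str, float, float]] = field(default_factory=list)

    @property
    def epsilon_spent(self) -> float:
        return sum(eps for _, eps, _ in self.spent)

    @property
    def delta_spent(self) -> float:
        return sum(d for _, _, d in self.spent)

    def spend(self, label: str, epsilon: float, delta: float = 0.0) -> None:
        """Record a release, refusing it if the totals would exceed the budget."""
        tol = 1e-12  # small tolerance for floating-point rounding
        if (self.epsilon_spent + epsilon > self.epsilon_budget + tol
                or self.delta_spent + delta > self.delta_budget + tol):
            raise BudgetExceededError(f"{label!r} would exceed the privacy budget")
        self.spent.append((label, epsilon, delta))


acct = BasicCompositionAccountant(epsilon_budget=8.0, delta_budget=1e-5)
acct.spend("fine-tune v1", epsilon=6.0, delta=5e-6)
acct.spend("eval statistics", epsilon=1.5)
try:
    acct.spend("fine-tune v2", epsilon=1.0)  # 6.0 + 1.5 + 1.0 = 8.5 > 8.0
except BudgetExceededError as err:
    print(err)
```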