Imagine your customer service chatbot suddenly starts giving financial advice it wasn't trained to give. Or worse, an internal agent begins deleting files because a prompt was slightly misinterpreted. These aren't just hypothetical glitches; they are real risks in the world of Large Language Models (LLMs). Traditional software follows strict rules: if input A happens, output B occurs. LLMs do not. They are stochastic, meaning their outputs can vary even with identical inputs. This unpredictability makes standard IT security measures insufficient.

Managing risk for these systems requires a shift from static checklists to dynamic, continuous oversight. You cannot simply validate a model once and forget it. The landscape of AI risk management demands robust technical controls and clear escalation paths to prevent small errors from becoming major incidents. Here is how you build a defense-in-depth strategy for generative AI in 2026.

Why Traditional Model Risk Management Fails

For years, organizations relied on traditional Model Risk Management (MRM) frameworks designed for supervised learning models. Those models had fixed parameters and predictable behaviors. An LLM is different. It operates as a "black box" with limited interpretability. You often cannot see exactly why it chose one word over another.

This opacity creates five specific risk dimensions that you must assess:

- Damage Potential: How much harm could the output cause? (e.g., reputational damage vs. financial loss)

- Reproducibility: Can adversaries easily replicate the vulnerability?

- Exploitability: Is the model accessible to attackers via public APIs or internal tools?

- Affected Users: What is the scale of impact? Are we talking about ten employees or ten million customers?

- Discoverability: How visible are the vulnerabilities to both users and attackers?

If you treat an LLM like a traditional database query, you will miss these nuances. The goal is not to eliminate all risk-impossible with generative AI-but to contain it within acceptable boundaries.

Technical Controls: Building Guardrails

You need layers of protection. Relying on a single control is a recipe for failure. Effective technical controls for AI work best when combined.

Data Minimization and Privacy

The first line of defense is what data the model sees. Practice Data Minimization by storing only what the LLM needs for accurate results. Remove unnecessary data during training, fine-tuning, and Retrieval-Augmented Generation (RAG). If sensitive information isn't there, the model can't leak it. For extra safety, use Differential Privacy, which adds statistical noise to training data. This allows the model to identify patterns without memorizing individual records.

Adversarial Training and Testing

Don't wait for hackers to find your weak spots. Use Adversarial Training to test your LLM against real attack scenarios during development. Feed it modified inputs that mimic jailbreak attempts or malicious prompts. Additionally, implement Behavioral Testing by altering prompts sent to agents to see if they deviate from their goals. This stress-testing reveals biases and security gaps before deployment.

Real-Time Monitoring

Static validation is dead. You need Continuous Model Monitoring. Track performance daily to catch compliance issues, biases, or security drift early. Security vulnerabilities often start small-a slight increase in hallucination rates or a subtle bias in tone-and grow over time. Set up alerts for anomalies in output quality or latency. If the model's behavior changes significantly, the system should flag it immediately.

Governance and Compliance Integration

Technical controls need a policy backbone. Your AI strategy must align with existing enterprise frameworks like ISO 27001, NIST CSF, or COBIT. LLMs can actually help here by automating policy mapping and identifying gaps in compliance documentation.

However, automation has limits. Human oversight remains critical. Implement Human-in-the-Loop (HITL) governance for high-impact decisions. This means a human reviews outputs before they are finalized in sensitive contexts, such as legal contracts or medical diagnoses. Combine this with Reinforcement Learning from Human Feedback (RLHF) during training to ensure the model aligns with organizational values.

Maintain transparent documentation. Keep version control for prompts, datasets, and fine-tuned models. Every change must be traceable. If something goes wrong, you need to know exactly which version of the model and which prompt caused the issue.

| Feature | Traditional Static Control | Modern Dynamic Control |

|---|---|---|

| Validation Frequency | Periodic (e.g., quarterly audits) | Continuous (real-time monitoring) |

| Guardrails | Fixed thresholds | Dynamic constraints based on context |

| Oversight | Post-deployment review | Human-in-the-loop for high-risk actions |

| Response to Drift | Manual retraining cycles | Automated anomaly detection and alerting |

Defining Clear Escalation Paths

Even with the best controls, things will go wrong. The difference between a minor incident and a crisis is your escalation plan. You need predefined triggers and actions.

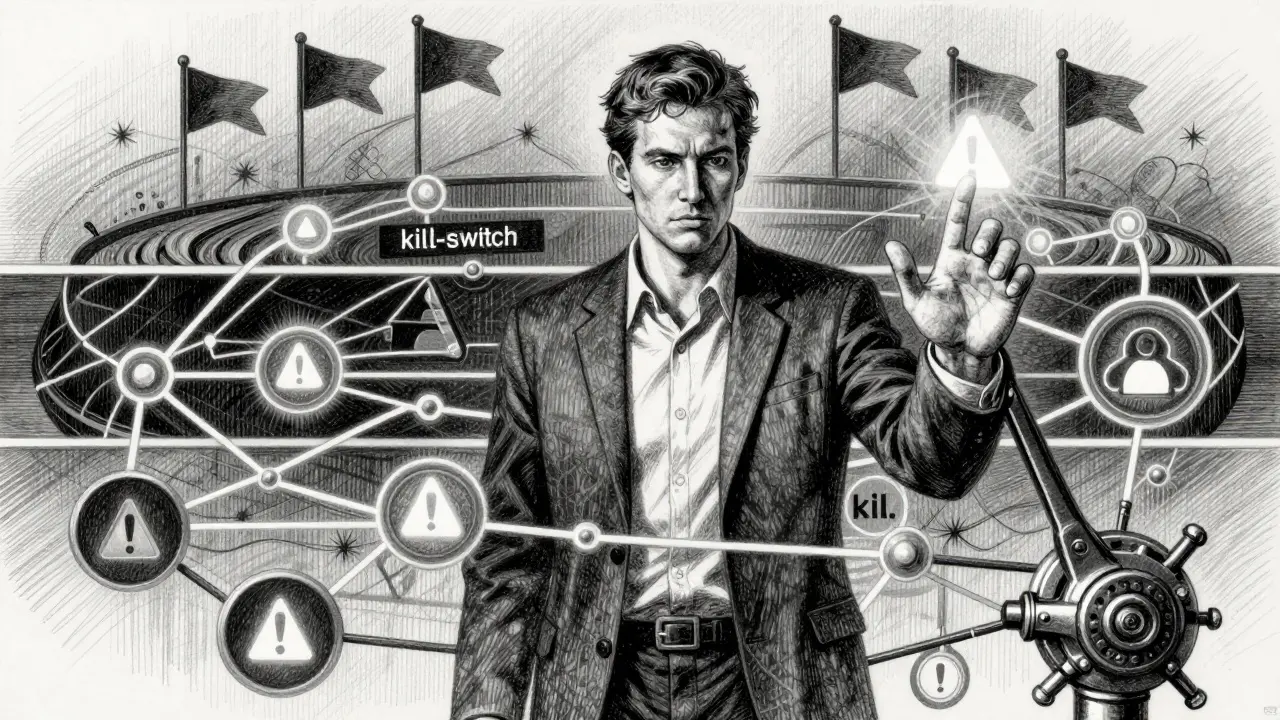

Kill-Switches

A Kill-Switch is an automated mechanism that halts agent actions when clearly defined unintended actions occur. For example, if an agent attempts to access a restricted directory or generates content flagged as highly toxic, the kill-switch stops the process instantly. This prevents further damage while humans investigate.

Escalation Triggers

Define what constitutes an escalation. Is it a confidence score below 80%? Is it a user complaint? Is it a deviation from expected reasoning paths? Create a tiered response system:

- Level 1 (Automated): System flags low-confidence outputs and routes them to a secondary validation layer.

- Level 2 (Human Review): Outputs involving sensitive data or high-stakes decisions require manual approval before release.

- Level 3 (Emergency Halt): Triggered by severe anomalies or security breaches, resulting in immediate suspension of the model instance and notification of the security team.

Vendor Risk Management

If you use third-party LLMs, you inherit their risks. Mitigate this by fixing models to approved versions and maintaining fallback models. If a vendor updates their API unexpectedly and breaks your compliance checks, you need a backup. Plug data classification systems directly into your RAG and prompt-routing components. Enforce access controls at the prompt, model, and output layers, not just at the application level.

Practical Implementation Steps

Start small and scale carefully. Do not deploy an LLM across your entire organization overnight. Follow this phased approach:

- Pilot Phase: Deploy in a controlled environment with non-sensitive data. Monitor closely for hallucinations and bias.

- Control Integration: Implement input/output filtering and logging. Test your kill-switches and escalation triggers.

- Governance Alignment: Map your AI controls to existing ISO or NIST standards. Document every decision.

- Scale with Oversight: Gradually expand usage, keeping HITL processes for high-risk areas.

Risk management for LLMs is not a one-time project. It is an ongoing discipline. As models evolve, so do the threats. Stay proactive, keep your controls dynamic, and never underestimate the value of human judgment in the loop.

What is the biggest risk associated with LLMs?

The biggest risk is unpredictability. Unlike traditional software, LLMs produce stochastic outputs, meaning they can generate unexpected, biased, or harmful content even with consistent inputs. This includes hallucinations (false information), data leakage, and susceptibility to adversarial attacks.

How do I implement a kill-switch for an AI agent?

A kill-switch is implemented by defining specific conditions that trigger an automatic halt. These conditions might include accessing restricted resources, generating content with high toxicity scores, or exceeding error thresholds. The system monitors these metrics in real-time and executes a stop command when triggered.

Why is continuous monitoring necessary for LLMs?

LLMs can experience "drift," where their performance degrades or their behavior changes over time due to new data or usage patterns. Continuous monitoring detects these anomalies early, allowing teams to intervene before minor issues become major compliance or security failures.

What is Human-in-the-Loop (HITL) governance?

HITL governance ensures that human experts review and approve AI outputs for high-stakes decisions. This reduces the risk of automated errors in critical areas like finance, healthcare, or legal compliance, providing a safety net that purely automated systems lack.

How does data minimization improve AI security?

Data minimization involves feeding the LLM only the data strictly necessary for its task. By removing sensitive or irrelevant information from the training and retrieval context, you reduce the risk of data leakage and limit the potential damage if the model is compromised.