You spend weeks curating high-quality data and tuning hyperparameters to make your large language model excel at a specific task. The results look great on paper. Then you deploy it, and suddenly the model starts generating toxic content or falling for simple jailbreak prompts. This isn't just bad luck. It is a well-documented phenomenon: fine-tuning can erode a model's safety alignment, a cost sometimes grouped under the label "alignment tax." Standard fine-tuning often strips away the safety guardrails that researchers spent months building into the aligned base model.

The core problem is mathematical. When you update a model's weights to improve performance on a new task, those updates can conflict with the weights responsible for ethical behavior. In one reported result, benign fine-tuning nearly quadrupled a model's attack success rate (ASR), which is the fraction of adversarial prompts that elicit a harmful response. ASR rose from 11.6% to 44.1%. Models fine-tuned with specialized safety-preserving techniques kept their ASR below 2%. If you are deploying an AI assistant in healthcare, finance, or customer service, this difference is not just a metric. It is a liability risk.
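ASR is a simple ratio over a judged set of adversarial prompts. Below is a minimal sketch. It assumes each response has already been labeled as harmful compliance or refusal by a human or classifier judge. The function name is hypothetical.

```python
def attack_success_rate(complied: list[bool]) -> float:
    """Fraction of adversarial prompts that produced a harmful completion.

    complied[i] is True when the judge marked the response to adversarial
    prompt i as harmful compliance rather than a refusal.
    """
    if not complied:
        raise ValueError("need at least one judged prompt")
    return sum(complied) / len(complied)


before, after = 0.116, 0.441  # the ASR figures quoted above
print(f"relative increase: {after / before:.2f}x")  # prints: relative increase: 3.80x
```

A ratio of about 3.8 is what "nearly quadruple" refers to.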

Why Standard Fine-Tuning Breaks Safety

To fix the problem, you first need to understand why standard methods fail. Most developers use Supervised Fine-Tuning (SFT) or Reinforcement Learning from Human Feedback (RLHF) to adapt models. In their standard form, these methods apply gradient-based optimization across all parameters. The issue is that safety alignment is not evenly distributed throughout the neural network. It is fragile and localized.

When you train a model on a new dataset, the optimizer adjusts weights to minimize loss for that specific task. Often, these adjustments drag the model out of its "safety basin," the region in parameter space where the model behaves responsibly. Traditional alignment techniques often produce only superficial safety properties. They teach the model to refuse harmful requests based on surface-level patterns rather than deeper understanding. Once you tweak the underlying parameters for a new job, those patterns break.

This creates a tension between capability and safety. You want the model to be helpful and accurate, but you also need it to remain harmless. Without intervention, improving one usually degrades the other. Understanding this trade-off is the first step toward building robust systems.

Gradient Surgery: The SafeGrad Approach

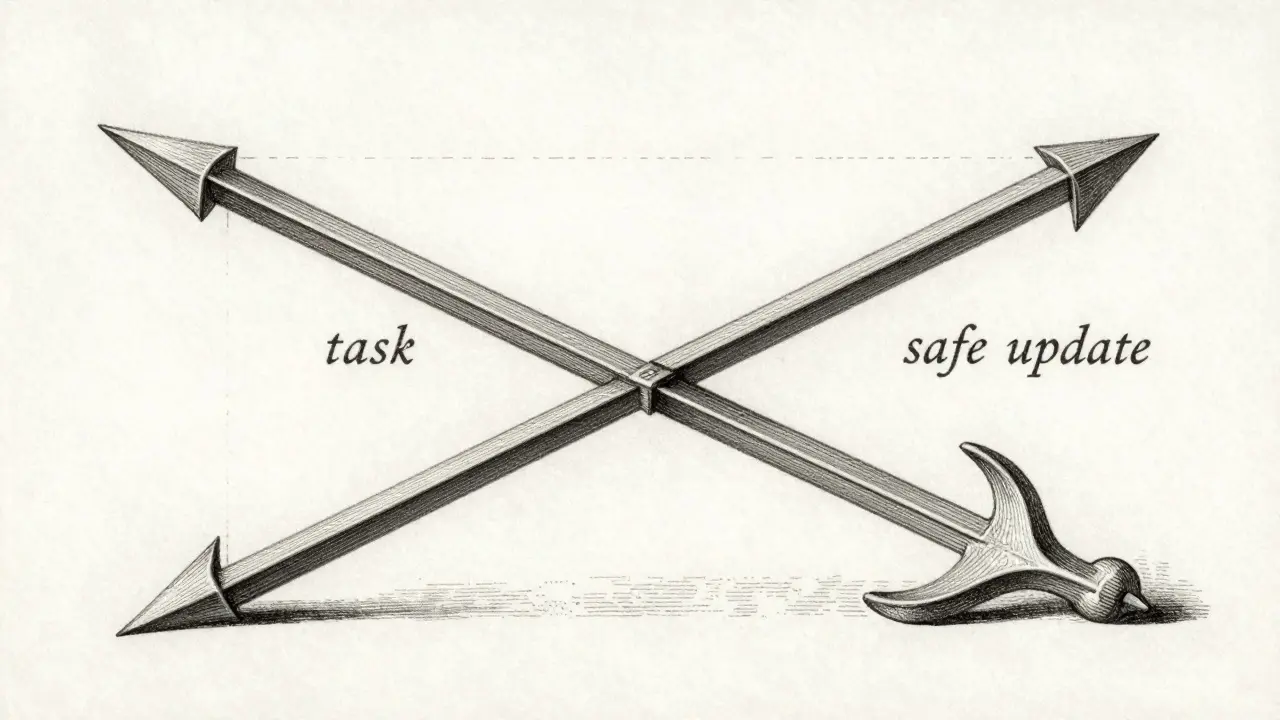

One of the most effective ways to preserve safety is gradient surgery, specifically a method known as SafeGrad. This technique operates directly on the gradients (the direction and magnitude of weight updates) during training.

Imagine two vectors: one representing the ideal update for your task (g_task) and another representing the direction that preserves safety (g_safety). In many cases, these vectors partly oppose each other. SafeGrad computes their dot product. If they conflict (a negative dot product), the algorithm projects the task gradient onto the plane orthogonal to the safety gradient. In effect, it removes the component of the update that harms safety and keeps the component that improves performance.

The formula looks like this:

g_safe = g_task - (g_task · g_safety / ||g_safety||²) * g_safety

In practice, reported results suggest you keep 85-90% of the task performance improvement while retaining 92-95% of the original safety alignment. It is a surgical approach that keeps the model from drifting into unsafe territory without sacrificing much utility. For teams with limited computational resources, SafeGrad offers a high return on investment. It requires no changes to the model architecture, only an extra gradient computation on safety data at each step.
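The projection itself takes only a few lines. This is a minimal sketch, not the SafeGrad authors' implementation. It has the following assumptions:

- Gradients are plain lists of floats standing in for flattened parameter gradients.
- g_safety comes from a batch of safety data, such as refusal examples.
- `safegrad_project` is a hypothetical name.

The conflict test and the projection give the same result whether you pass loss gradients or their negatives (update directions), as long as both vectors use the same convention. The same projection appears in PCGrad for multi-task learning.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def safegrad_project(g_task, g_safety, eps=1e-12):
    """Remove the component of g_task that opposes g_safety.

    If the dot product is negative (conflict), g_task is projected onto the
    plane orthogonal to g_safety. Otherwise it is returned unchanged.
    """
    d = dot(g_task, g_safety)
    norm_sq = dot(g_safety, g_safety)
    if d >= 0 or norm_sq < eps:
        # No conflict, or no usable safety signal: keep the task gradient as-is.
        return list(g_task)
    scale = d / norm_sq
    return [gt - scale * gs for gt, gs in zip(g_task, g_safety)]


g_task, g_safety = [-6.0, -4.0], [0.0, 2.0]
g_safe = safegrad_project(g_task, g_safety)
print(g_safe)                   # [-6.0, 0.0]
print(dot(g_safe, g_safety))    # 0.0: no remaining component along g_safety
```

In this example, the second coordinate of the task gradient is the one that conflicts with safety, so the projection zeroes it out. The first coordinate passes through untouched.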

Layer Freezing and Selective Fine-Tuning

Not all layers in a transformer model contribute equally to safety. Research suggests that safety alignment concentrates heavily in the middle layers of the network. For a 40-layer model, layers 15 through 25 are often cited as holding critical safety information. Early layers handle low-level lexical and syntactic features of the input tokens, while late layers focus on output generation and style.

By freezing these middle layers during fine-tuning, you protect the core ethical reasoning mechanisms of the model. You then fine-tune only the early and late layers. Alternatively, you can attach small trainable adapter modules, such as LoRA (Low-Rank Adaptation), to those layers and leave the base weights frozen. This strategy exploits the fact that task-specific knowledge often resides in different parts of the network than general safety principles.
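LoRA keeps the base weights frozen by learning a low-rank correction on top of them. The sketch below is dependency-free and uses the standard LoRA parameterization, y = W x + (alpha / r) B A x. B is initialized to zero, so the adapter starts as a no-op. The class name and defaults are illustrative. In practice you would use a library such as Hugging Face PEFT rather than hand-rolled matrices.

```python
import random


class LoRALinear:
    """y = W x + (alpha / r) * B (A x); W is frozen, only A and B would be trained."""

    def __init__(self, W, r=2, alpha=4.0, seed=0):
        self.W = [row[:] for row in W]  # frozen base weights: never updated
        d_out, d_in = len(W), len(W[0])
        rng = random.Random(seed)
        # A starts small and random; B starts at zero, so the adapter adds nothing at init.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scale = alpha / r

    @staticmethod
    def _matvec(M, x):
        return [sum(m * xi for m, xi in zip(row, x)) for row in M]

    def forward(self, x):
        base = self._matvec(self.W, x)
        delta = self._matvec(self.B, self._matvec(self.A, x))
        return [b + self.scale * d for b, d in zip(base, delta)]


layer = LoRALinear([[1.0, 2.0], [3.0, 4.0]])
print(layer.forward([1.0, 1.0]))  # [3.0, 7.0]: identical to the frozen base layer at init
```

Training updates only `A` and `B`, so the original `W`, including any safety behavior encoded in it, stays bit-for-bit unchanged. The adapter's effect can be removed by discarding `A` and `B`.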

Implementing layer freezing involves three steps:

- Identify critical safety layers through ablation studies or by using pre-mapped indices from open-source research.

- Freeze these layers by setting the `requires_grad` attribute of their parameters to `False` in PyTorch, or the equivalent in other frameworks.

- Fine-tune the remaining layers with normal optimization settings.
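The freezing step can be sketched as a small helper. This is a minimal sketch with two assumptions:

- Parameter names of transformer block i contain `.layers.<i>.`, as in many Hugging Face decoder models (e.g. `model.layers.17.mlp.down_proj.weight`). Other architectures name blocks differently.
- The helper names `layer_index` and `freeze_layer_range` are hypothetical.

The helper works on any iterable of `(name, param)` pairs, such as PyTorch's `model.named_parameters()`.

```python
import re

# Assumed naming scheme: parameters of block i contain ".layers.<i>."
LAYER_RE = re.compile(r"\.layers\.(\d+)\.")


def layer_index(param_name):
    """Return the transformer block index encoded in a parameter name, or None."""
    m = LAYER_RE.search(param_name)
    return int(m.group(1)) if m else None


def freeze_layer_range(named_params, first, last):
    """Set requires_grad = False on every parameter in blocks first..last (inclusive).

    Returns the names of the parameters that were frozen.
    """
    frozen = []
    for name, param in named_params:
        idx = layer_index(name)
        if idx is not None and first <= idx <= last:
            param.requires_grad = False
            frozen.append(name)
    return frozen
```

With a PyTorch model, you would call `freeze_layer_range(model.named_parameters(), 15, 25)`. You would then pass only `[p for p in model.parameters() if p.requires_grad]` to the optimizer, so the frozen middle layers receive no updates. Embeddings and other parameters outside the `.layers.` blocks are left trainable.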

This approach offers a favorable performance-safety trade-off. It may slightly reduce peak performance compared to full fine-tuning, but it drastically reduces the risk of catastrophic safety failure. It is particularly useful for high-stakes applications where safety cannot be compromised.

Safety-Aware Probing and Dynamic Shaping

For more complex scenarios, you might need architectural interventions that monitor gradient flow in real time. Safety-Aware Probing (SAP) adds safety probes during gradient propagation to prevent optimization toward harmful directions. Think of SAP as a watchdog that checks every parameter update against a safety benchmark before allowing it to proceed.
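Here is a toy version of the watchdog idea: accept a candidate update only if it does not raise a safety loss on held-out probes by more than a tolerance. This is a simplified illustration of the description above, not the published SAP algorithm. `gated_step` and the `safety_loss` callable are hypothetical, and parameters are plain lists for clarity.

```python
def gated_step(params, grad, lr, safety_loss, tolerance=0.0):
    """Take an SGD step only if it keeps the safety loss within `tolerance`.

    safety_loss(params) -> float scores parameters on held-out safety probes
    (lower is safer). Returns (new_params, accepted).
    """
    candidate = [p - lr * g for p, g in zip(params, grad)]
    if safety_loss(candidate) <= safety_loss(params) + tolerance:
        return candidate, True
    return list(params), False  # reject: keep the current parameters


# Toy safety loss: the second parameter must stay near zero.
safety = lambda w: w[1] ** 2
print(gated_step([0.0, 1.0], [-6.0, -4.0], 0.1, safety))  # ([0.0, 1.0], False)
```

The price of this design is two extra safety evaluations per step. That cost is one reason the section calls these methods computationally heavier than gradient surgery.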

A related concept is Dynamic Safety Shaping (DSS). This framework uses fine-grained safety signals to reinforce learning from safe response segments while suppressing unsafe content. Instead of evaluating the entire response at once, DSS repurposes guardrail models to evaluate partial responses segment by segment. This allows the system to track how safety risk evolves throughout generation, providing more precise feedback to the optimizer.
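The segment-by-segment idea can be illustrated by turning prefix-level guardrail scores into per-segment training weights. This is a hypothetical sketch, not DSS's actual signal or loss. It makes three assumptions:

- `guard_risk` stands in for a guardrail model that scores a partial response.
- Segments before the risk crosses a threshold are reinforced.
- That segment and everything after it are suppressed.

```python
def segment_weights(segments, guard_risk, threshold=0.5, unsafe_weight=-1.0):
    """Assign a training weight to each response segment from prefix risk scores.

    guard_risk(prefix) -> float in [0, 1] scores the response generated so far.
    Segments get weight 1.0 until the running prefix reaches `threshold`;
    from then on every segment gets `unsafe_weight` (negative = suppress).
    """
    weights, prefix, flagged = [], "", False
    for seg in segments:
        prefix += seg
        if not flagged and guard_risk(prefix) >= threshold:
            flagged = True  # stays flagged: once the prefix is unsafe, later text inherits it
        weights.append(unsafe_weight if flagged else 1.0)
    return weights


toy_guard = lambda prefix: 1.0 if "weapon" in prefix else 0.0  # placeholder classifier
print(segment_weights(["Sure. ", "Here is how to build a weapon: ", "step 1"], toy_guard))
# [1.0, -1.0, -1.0]
```

In a training loop, each weight would scale the log-likelihood term for its segment's tokens. The optimizer then learns from the safe opening while pushing down the point where the response turns harmful, instead of scoring the whole response with a single label.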

These methods are computationally heavier than gradient surgery or layer freezing, but they offer finer control over the alignment process. They are ideal for organizations building custom AI agents that must operate in highly regulated environments.

Continuous Monitoring and Rollback Strategies

No single technique guarantees perfect safety. Continuous monitoring is essential because safety can degrade subtly over training epochs. You should evaluate safety at regular intervals, every N training steps, using a fixed safety benchmark.

Here is a practical workflow for monitoring:

- Define a baseline safety score for the model before fine-tuning, using a harmful-prompt benchmark such as AdvBench or HarmBench, or a custom red-teaming set.

- During training, run inference on this evaluation set every 100-500 steps.

- If the safety score drops below 95% of the baseline, trigger an automatic rollback to the last safe checkpoint.

- Alternatively, reduce the learning rate to slow down potentially harmful updates.
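The workflow above can be sketched as a training loop with a safety check every `eval_every` steps. This sketch makes the following assumptions:

- `train_step(state, lr)` and `safety_score(state)` are hypothetical stand-ins for your training step and benchmark, where the score could be a refusal rate such as 1 minus ASR.
- The loop combines rollback with learning-rate reduction, while the list presents these as alternatives.
- Checkpoints are kept in memory with `copy.deepcopy` for simplicity. A real run would save model and optimizer state to disk, e.g. with `torch.save`.

```python
import copy


def train_with_safety_rollback(state, train_step, safety_score, total_steps,
                               eval_every=100, threshold_frac=0.95,
                               lr=1e-4, lr_decay=0.5):
    """Train, evaluating safety every `eval_every` steps.

    If the score falls below threshold_frac * baseline, restore the last safe
    checkpoint and multiply the learning rate by lr_decay.
    Returns (final_state, events) where events logs each evaluation.
    """
    baseline = safety_score(state)
    last_safe = copy.deepcopy(state)
    events = []
    for step in range(1, total_steps + 1):
        state = train_step(state, lr)
        if step % eval_every == 0:
            score = safety_score(state)
            if score < threshold_frac * baseline:
                state = copy.deepcopy(last_safe)  # roll back to the last safe checkpoint
                lr *= lr_decay                    # and slow down further updates
                events.append((step, "rollback", score))
            else:
                last_safe = copy.deepcopy(state)  # this checkpoint becomes the new safe point
                events.append((step, "ok", score))
    return state, events
```

Logging the score at each evaluation, as `events` does here, also gives you the debugging trail the next paragraph describes. It shows which interval caused the drop.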

This proactive approach ensures that you catch alignment drift before it becomes irreversible. It also provides valuable data for debugging. By analyzing which examples caused the drop, you can refine your training data or adjust your safety constraints.

Choosing the Right Strategy for Your Use Case

The best approach depends on your risk tolerance and computational budget. Here is a quick guide to help you decide:

| Technique | Complexity | Safety Retention | Best For |
|---|---|---|---|
| SafeGrad | Low | High (92-95%) | General purpose, low resource |
| Layer Freezing | Medium | Very High | High-risk apps (healthcare, legal) |
| SAP/DSS | High | Customizable | Research, complex agents |
| LoX/NLSR | High | Near Original | Post-hoc restoration |

For moderate-risk applications like customer service bots, combining Safety-Aware Probing with regularization often strikes the right balance. For experimental projects, starting with layer freezing is a safe bet. Always remember that system prompts play a crucial role too. Using consistent prompt templates during both fine-tuning and inference helps anchor the model within its safety basin.
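One simple way to keep templates consistent is to build every prompt, for training and for inference, through a single function. In this sketch, the role tags, system prompt wording, and function names are hypothetical placeholders. For Hugging Face models, the tokenizer's `apply_chat_template` method plays this role using the model's own chat format.

```python
# Hypothetical wording and role tags; substitute your model's actual chat format.
SYSTEM_PROMPT = "You are a helpful, honest, and harmless assistant."


def build_prompt(user_message, system_prompt=SYSTEM_PROMPT):
    """Single source of truth for the prompt format used in fine-tuning AND inference."""
    return f"<|system|>\n{system_prompt}\n<|user|>\n{user_message}\n<|assistant|>\n"


def make_training_example(user_message, target_response):
    # Training data goes through the same template the deployed model will see.
    return {"prompt": build_prompt(user_message), "completion": target_response}
```

Routing both code paths through `build_prompt` removes an easy source of train/inference mismatch. Such a mismatch could otherwise show the model a system prompt format it never saw during fine-tuning.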

Does fine-tuning always make LLMs less safe?

Standard fine-tuning often degrades safety because it updates all model parameters, including those responsible for ethical alignment. However, using techniques like SafeGrad or layer freezing can preserve safety while still improving task performance.

What is SafeGrad and how does it work?

SafeGrad is a gradient surgery method that identifies conflicts between task-improving gradients and safety-preserving gradients. It modifies the task gradient to remove components that harm safety, allowing the model to learn new skills without compromising its ethical guardrails.

Which layers should I freeze to maintain safety?

In most transformer models, safety alignment is concentrated in the middle layers. For a 40-layer model, this typically includes layers 15 through 25. Freezing these layers protects the core reasoning mechanisms while allowing other parts of the model to adapt to new tasks.

How often should I monitor safety during training?

You should evaluate safety metrics every 100-500 training steps, depending on your dataset size and compute resources. Set up automatic rollbacks if safety scores drop below 95% of the baseline to prevent irreversible alignment drift.

Can I restore safety after fine-tuning has already degraded it?

Yes, post-hoc methods like Low-rank safety subspace amplification (LoX) or Neuron-level safety realignment (NLSR) can restore safety by projecting the model back into its original safety subspace or transplanting critical safety neurons from a reference model.
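Here is a toy version of the "transplant from a reference model" idea. It restores a neuron's weights from the safety-aligned reference when the fine-tuned version has drifted too far. This is not NLSR's actual procedure. It makes three assumptions:

- Safety-critical neuron indices have already been identified.
- Drift is measured by cosine similarity against a threshold.
- Weights are lists of rows, one row per neuron.

```python
import math


def cosine(u, v):
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    if nu == 0 or nv == 0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)


def restore_safety_neurons(finetuned, reference, safety_neurons, min_similarity=0.9):
    """Copy reference rows back for safety-critical neurons that drifted too far.

    Returns (patched_matrix, restored_indices); `finetuned` is not modified.
    """
    patched = [row[:] for row in finetuned]
    restored = []
    for i in safety_neurons:
        if cosine(finetuned[i], reference[i]) < min_similarity:
            patched[i] = reference[i][:]
            restored.append(i)
    return patched, restored
```

Only the flagged neurons are touched. Task knowledge stored elsewhere in the fine-tuned weights survives the repair.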