Imagine building a beautiful, modern dashboard in seconds using a generative AI tool. It looks sleek, the layout is perfect, and the code is clean. But for a user who relies on a screen reader or navigates exclusively with a keyboard, that same interface might be a complete dead end. When AI generates a button that looks like a button but lacks the underlying code to tell a screen reader it's actually a button, we've created a digital wall.

The promise of AI is to democratize design, but if we aren't careful, we're just automating the exclusion of millions of people. The real challenge isn't getting AI to write HTML; it's getting AI to understand the nuanced requirements of WCAG 2.1. WCAG, the Web Content Accessibility Guidelines, is a set of international standards that ensure digital content is accessible to people with disabilities. These guidelines are the gold standard, and their "Operable" principle requires that anyone, regardless of input method, can navigate a site effectively.

The Basics of Accessible AI Components

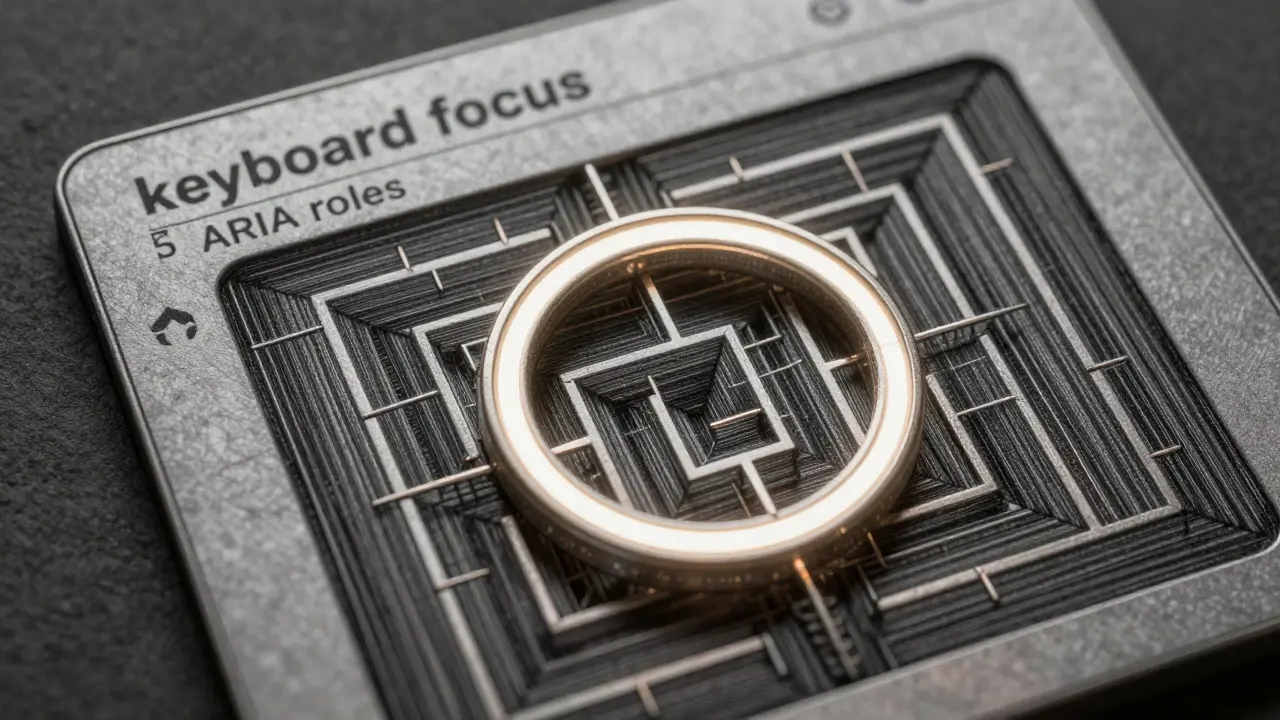

For an AI-generated component to be truly accessible, it can't just "look" right. It needs a foundation of semantic HTML and a layer of communication for assistive technologies. This usually involves three core pillars: keyboard focus, screen reader compatibility, and structural logic.

First, there is the matter of ARIA (Accessible Rich Internet Applications). ARIA is a set of attributes added to HTML elements that provide extra information to screen readers when standard HTML tags aren't enough. For example, if an AI generates a custom div-based dropdown, it must add role="combobox" and aria-expanded="false" so a blind user knows what the element does and whether it's open.
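To make the dropdown example concrete, here is a minimal sketch of the attributes a div-based combobox trigger would need. The helper name `comboboxTriggerAttributes` and the `ComboboxState` shape are hypothetical, invented for illustration; only the ARIA attribute names and values themselves come from the ARIA specification.

```typescript
// Hypothetical helper: the function and type names are illustrative,
// not part of any library or AI tool mentioned in this article.
interface ComboboxState {
  expanded: boolean; // is the popup list currently open?
  listboxId: string; // id of the element that holds the options
  label: string;     // accessible name announced by the screen reader
}

type AriaAttributeMap = { [attribute: string]: string };

function comboboxTriggerAttributes(state: ComboboxState): AriaAttributeMap {
  return {
    role: "combobox",
    // Must be updated every time the popup opens or closes.
    "aria-expanded": state.expanded ? "true" : "false",
    "aria-controls": state.listboxId,
    "aria-haspopup": "listbox",
    "aria-label": state.label,
    // A div is not keyboard-focusable by default, so it needs a tabindex.
    tabindex: "0",
  };
}
```

The point of the sketch is that aria-expanded is state, not decoration: generated code that sets it once and never updates it will tell a screen reader user the dropdown is closed while it is visibly open.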

Then there is focus management. Have you ever pressed the Tab key on a website and felt like your cursor disappeared into a void? That's a failure in focus order. AI tools often struggle with this, especially in dynamic content like modals. A properly generated component must move the keyboard focus into a modal when it opens and keep it contained there until the user closes the window, while always offering a keyboard way out (typically the Escape key). Without that exit, the result is the kind of "keyboard trap" that Dr. Sarah Horton notes still appears in about 22% of AI implementations.
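The modal behavior described above can be sketched as two small pure functions: one that decides where focus goes on Tab or Shift+Tab, and one that recognizes the exit key. This is a sketch under stated assumptions, not the approach of any particular tool; the names `nextFocusIndex` and `isCloseKey` are hypothetical.

```typescript
// Hypothetical helpers for a modal focus loop; not taken from any library.

// Returns the index of the element that should receive focus next.
// `current` is the index of the focused element among the modal's
// focusable elements, or -1 if focus is not yet inside the modal.
function nextFocusIndex(current: number, count: number, shiftKey: boolean): number {
  if (count <= 0) {
    return -1; // nothing focusable: the caller should focus the modal container
  }
  if (current < 0 || current >= count) {
    return shiftKey ? count - 1 : 0; // enter at the end or at the start
  }
  const step = shiftKey ? -1 : 1;
  return (current + step + count) % count; // wrap around at both edges
}

// The keyboard exit. Without it, the focus loop above becomes a keyboard trap.
function isCloseKey(key: string): boolean {
  return key === "Escape";
}
```

In a real component, a keydown handler would pass `event.key` and `event.shiftKey` into these functions and then call `focus()` on the chosen element. Keeping the index arithmetic separate from the DOM makes the wrap-around logic easy to unit test.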

Comparing AI Accessibility Tools

Not all AI tools handle these requirements the same way. Some act as "accessibility assistants" that fix existing code, while others try to build accessible components from the ground up. Depending on whether you are a designer moving toward code or a developer refining a product, your choice of tool will change.

| Tool | Primary Approach | Key Strength | Typical Price |
|---|---|---|---|
| UXPin AI Component Creator | Design-to-Code | Generates React components with semantic HTML | $19/user/month |
| Workik AI Generator | Code Optimization | Fixes ARIA labels and identifies WCAG violations | Free / $29/month |
| AI SDK (v2.3) | Framework-First | Built-in keyboard navigation primitives | Variable |
| Aqua-Cloud | Automated Testing | Enterprise-scale keyboard issue detection | $499/month |

The Gap Between Automated Code and Human Experience

There is a dangerous temptation to believe that if an AI tool says a component is "WCAG compliant," it actually is. But automated tools only catch a fraction of the problems. A study by Deque found that automation catches only about 30% of screen reader issues. Why? Because AI can check whether an image has an alt attribute, but it can't always tell whether the description of that image actually makes sense in the context of the page.
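The gap between "has alt text" and "has useful alt text" is easy to show in code. The sketch below pairs the mechanical check with a rough heuristic for placeholder text. The `ImageRecord` shape, the function names, and the placeholder word list are all assumptions made for illustration, and the heuristic is deliberately crude: it can flag obvious junk, but it cannot judge whether a description fits the page.

```typescript
// Hypothetical types and helpers; the word list is an illustrative assumption.
interface ImageRecord {
  src: string;
  alt?: string;
}

// The mechanical check: passes as soon as any alt attribute exists.
function hasAltAttribute(image: ImageRecord): boolean {
  return typeof image.alt === "string";
}

const PLACEHOLDER_ALT_WORDS = ["image", "photo", "picture", "chart", "graphic"];

// A rough heuristic for alt text that exists but says nothing.
function looksLikePlaceholderAlt(alt: string): boolean {
  const normalized = alt.trim().toLowerCase();
  if (normalized.length === 0) {
    return false; // an empty alt is valid for purely decorative images
  }
  if (/\.(png|jpe?g|gif|svg|webp)$/.test(normalized)) {
    return true; // a file name was used as the description
  }
  // Strip trailing numbering such as "chart 1" or "image-2".
  const withoutNumbering = normalized.replace(/[\s\d_-]+$/, "");
  return PLACEHOLDER_ALT_WORDS.indexOf(withoutNumbering) !== -1;
}
```

An image with alt text "Chart 1" passes `hasAltAttribute` and is flagged by `looksLikePlaceholderAlt`, but a fluent, wrong description would pass both. That remaining case is the part that still needs a human reviewer.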

Take React Aria, for example: an open-source library by Adobe that provides unstyled accessibility primitives for building design systems. It's incredibly powerful because it handles the complex keyboard logic (like arrow key navigation in a menu) without the AI having to "guess" the behavior. When developers pair AI generation with libraries like React Aria, they often see a 25-40% reduction in implementation time while maintaining higher quality.

We also see a disparity in accuracy. Research from Carnegie Mellon University shows that while AI tools are decent at keyboard accessibility (78% compliance), they plummet to 52% when it comes to complex screen reader interactions. This suggests that while the "mechanical" part of accessibility (tabs and enters) is getting easier for AI, the "communicative" part (meaningful ARIA descriptions) still requires a human touch.

Practical Workflow for Implementing AI UI

If you're integrating AI-generated components into a professional project, you can't just copy-paste. You need a validation pipeline. Expert teams generally allocate about 15-20% of their sprint time specifically for accessibility validation.

Here is a reliable sequence for deploying these components:

- Define Design Tokens: Set your base font size (min 16px) and touch targets (min 44x44 pixels) before generating code. This ensures motor accessibility is baked in.

- Generate Semantic Base: Use tools like UXPin or Workik to create the initial HTML structure. Ensure the AI uses <button> instead of <div> wherever possible.

- Apply ARIA Overlays: Manually review the generated labels. If the AI labeled a complex chart as "Chart 1," rewrite it to describe the actual trend of the data.

- Keyboard Stress Test: Tab through the entire component. Does the focus move logically? Does it get stuck in a loop?

- Screen Reader Validation: Run the component through NVDA or JAWS. If the screen reader announces "clickable" but doesn't say what happens when you click, the component fails.
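The first step in the sequence above, checking design tokens before any code is generated, is the easiest one to automate. Here is a minimal sketch that applies the 16px and 44x44 minimums from that step. The `DesignTokens` shape and the function name `findTokenProblems` are hypothetical; a real design system would likely store its tokens in a different structure.

```typescript
// Hypothetical token shape; adapt the field names to your own design system.
interface DesignTokens {
  baseFontSizePx: number;
  touchTargetWidthPx: number;
  touchTargetHeightPx: number;
}

// Minimums taken from the "Define Design Tokens" step above.
const MIN_FONT_SIZE_PX = 16;
const MIN_TOUCH_TARGET_PX = 44;

// Returns a list of human-readable problems; an empty list means the tokens pass.
function findTokenProblems(tokens: DesignTokens): string[] {
  const problems: string[] = [];
  if (tokens.baseFontSizePx < MIN_FONT_SIZE_PX) {
    problems.push(
      "Base font size " + tokens.baseFontSizePx + "px is below the " +
      MIN_FONT_SIZE_PX + "px minimum"
    );
  }
  if (
    tokens.touchTargetWidthPx < MIN_TOUCH_TARGET_PX ||
    tokens.touchTargetHeightPx < MIN_TOUCH_TARGET_PX
  ) {
    problems.push(
      "Touch target " + tokens.touchTargetWidthPx + "x" + tokens.touchTargetHeightPx +
      "px is below the " + MIN_TOUCH_TARGET_PX + "x" + MIN_TOUCH_TARGET_PX + "px minimum"
    );
  }
  return problems;
}
```

A check like this can run before the generation step so that undersized tokens never reach the AI tool. The later steps (label review, keyboard stress test, screen reader validation) do not reduce to a function like this, which is why they stay manual.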

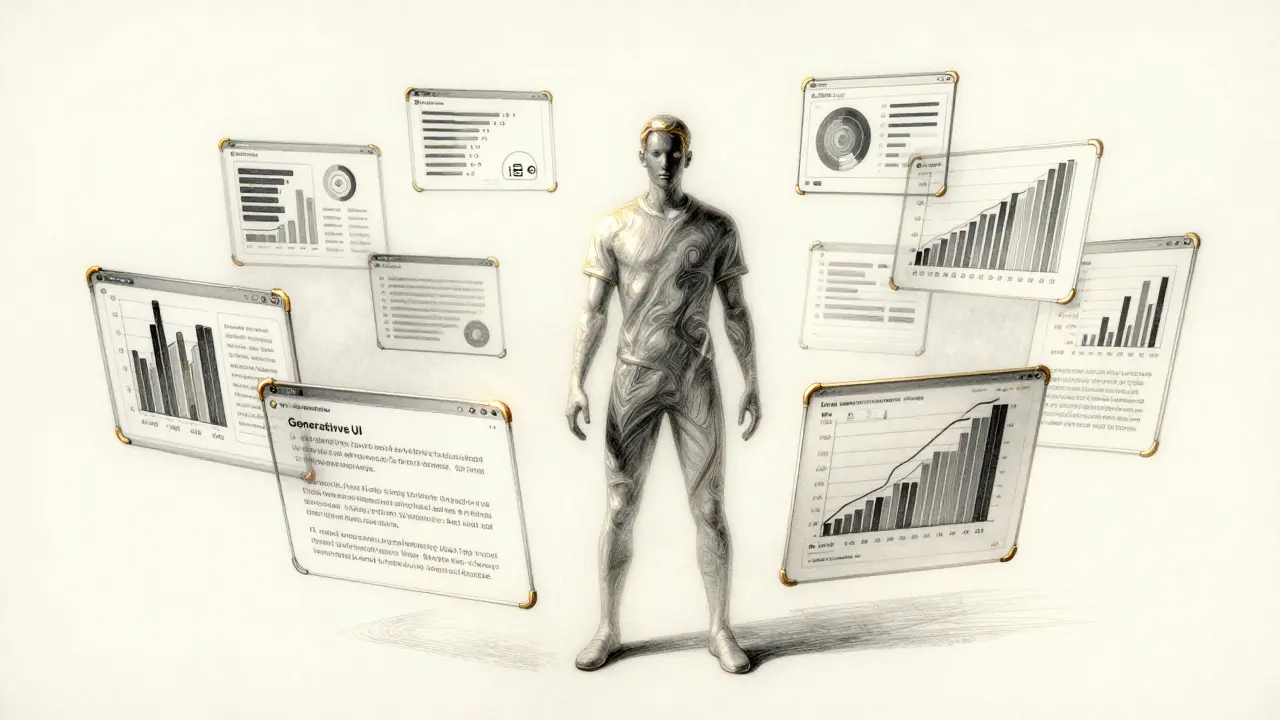

The Future: From Compliance to Personalization

We are moving away from a world where we build one "accessible" version of a site for everyone. Jakob Nielsen has argued that we should be moving toward Generative UI, where the AI doesn't just follow a checklist but actually generates a unique interface for every single user based on their specific needs.

Imagine a site that detects a user has severe motor impairment and automatically increases touch targets and simplifies keyboard shortcuts in real-time. Or a site that rewrites complex data visualizations into a narrative list for a screen reader user on the fly. This is the shift from compliance (avoiding a lawsuit) to personalization (actually helping the user).

However, this future comes with risks. The US Department of Justice recently dealt with a settlement where an organization's AI-generated content failed Section 508 requirements despite passing automated tests. The lesson is clear: AI is a powerful drafting tool, but it is not a certified accessibility auditor.

Can AI completely replace manual accessibility testing?

No. While AI can identify common errors and generate base code, it cannot replace human judgment for complex interactions. Automated tools typically catch only about 30% of screen reader issues, and human testers are needed to ensure that the user experience is actually intuitive, not just technically compliant.

What is a keyboard trap in AI-generated UI?

A keyboard trap occurs when a user can move focus into a component (like a modal or a date picker) using the Tab key but cannot move it back out or close the element using the keyboard. This effectively "traps" the user and prevents them from accessing the rest of the page.

Which screen readers should I use to test AI components?

The most common industry standards are NVDA (free and widely used on Windows), JAWS (the enterprise standard for Windows), and VoiceOver (built into macOS and iOS). Testing across at least two of these ensures a broader range of compatibility.

What are ARIA roles and why do they matter for AI?

ARIA roles tell assistive technology what a specific element is (e.g., a tab, a slider, or an alert). Because AI often generates custom visual elements using generic tags like <div>, ARIA roles are essential to communicate the purpose of those elements to users who cannot see them.

How does WCAG 2.2 impact AI-generated components?

WCAG 2.2 introduced new criteria focusing on drag-and-drop interactions and focus appearance. For AI-generated UI, this means the AI must not only provide a keyboard alternative to dragging but also ensure that the focus indicator is clearly visible and high-contrast against the background.
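Whether a focus indicator is "high-contrast" can be checked numerically using the contrast ratio formula that WCAG defines. The sketch below implements that formula for six-digit hex colors. The function names are hypothetical, and the 3:1 threshold is the ratio WCAG uses for non-text interface elements; treat it as an assumption to confirm against the conformance level you are targeting.

```typescript
// Converts an 8-bit sRGB channel to its linear value, per the WCAG definition
// of relative luminance.
function channelToLinear(channel8Bit: number): number {
  const c = channel8Bit / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Accepts only "#rrggbb" colors; anything else is rejected.
function relativeLuminance(hex: string): number {
  if (!/^#[0-9a-fA-F]{6}$/.test(hex)) {
    throw new Error("Expected a color like #1a2b3c, got: " + hex);
  }
  const r = parseInt(hex.slice(1, 3), 16);
  const g = parseInt(hex.slice(3, 5), 16);
  const b = parseInt(hex.slice(5, 7), 16);
  return 0.2126 * channelToLinear(r) + 0.7152 * channelToLinear(g) + 0.0722 * channelToLinear(b);
}

// WCAG contrast ratio: (lighter + 0.05) / (darker + 0.05), from 1 to 21.
function contrastRatio(hexA: string, hexB: string): number {
  const a = relativeLuminance(hexA);
  const b = relativeLuminance(hexB);
  const lighter = Math.max(a, b);
  const darker = Math.min(a, b);
  return (lighter + 0.05) / (darker + 0.05);
}

// Assumed threshold: 3:1, the WCAG ratio for non-text interface elements.
const MIN_FOCUS_CONTRAST = 3;

function focusIndicatorIsVisible(indicatorHex: string, backgroundHex: string): boolean {
  return contrastRatio(indicatorHex, backgroundHex) >= MIN_FOCUS_CONTRAST;
}
```

A check like this only covers color. It says nothing about whether the indicator is thick enough or hidden behind another element, so it complements a manual keyboard pass rather than replacing it.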