Imagine you're using an AI medical assistant to check drug interactions. The AI gives you a confident answer, but it completely ignores a critical warning found in the medical journal it just retrieved. Or worse, it hallucinates a side effect that doesn't exist. In these high-stakes scenarios, a "pretty good" response isn't enough. We need a way to prove that the AI is actually telling the truth and staying loyal to its sources. This is where Retrieval-Augmented Generation (RAG) comes in. RAG is a technique that gives Large Language Models (LLMs) access to external, verifiable data to reduce hallucinations and improve accuracy. It transforms the LLM from a closed-box predictor into a system that can "look up" information before speaking.

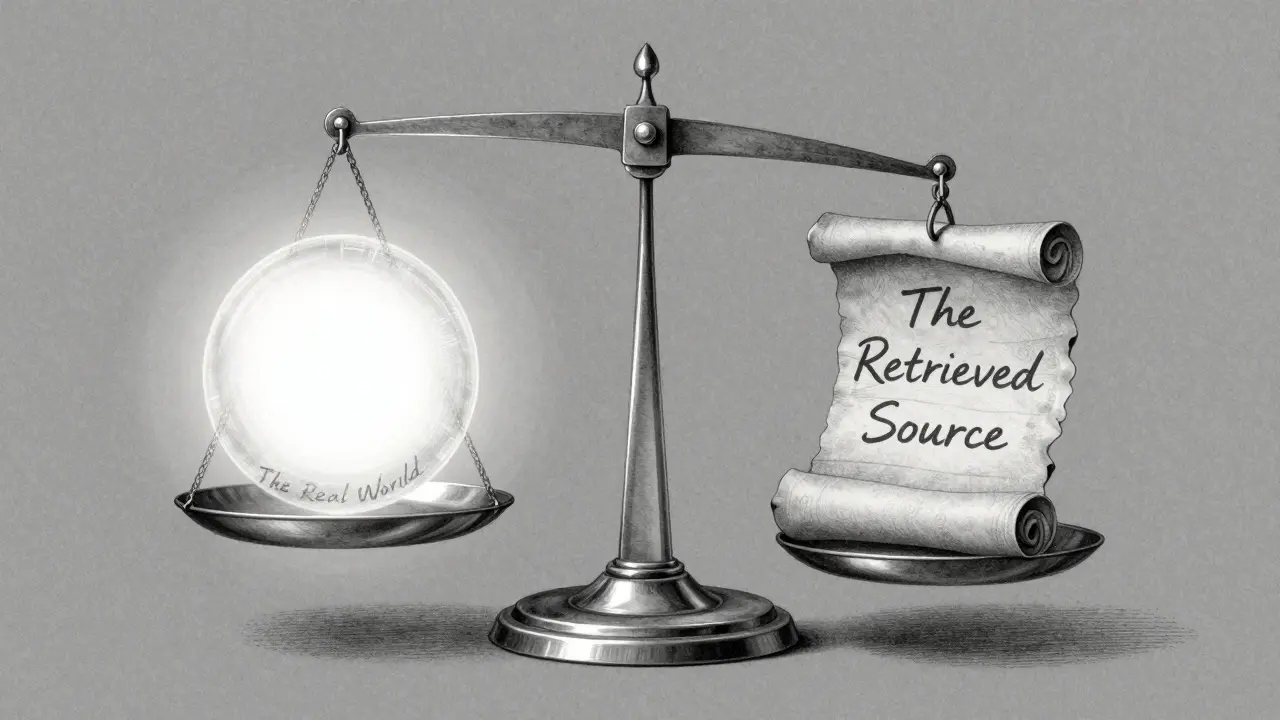

But how do we actually measure if a RAG system is working? Most people confuse factuality with faithfulness, but they are two very different beasts. If your AI quotes a source perfectly but that source is a prank website, the AI is being faithful, but it isn't being factual. Conversely, if the AI knows a fact from its own training data but ignores the provided document, it's factual, but not faithful. To build a reliable system, you need to track both.

The Core Difference: Factuality vs. Faithfulness

To get your evaluation right, you first have to stop using these terms interchangeably. Factuality refers to whether a statement matches the real world. If the AI says "The capital of France is Paris," it's factual. Faithfulness is about the relationship between the output and the retrieved context. If the retrieved document says "The capital of France is Lyon" and the AI repeats that, the AI is being faithful to the context, even though it's factually wrong.

This distinction is a lifesaver when debugging. If your system has low faithfulness, your generator is hallucinating. If it has low factuality but high faithfulness, your retrieval system is feeding it garbage. You can't fix a retrieval problem by tweaking your prompt; you have to fix your data source or your embedding model.
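
The triage rule above can be written down as a small function. This is a minimal sketch, assuming you already have a faithfulness score and a factuality score between 0 and 1 for a batch of answers; the function name `diagnose` and the 0.8 threshold are hypothetical choices for illustration, not part of any framework.

```python
def diagnose(faithfulness: float, factuality: float, threshold: float = 0.8) -> str:
    """Point at the component most likely at fault, given two scores in [0, 1].

    The threshold is an arbitrary illustrative cut-off, not a recommended value.
    """
    if faithfulness < threshold:
        # The answer strays from the retrieved context: look at the generator.
        return "generator"
    if factuality < threshold:
        # The answer follows the context but is still wrong: the context is bad.
        return "retrieval"
    return "healthy"


if __name__ == "__main__":
    print(diagnose(faithfulness=0.95, factuality=0.40))
    print(diagnose(faithfulness=0.50, factuality=0.90))
```

Checking faithfulness first mirrors the reasoning in the text: an unfaithful generator makes the factuality score hard to interpret, so it is worth ruling that out before blaming the retriever.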

| Dimension | Key Question | Failure Mode | Primary Fix |
|---|---|---|---|
| Factuality | Is this true in the real world? | Hallucinations / Outdated data | Better Knowledge Base / SFT |
| Faithfulness | Does it follow the provided text? | "Creative" interpretations | Prompt Engineering / Temperature drop |
| Sufficiency | Was enough info retrieved? | Incomplete answers | Increase Recall@k / Better Chunking |

The RAGAS Framework and Technical Metrics

One of the most popular ways to quantify these concepts today is through RAGAS, which is a specialized assessment suite designed to evaluate RAG pipelines without requiring human-annotated ground truth labels. Instead of comparing an AI answer to a "gold" human answer, RAGAS uses the retrieved context and the generated answer to calculate scores.

When you're digging into the technicals, focus on these two metrics: context precision and context recall. Context precision tells you how much of what you retrieved is actually useful rather than noise. It's calculated by dividing the number of relevant evidence pieces retrieved by the total number of pieces retrieved. If you retrieve ten documents but only two are actually relevant, your precision is 0.2, and you're essentially forcing the LLM to sift through a lot of junk.

Context recall, on the other hand, asks: "Did we actually find everything we needed?" You divide the relevant evidence used by the total relevant evidence available in the entire dataset. If the answer to a question requires three specific facts and your retriever only finds one, your recall is low, and the AI will either guess the rest or tell you it doesn't know.
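
Both ratios are easy to compute once you have relevance labels. The sketch below follows the plain definitions given in the text, assuming each evidence piece has an ID and you know which IDs are relevant; the function names and IDs are made up for illustration, and the RAGAS library's own implementations may differ in detail.

```python
def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Share of retrieved evidence pieces that are actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for doc_id in retrieved if doc_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Share of all relevant evidence pieces that the retriever found."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved) & relevant)
    return hits / len(relevant)


if __name__ == "__main__":
    # Ten documents retrieved, only two of them relevant.
    retrieved = [f"d{i}" for i in range(1, 11)]
    print(context_precision(retrieved, {"d1", "d2"}))

    # Three facts needed, only one of them found.
    print(context_recall(["f1"], {"f1", "f2", "f3"}))
```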

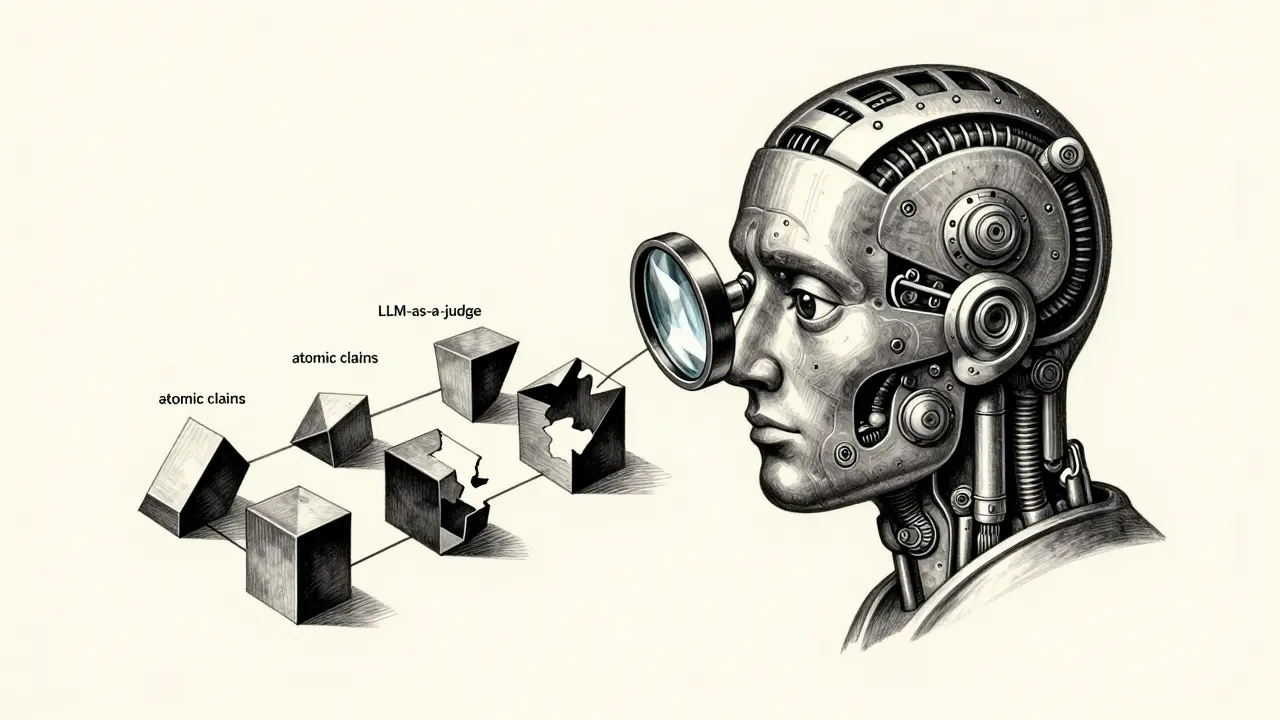

Using LLM-as-a-Judge for Faithfulness

Traditional NLP metrics like BLEU or ROUGE are almost useless for RAG. They just check if words overlap. If the AI says "The medication is safe" and the gold answer is "The drug is not dangerous," BLEU might give it a low score because the words are different, even though the meaning is identical. That's why the industry has shifted toward the LLM-as-a-judge approach, where a more powerful model (like GPT-4) evaluates the output of a smaller one.
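
To see the overlap problem concretely, here is a simplified unigram-overlap F1 score. It is a stand-in for the general idea behind BLEU and ROUGE, not an implementation of either metric, and the function name is an assumption made for this example.

```python
from collections import Counter


def unigram_f1(candidate: str, reference: str) -> float:
    """F1 over shared lowercase words; a toy proxy for n-gram overlap metrics."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # words shared by both, with counts
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    reference = "The drug is not dangerous"
    paraphrase = "The medication is safe"      # same meaning, different words
    contradiction = "The drug is dangerous"    # opposite meaning, same words
    print(round(unigram_f1(paraphrase, reference), 2))
    print(round(unigram_f1(contradiction, reference), 2))
```

With these inputs the correct paraphrase scores about 0.44 while the contradictory answer scores about 0.89, which is exactly backwards from what a grounding metric should report.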

A practical way to do this is by using a strict prompt template. For example, companies using Evidently AI often ask the judge model: "Is the answer faithful to the retrieved context, or does it add unsupported information, omit important details, or contradict the source?" The judge is forced to return a binary "faithful" or "not faithful." This removes the ambiguity and gives you a hard percentage of faithfulness across thousands of queries.
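
A binary judge like this can be wired up in a few lines. The sketch below is an illustration under assumptions: `judge` is a hypothetical callable that sends a prompt to whatever judge model you use and returns its text reply, and the template wording around the quoted question is invented for the example rather than taken from Evidently AI.

```python
from typing import Callable

JUDGE_TEMPLATE = """You are grading the output of a RAG system.

Context:
{context}

Answer:
{answer}

Is the answer faithful to the retrieved context, or does it add unsupported
information, omit important details, or contradict the source?
Reply with exactly one label: "faithful" or "not faithful"."""


def build_judge_prompt(context: str, answer: str) -> str:
    return JUDGE_TEMPLATE.format(context=context, answer=answer)


def parse_verdict(raw: str) -> bool:
    """Turn the judge's reply into True (faithful) or False (not faithful)."""
    label = raw.strip().strip('."').lower()
    if label == "faithful":
        return True
    if label == "not faithful":
        return False
    # Refuse to guess when the judge ignores the output format.
    raise ValueError(f"Unexpected judge output: {raw!r}")


def faithfulness_rate(samples: list[tuple[str, str]], judge: Callable[[str], str]) -> float:
    """Fraction of (context, answer) pairs the judge labels as faithful."""
    if not samples:
        raise ValueError("samples must not be empty")
    verdicts = [parse_verdict(judge(build_judge_prompt(c, a))) for c, a in samples]
    return sum(verdicts) / len(verdicts)
```

Raising on malformed replies, instead of silently counting them as failures, keeps formatting problems in the judge from being mistaken for faithfulness problems in the generator.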

However, don't trust the judge blindly. Research shows that even high-end verifiers can struggle. Some studies have seen F1 scores as low as 0.63 when identifying false claims. F1 combines precision and recall, so it is not a direct error rate, but a score that low means the "judge" misses or mislabels a substantial share of false claims. To combat this, you should implement granular claim verification. Instead of judging the whole paragraph, break the response into "atomic claims" (single, simple statements) and verify each one individually.
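
Claim-by-claim verification might look like the following sketch. Two simplifications are assumed here: claims are split naively at sentence boundaries (in practice an LLM usually does the decomposition), and `is_supported` is a hypothetical callable standing in for whatever verifier you use.

```python
import re
from typing import Callable


def split_into_claims(response: str) -> list[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    return [p for p in parts if p]


def verify_claims(
    response: str,
    context: str,
    is_supported: Callable[[str, str], bool],
) -> dict:
    """Check every claim on its own and report which ones lack support."""
    claims = split_into_claims(response)
    unsupported = [c for c in claims if not is_supported(c, context)]
    supported_share = (len(claims) - len(unsupported)) / len(claims) if claims else 0.0
    return {"claims": claims, "unsupported": unsupported, "supported_share": supported_share}


if __name__ == "__main__":
    # Toy verifier: a claim counts as supported only if it appears in the context.
    def toy_verifier(claim: str, context: str) -> bool:
        return claim.rstrip(".").lower() in context.lower()

    report = verify_claims(
        "Drug A lowers blood pressure. Drug A cures insomnia.",
        "Drug A lowers blood pressure.",
        toy_verifier,
    )
    print(report["unsupported"])
```

The useful output is the list of unsupported claims rather than the aggregate score, because it tells you exactly which sentence to inspect.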

Real-World Benchmarks: Testing Your System

To know if your RAG system is actually ready for production, you need to run it against recognized datasets. Depending on what you're building, different benchmarks will be more useful:

- TruthfulQA: Great for testing if your model resists common misconceptions or "false beliefs." It contains 817 questions specifically designed to trick LLMs.

- HotpotQA: Use this if your AI needs to perform multi-step reasoning. It requires the model to find pieces of information from multiple documents to arrive at a single answer.

- StrategyQA: Ideal for testing implicit multi-step reasoning, as it involves questions that don't have a direct answer in a single text but require the model to infer the intermediate reasoning steps itself.

- MMLU: A widely used benchmark for general knowledge across 57 subjects, helping you see if your model's base knowledge is complementing the RAG process.

If you're working in a specialized field, like healthcare or finance, generic benchmarks won't cut it. You'll likely need to build a custom dataset. In these industries, 85% of deployments use strict faithfulness metrics because a mistake isn't just a bad user experience; it's a regulatory violation or a safety risk.

Common Pitfalls and Trade-offs

As you implement these metrics, you'll hit a few classic walls. The biggest one is the tension between recall and precision. If you increase your k value (retrieving more documents), you'll likely improve your context recall. You're more likely to find the needle in the haystack. But there's a catch: too many documents introduce noise. The LLM can get distracted by irrelevant information, which actually tanks your answer precision and increases the chance of hallucinations.
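
The trade-off shows up as soon as you sweep k over a ranked result list. The ranking and relevance labels below are invented for illustration; the sketch only demonstrates the arithmetic, not any particular retriever.

```python
def precision_recall_at_k(ranked: list[str], relevant: set[str], k: int) -> tuple[float, float]:
    """Context precision and recall when only the top-k results are kept."""
    top_k = ranked[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


if __name__ == "__main__":
    # Hypothetical ranking: "d*" are relevant documents, "x*" are noise.
    ranked = ["d1", "x1", "d2", "x2", "x3", "x4", "d3", "x5", "x6", "x7"]
    relevant = {"d1", "d2", "d3"}
    for k in (1, 3, 10):
        precision, recall = precision_recall_at_k(ranked, relevant, k)
        print(f"k={k:>2}  precision={precision:.2f}  recall={recall:.2f}")
```

In this made-up ranking, recall only reaches 1.0 at k=10, by which point seven of the ten documents handed to the LLM are noise.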

Another trap is the "snowballing hallucination." This happens when a model makes one small factual error early in a response, and then spends the rest of the paragraph building a logical argument to support that error. If you only evaluate the final answer, you might miss the point where it first went off the rails. This is why the FActScore method, which breaks responses into atomic claims, is so critical.

Lastly, be aware of the cost. Advanced frameworks like SAFE (Search-Augmented Factuality Evaluator) dynamically retrieve evidence during the evaluation process. This means for every single query you test, you're making multiple extra API calls to a search engine and an LLM judge. For a large test set, this can blow through your budget quickly.
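
A quick back-of-the-envelope estimate helps before committing to a search-augmented evaluator. Every number in this sketch is a placeholder assumption (claims per response, searches per claim, and both prices); substitute your own figures.

```python
def estimate_eval_cost(
    num_queries: int,
    claims_per_response: int,
    searches_per_claim: int,
    judge_calls_per_claim: int,
    price_per_search: float,
    price_per_judge_call: float,
) -> dict:
    """Rough call counts and cost for a claim-level, search-backed evaluation."""
    total_claims = num_queries * claims_per_response
    search_calls = total_claims * searches_per_claim
    judge_calls = total_claims * judge_calls_per_claim
    total_cost = search_calls * price_per_search + judge_calls * price_per_judge_call
    return {
        "search_calls": search_calls,
        "judge_calls": judge_calls,
        "total_cost": total_cost,
    }


if __name__ == "__main__":
    # Placeholder inputs: 1,000 test queries, 10 claims each, made-up prices.
    estimate = estimate_eval_cost(1000, 10, 3, 1, 0.005, 0.01)
    print(estimate["search_calls"], estimate["judge_calls"])
    print(round(estimate["total_cost"], 2))
```

Under these assumed inputs a 1,000-query test set already triggers 40,000 external calls, which is why sampling a subset of queries for the expensive check is a common compromise.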

What is the difference between context relevance and context sufficiency?

Context relevance (precision) measures how much of the retrieved information is actually useful for answering the query; essentially, how much "noise" is in your results. Context sufficiency (recall) measures whether the retrieved information contains all the necessary pieces of evidence required to answer the question completely. You can have high relevance but low sufficiency if you only retrieve one perfect sentence but miss the other three needed for a full answer.

Why can't I just use ROUGE or BLEU for RAG?

ROUGE and BLEU measure n-gram overlap, meaning they check if the AI used the exact same words as a reference answer. In RAG, the AI might provide a perfectly factual and faithful answer using different wording than the reference. These metrics penalize correct answers that are paraphrased and reward incorrect answers that happen to use the same keywords, making them unreliable for grounding.

How do I stop my RAG model from adding unsupported info?

The best approach is a combination of three things: first, set your temperature to 0 to reduce creativity. Second, use a system prompt that explicitly tells the model to say "I don't know" if the answer isn't in the retrieved text. Third, implement a guardrail using an LLM-as-a-judge to flag responses that contain claims not supported by the context.
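
Put together, the three measures could be sketched like this. `generate` and `is_faithful` are hypothetical callables standing in for your model client and your judge, and the prompt wording is only an example.

```python
from typing import Callable

TEMPERATURE = 0.0  # step 1: minimize sampling randomness
SYSTEM_PROMPT = (  # step 2: tell the model to refuse instead of guessing
    "Answer using only the provided context. "
    "If the context does not contain the answer, reply exactly: I don't know."
)
REFUSAL = "I don't know."


def guarded_answer(
    question: str,
    context: str,
    generate: Callable[[str, str, str, float], str],
    is_faithful: Callable[[str, str], bool],
) -> tuple[str, bool]:
    """Return (answer, flagged). flagged is True when the judge finds unsupported claims."""
    answer = generate(SYSTEM_PROMPT, context, question, TEMPERATURE)
    if answer.strip() == REFUSAL:
        # An explicit refusal adds no claims, so there is nothing to check.
        return answer, False
    # Step 3: guardrail. Flag answers the judge considers unsupported.
    flagged = not is_faithful(context, answer)
    return answer, flagged
```

Returning a flag instead of silently replacing the answer is a design choice for this sketch: it lets the calling application decide whether to block, rewrite, or escalate the response.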

What is the 'LLM-as-a-judge' approach?

This is an evaluation method where a highly capable model (like GPT-4o or Claude 3.5) is given the prompt, the retrieved context, and the generated answer. It is then asked to act as a grader, scoring the answer based on specific criteria like faithfulness or relevance. It's faster than human review and more flexible than hard-coded string matching.

Can fine-tuning improve RAG factuality?

Yes, domain-specific supervised fine-tuning (SFT) with knowledge injection can help. In some medical and legal domains, this has led to 15-22% accuracy improvements. However, fine-tuning only improves the model's internal knowledge; it doesn't fix a broken retrieval pipeline. You still need RAG and faithfulness metrics to ensure the model is using the most current data.

Next Steps for Implementation

If you're just starting, don't try to build a full SAFE-style pipeline on day one. Start with the basics: implement context relevance and answer relevance. These are the easiest to track and give you immediate feedback on whether your retriever is the problem or your generator is.

Once those are stable, move toward a faithfulness check using a prompt-based judge. If you see high error rates in specific categories, such as time-sensitive queries, consider adding a metadata filter to your retrieval to ensure you're only pulling the most recent documents. Finally, for any application that could impact a user's health or finances, you must implement a granular, claim-by-claim verification process to catch those elusive "snowballing" hallucinations.
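
A recency filter on retrieval results can be as simple as the sketch below. It assumes each retrieved document is a dict with a `published` date in its metadata; that field name and the sample documents are invented for the example, and many vector stores offer their own filtering options that would replace this post-filter.

```python
from datetime import date


def filter_recent(documents: list[dict], cutoff: date) -> list[dict]:
    """Keep only documents published on or after the cutoff date."""
    return [doc for doc in documents if doc["published"] >= cutoff]


if __name__ == "__main__":
    retrieved = [
        {"id": "guideline-old", "published": date(2019, 5, 1)},
        {"id": "guideline-new", "published": date(2024, 2, 15)},
    ]
    recent = filter_recent(retrieved, cutoff=date(2023, 1, 1))
    print([doc["id"] for doc in recent])
```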