To fix this, you need RAG privacy controls is a security framework designed to restrict sensitive data access and mask private information before it ever reaches a Large Language Model. Without these, you aren't just building a tool; you're building a massive data leakage vector. In fact, the Cloud Security Alliance found that 78% of organizations using RAG during their proof-of-concept phase hit at least one data leak because they lacked these specific controls.

The Danger of the "Flat" Vector Store

In a basic RAG setup, the process is simple: a user asks a question, the system finds the most mathematically similar chunks in a vector database, and those chunks are sent to the LLM to generate an answer. The problem? Vector databases aren't naturally "aware" of who is asking the question. If the data is there, the retriever finds it. This effectively gives every user root-access to the entire dataset.

This vulnerability is exacerbated by the fact that many popular vector stores don't have built-in enterprise security. For instance, developers frequently report on platforms like Reddit and GitHub that implementing Row-Level Security (RLS)-which ensures a user only sees the rows they are authorized to see-is a manual, grueling process. When you rely solely on the LLM to "behave" and not reveal secrets, you're gambling with your company's compliance and customer trust.

Implementing Row-Level Security via Metadata Filtering

The most practical way to stop unauthorized data access is by using metadata filtering. Instead of just storing the text embedding, you attach an authorization tag to every chunk of data. This could be a department ID, a security clearance level, or a specific project code.

When a query comes in, the system doesn't just search for "relevant" text; it applies a hard filter to the query. For example, if a user from the Finance department asks a question, the system automatically appends a filter: WHERE department == 'Finance'. This ensures the retriever only looks at Finance-related vectors. Databricks has demonstrated this approach to achieve 100% data isolation between departments, ensuring that HR personnel can't accidentally retrieve Finance data and vice versa.

| Strategy | Implementation Effort | Latency Impact | Security Level |

|---|---|---|---|

| Metadata Filtering | Moderate (30-40 hrs) | Low (2-5%) | Medium |

| Policy-Based (e.g., Cerbos) | Moderate (2-3 week curve) | Low | High |

| Context-Based (CBAC) | High (Enterprise setup) | Moderate | Very High |

| Custom Lambda Filters | High (Manual coding) | High (15-25%) | Medium/High |

While metadata filtering is efficient, it isn't bulletproof. Sophisticated prompt injection attacks can sometimes manipulate the system into ignoring these filters. This is why security experts suggest moving beyond simple filtering toward a dedicated authorization layer. Tools like Cerbos is an open-source authorization service that allows teams to decouple access policies from their application code can reduce unauthorized exposure by up to 99.8% by applying a strict "query plan" before the vector store is even touched.

Redaction: The Last Line of Defense

Even with perfect row-level security, you might have a document that is legally accessible to a user but contains sensitive PII (Personally Identifiable Information) that shouldn't be sent to a third-party LLM provider. This is where redaction comes in. Redaction happens after retrieval but before the LLM receives the prompt.

The goal is to strip out names, social security numbers, or credit card details. Using frameworks like Microsoft Presidio or spaCy, you can implement Named Entity Recognition (NER) to automatically detect and mask sensitive entities. These tools can reach 95-98% accuracy in identifying PII. If the retriever pulls a paragraph about a client, the redaction layer swaps "John Doe" for "[PERSON_1]" and "555-0123" for "[PHONE_NUMBER]".

This "pre-LLM" scrub is vital because once data enters the LLM prompt, you lose control over it. If you are using a managed API, you are essentially handing your retrieved data to another company. Redaction ensures that even if the LLM is compromised or logs the prompt, no actual private data is leaked.

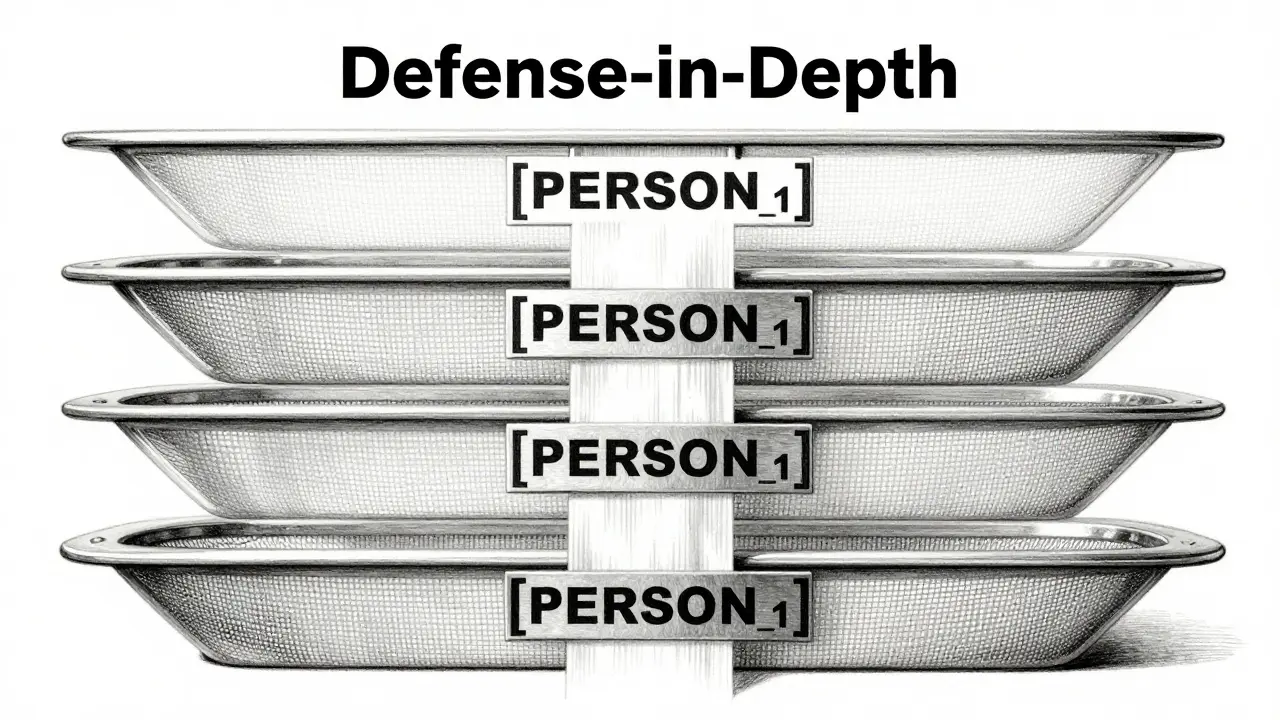

Building a Defense-in-Depth Architecture

If you want a truly secure RAG pipeline, you can't rely on a single trick. The Cloud Security Alliance recommends a four-layer "defense-in-depth" strategy to cover all bases:

- Layer 1: Anonymization. Mask PII before the data is even embedded into the vector database.

- Layer 2: Metadata Access Control. Use RLS or metadata filters to ensure users only retrieve chunks they are allowed to see.

- Layer 3: Query Validation. Scan the user's question for prompt injection attempts that try to bypass security filters.

- Layer 4: Output Filtering. Check the LLM's final response for any sensitive patterns that might have leaked through.

For organizations in highly regulated sectors, you might even consider Homomorphic Encryption. This allows the system to perform semantic searches on encrypted data without ever decrypting it. While it was previously too slow for real-world use, recent benchmarks show it can now be done with only a 12-18% performance overhead, making it a viable option for extreme privacy requirements.

Common Implementation Pitfalls

The biggest trap in RAG security is assuming that your vector database is a secure vault. Most are designed for speed and similarity, not for complex permissioning. One of the most frequent failure points-reported in over 70% of post-implementation reviews-is incomplete metadata tagging. If 20% of your documents are missing the "department" tag, those documents effectively become a security blind spot that any user can find.

Another mistake is ignoring the latency trade-off. Adding a custom Lambda function to filter results in Amazon Bedrock, for instance, can increase query latency by 15-25%. You have to decide where your bottleneck is: are you okay with a slightly slower response if it means you won't get a GDPR fine? Given that GDPR fines for AI-related breaches jumped 220% in 2023, the answer for most enterprises is a resounding yes.

What is the difference between RBAC and Row-Level Security in RAG?

Role-Based Access Control (RBAC) typically manages who can access the application or a general category of data (e.g., "Managers can access the HR tool"). Row-Level Security (RLS) is more granular; it controls which specific pieces of data (rows) a user can see within that tool (e.g., "Manager A can only see the records of employees in their specific department"). In RAG, RLS is essential because a single vector query can pull fragments from across the entire database.

Does metadata filtering protect against prompt injection?

Not entirely. While metadata filtering prevents the retriever from fetching unauthorized chunks, a clever user might use prompt injection to trick the LLM into ignoring the context or attempting to "hallucinate" private data it might have seen during its original training. This is why query validation and output filtering are necessary complementary layers.

How much does redaction affect the quality of LLM answers?

If done correctly, the impact is minimal. LLMs are very good at understanding that "[PERSON_1]" refers to a specific individual. As long as the consistency of the masking is maintained (i.e., the same person is always [PERSON_1] within a single conversation), the LLM can still reason through the data and provide accurate answers without ever knowing the person's real name.

Which vector databases support native RLS?

Very few provide true, "out-of-the-box" enterprise RLS. Most developers use a combination of metadata filtering (supported by Pinecone, Weaviate, and Milvus) and an external authorization service like Cerbos or Oso to enforce the rules before the query is sent to the database.

What is the performance cost of implementing these controls?

It varies by method. Simple metadata filtering adds negligible latency (2-5%). However, adding custom validation layers or using secure enclaves (like Intel SGX) can increase overhead by 15-60%. The trade-off is usually worth it to prevent catastrophic data leaks.

Next Steps for Implementation

If you're starting from scratch, don't try to build a custom security engine. Start by auditing your data and defining a strict metadata schema. Spend a few days collaborating with your legal and security teams to determine exactly who should see what. Once your tags are defined, implement metadata filtering as your first line of defense.

For those already in production, run a "leakage test." Try to ask your AI questions that it shouldn't be able to answer-like asking about executive salaries or private client IDs. If it answers, you have a gap. Your next move should be integrating a redaction framework like Presidio to mask PII before it leaves your environment.