Imagine asking an AI to generate an image of a "CEO" or a "surgeon." For many users, the result is predictably similar: a middle-aged man in a professional setting. Now, imagine asking for a "criminal" or a "low-wage worker," and the AI consistently produces images of people with darker skin. This isn't a glitch in the code; it's a mirror of the data the AI was fed. When we talk about dataset bias in multimodal generative AI, we're talking about how these systems absorb our societal prejudices and spit them back at us as "truth."

The real danger here is that multimodal systems, which handle text, images, and audio all at once, don't just repeat these biases; they often amplify them. If a model learns that a certain word is linked to a certain image, it creates a feedback loop that reinforces stereotypes far more effectively than a simple text-based chatbot ever could. To build AI that actually serves everyone, we have to understand where these biases hide and how to scrub them out.

The Root of the Problem: Where Bias Comes From

Most of the heavy lifting in generative AI is done by Large Multimodal Models (LMMs). These systems are trained on massive scrapes of the open web: forums, stock photo libraries, and digitized books. The problem is that the internet isn't a neutral place. It's a reflection of who has had the most access to technology and who holds the most power in the digital economy. This creates a massive gap in representation.

Socioeconomic factors play a huge role here. If the majority of training data comes from Anglocentric sources, the AI learns that those perspectives are the "default." This leads to a systemic issue where the voices and cultures of the Global South are either ignored or viewed through a skewed lens. Furthermore, because these models require trillions of data points to work, developers often relax their filtering standards just to get enough volume. In the rush for scale, quality and fairness often take a backseat.

Three Ways AI Gets Representation Wrong

Not all bias looks the same. Depending on how the data is skewed, the AI will fail in one of three specific ways:

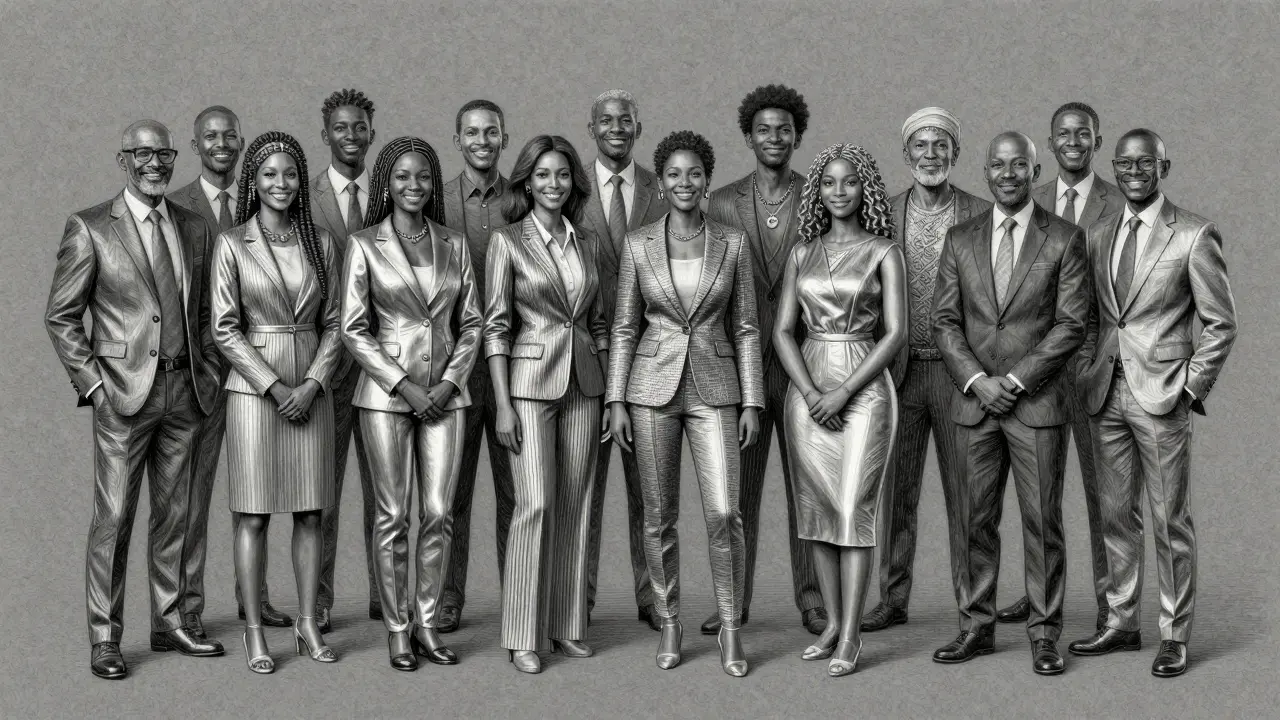

- Underrepresentation: This is the "invisibility" problem. Certain groups simply aren't there. For instance, you might find very few images of women in high-performing technical occupations, leading the AI to believe those roles are exclusively male.

- Misrepresentation: Here, the group is present, but the depiction is harmful. This happens when the AI relies on tropes or stereotypes, like depicting specific ethnic groups only in traditional clothing even when prompted for "modern" settings.

- Overrepresentation: This occurs when the AI defaults to a dominant group for everything, or conversely, over-associates a minority group with negative contexts, such as linking darker skin tones to poverty or crime.

We see this clearly in tools like Stable Diffusion, where empirical tests have shown a persistent lean toward these stereotypes, which suggests that the skew is built into the model's internal "worldview" rather than being an occasional fluke.

| Bias Type | Core Issue | Example Scenario | Resulting Output |
|---|---|---|---|
| Underrepresentation | Lack of data | "Female Software Engineer" | Rarely generates a woman |
| Misrepresentation | Stereotypical data | "Person from India" | Always shows traditional dress |
| Overrepresentation | Skewed dominance | "Professional person" | Almost always shows a white male |

Measuring the Damage: How We Detect Bias

You can't fix what you can't measure. In the past, researchers used a simple binary: was the bias intrinsic (inside the model) or extrinsic (how the user experienced it)? Today, we use a more sophisticated three-tier framework:

- Preuse Bias: Checking the training data before the model is even built.

- Intrinsic Bias: Analyzing the model's internal associations (e.g., how closely the word "doctor" is mapped to "man" in the vector space).

- Extrinsic Bias: Measuring the real-world impact of the outputs once the tool is in the wild.
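The intrinsic check in the list above can be sketched as a cosine-similarity comparison. This is a minimal illustration, not a standard audit: the three-dimensional vectors are invented for the example, and `association_gap` is a hypothetical helper name. A real audit would load embeddings from the model under test and use many target and attribute words.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def association_gap(embeddings, target, attr_a, attr_b):
    """Positive: target sits closer to attr_a. Negative: closer to attr_b."""
    t = embeddings[target]
    return cosine(t, embeddings[attr_a]) - cosine(t, embeddings[attr_b])

# Toy vectors made up for illustration only (assumption, not real model output).
TOY_EMBEDDINGS = {
    "doctor": [0.9, 0.3, 0.1],
    "nurse": [0.2, 0.9, 0.1],
    "man": [1.0, 0.1, 0.0],
    "woman": [0.1, 1.0, 0.0],
}

for word in ("doctor", "nurse"):
    gap = association_gap(TOY_EMBEDDINGS, word, "man", "woman")
    print(f"{word}: man-vs-woman gap = {gap:+.3f}")
```

A gap near zero would mean the occupation word sits about equally close to both attribute words; a large gap in either direction is a signal worth investigating, not proof of harm on its own.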

To get a full picture, developers use distributional metrics, which track how often different demographics appear in results. But frequency counts aren't everything. Expert teams also use embedding-based similarity checks to see if the AI's "mathematical understanding" of a group is skewed. Combining these quantitative measures with qualitative human reviews is the only way to catch subtle, contextual biases that a spreadsheet would miss.
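As a rough sketch of a distributional metric, the snippet below compares how often each group appears in a batch of outputs against a chosen reference distribution, using total variation distance. The labels, the 50/50 reference, and the function names are assumptions for illustration; in practice the labels would come from human annotators or a classifier, and choosing the reference is itself a judgment call.

```python
from collections import Counter

def distribution(labels):
    """Turn a list of group labels into a {group: share} mapping."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def total_variation(observed, reference):
    """Total variation distance: 0 means identical, 1 means no overlap."""
    groups = set(observed) | set(reference)
    return 0.5 * sum(
        abs(observed.get(g, 0.0) - reference.get(g, 0.0)) for g in groups
    )

# Hypothetical annotations for 20 images generated from one prompt.
labels = ["man"] * 17 + ["woman"] * 3
reference = {"man": 0.5, "woman": 0.5}  # assumed target, not a universal rule

observed = distribution(labels)
gap = total_variation(observed, reference)
print(observed)
print(f"total variation distance from reference: {gap:.2f}")
```

Tracking this number per prompt over time gives a simple way to spot regressions, though it says nothing about how each group is depicted.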

Fighting Back: Strategies for Mitigation

Cleaning the data is the first step, but it's rarely enough because you can't simply "delete" the internet. Instead, researchers are turning to smarter data augmentation. One popular method is the Synthetic Minority Over-sampling Technique (SMOTE), which balances the scales by creating synthetic examples of underrepresented groups, interpolating between existing minority samples in feature space. A complementary approach is to generate counterfactual data, meaning examples that explicitly defy stereotypes, so we can teach the AI that there are many ways a "leader" or a "caregiver" can look.
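To make the SMOTE idea concrete, here is a minimal pure-Python sketch of its core step: pick a minority sample, pick one of its nearest minority neighbours, and create a new point somewhere on the line between them. The function name, parameters, and toy data are assumptions for illustration. SMOTE works on numeric feature vectors, so applying it to images would presumably mean working in some embedding space, and a production pipeline would more likely use an established library implementation.

```python
import math
import random

def smote(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between a minority
    sample and one of its k nearest minority neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # Rank the other minority points by Euclidean distance to the base.
        others = [p for j, p in enumerate(minority) if j != i]
        others.sort(key=lambda p: math.dist(base, p))
        neighbour = rng.choice(others[:k])
        # Random position on the segment between base and neighbour.
        t = rng.random()
        synthetic.append([b + t * (n - b) for b, n in zip(base, neighbour)])
    return synthetic

# Toy 2-d feature vectors for an underrepresented class (made up).
minority = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
synthetic = smote(minority, n_new=6, k=2, seed=1)
for point in synthetic:
    print([round(x, 3) for x in point])
```

Because every synthetic point lies between two real minority samples, the method fills in the region the minority class already occupies; it cannot invent kinds of examples that are absent from the data altogether.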

Beyond the data, we can change the actual architecture of the AI. Standard Generative Adversarial Networks (GANs) often suffer from "mode-collapse," where they get stuck repeating a few safe, common patterns. To solve this, the CA-GAN architecture was developed. By using stacked Bidirectional LSTMs (three layers instead of one), it can capture much more complex patterns and avoid the trap of outputting the same biased result over and over.

These technical shifts have real-world wins. For example, using CA-GANs in medical imaging AI has significantly improved fairness for Black and female patients, ensuring that diagnostic tools are just as accurate for them as they are for the dominant groups in the training set.

The Research Gap: LLMs vs. LMMs

There is a worrying trend in AI development: we've spent far more time fixing bias in Large Language Models (LLMs) than in multimodal ones. Because text is easier to audit than images, the community has focused on chatbots. However, LMMs are more dangerous because they combine modalities. A biased text prompt combined with a biased image generator creates a "compounding effect" that makes the output significantly more stereotypical.

The industry is only now beginning to develop "detoxification" methods, such as further pretraining on gender-neutral datasets and adjusting the decoding strategies the AI uses to pick the final output. The goal is to move toward a system where fairness is a core requirement of the architecture, not a patch added at the end.

Putting it All Together: A Path to Fairer AI

Fixing bias isn't a one-and-done task; it's a continuous cycle of monitoring and adjustment. To truly move the needle, organizations need to stop relying on single-metric evaluations. A tool that looks "fair" on a bar chart might still be producing harmful stereotypes in subtle, contextual ways.
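A small sketch shows how a single aggregate number can hide a problem. The records below are invented for illustration: overall, women appear in half of the outputs, yet each prompt on its own is heavily skewed. The prompts, labels, and helper name are all assumptions.

```python
from collections import Counter, defaultdict

def share(labels, group):
    """Fraction of labels equal to the given group."""
    return Counter(labels)[group] / len(labels)

# Hypothetical (prompt, annotated group) pairs for 20 generated images.
records = (
    [("CEO", "man")] * 9 + [("CEO", "woman")] * 1
    + [("nurse", "man")] * 1 + [("nurse", "woman")] * 9
)

# Single aggregate metric: looks perfectly balanced.
overall = share([group for _, group in records], "woman")

# Same data, broken down by prompt: the stereotype is obvious.
by_prompt = defaultdict(list)
for prompt, group in records:
    by_prompt[prompt].append(group)
per_prompt = {p: share(groups, "woman") for p, groups in by_prompt.items()}

print(f"overall share of women: {overall:.2f}")
for prompt, value in per_prompt.items():
    print(f"{prompt}: share of women = {value:.2f}")
```

Breaking results down by prompt, context, or intersecting attributes is one way to catch what the aggregate misses; human review is still needed for how each group is portrayed.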

The future of multimodal AI depends on our ability to curate datasets that actually reflect the global population, not just the most vocal parts of the internet. When we combine advanced architectures like CA-GANs with a commitment to diverse data, we stop the AI from being a mirror of our worst habits and start making it a tool for an equitable future.

What is the difference between bias and fairness in AI?

Fairness is about the outcome: whether the AI generates different demographic groups with equal probability. Bias is the systematic error in the process: a non-random deviation from the ground truth that causes the AI to favor one group over another based on skewed data.

Why are multimodal models more biased than text-only models?

Multimodal models combine different types of data, such as text and images. This allows them to create associations between modalities (e.g., linking a specific word to a specific visual stereotype), which can amplify biases that might be less obvious in a text-only environment.

How does SMOTE help reduce dataset bias?

SMOTE (Synthetic Minority Over-sampling Technique) creates new, synthetic data points for underrepresented groups. By filling in the gaps in the training data, it prevents the model from ignoring minority classes or treating them as anomalies.

What is "mode-collapse" in GANs and how does it relate to bias?

Mode-collapse happens when a generator finds a few patterns that "trick" the discriminator and simply repeats them. In terms of bias, this means the AI keeps producing the most common, stereotypical version of an image because it's the "safest" bet, ignoring the diversity present in the actual training data.

Can AI bias be completely removed?

Completely removing bias is nearly impossible because data is a reflection of human society, which is inherently biased. However, we can significantly mitigate it through curated datasets, architectural improvements, and continuous monitoring to ensure the AI doesn't amplify these biases.