Ever feel like your AI is just guessing? You ask a complex question, and the model either rambles for five paragraphs without answering or gives you a confident but completely wrong answer. This happens because Large Language Models (LLMs) are essentially pattern-completion machines. Without a map, they often take the path of least resistance, skipping crucial logic steps or hallucinating details to fill gaps in their reasoning. The fix isn't necessarily a bigger model, but a better set of guardrails.

To get a model to actually "think" through a problem, you need to move beyond simple questions. Structured prompting is the process of organizing inputs and outputs into a systematic framework that forces the model to follow a specific logical path. Instead of hoping the AI finds the right answer, you design a pipeline that makes the right answer the only logical outcome. Whether you are building a billing automation tool or a complex research agent, constraining the reasoning steps is the only way to ensure consistent factuality.

The Foundation: Chain-of-Thought and Logical Steps

The journey toward structured reasoning started with Chain-of-Thought (CoT) is a prompting technique that encourages models to generate intermediate reasoning steps before arriving at a final answer. Think of it as asking a student to "show their work" on a math test. When a model is forced to articulate each step, it is far less likely to make a leap in logic that leads to a wrong conclusion.

The impact of this approach is massive. In early research, a 540-billion-parameter model given just eight a few-shot examples of how to break down a problem achieved state-of-the-art accuracy on the GSM8K math benchmark. It actually beat fine-tuned versions of GPT-3. The lesson here is simple: the ability to reason is often already inside the model; it just needs the right structural trigger to activate it.

Building a Constraint-Based Framework

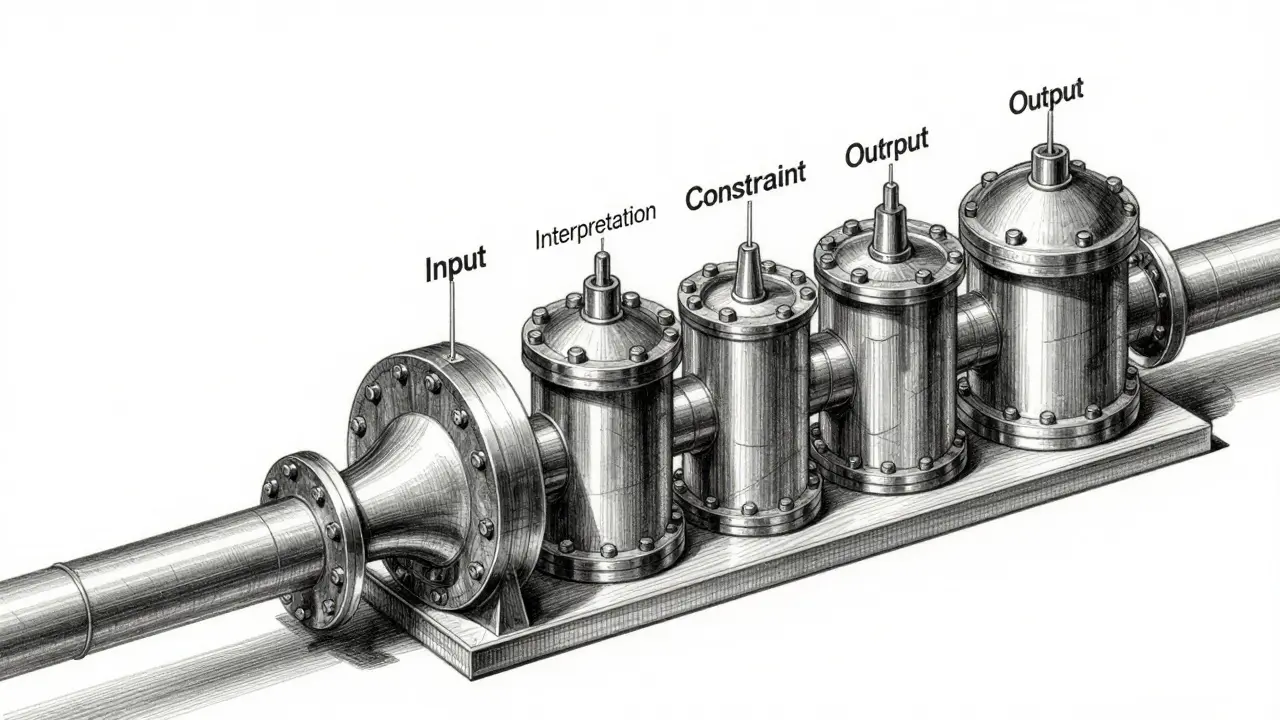

While CoT is a great start, it can still be too loose for professional workflows. To get real reliability, you need a rigid interaction pattern. A highly effective framework used by practitioners follows a four-stage flow: Input → Interpretation → Constraint → Output.

- Input: The raw data or user request.

- Interpretation: The model reframes the task in its own words to ensure it actually understands the goal.

- Constraint: You set hard boundaries (e.g., "do not use external tools," "limit response to 3 bullet points," "only use the provided text").

- Output: The final, actionable result.

For high-stakes environments-like legal compliance or financial billing-you can extend this by adding a Reduction step and a Validation phase. Reduction pushes the model to provide the minimum viable useful result, stripping away the "AI fluff" that often hides errors. Validation then acts as a final check: did the model stay within the constraints? If the validation fails, the loop restarts. This turns a creative writing tool into a precise reasoning engine.

| Method | Core Mechanism | Best For | Risk |

|---|---|---|---|

| Zero-Shot | Direct Question | Simple facts | High Hallucination |

| Chain-of-Thought | Intermediate Steps | Math & Logic | Verbosity |

| Structured Framework | Input-Interpretation-Constraint | B2B Workflows | Setup Complexity |

| Graph-Guided | Entity Relationship Mapping | Complex Data Sets | Token Consumption |

Advanced Strategies for Complex Data

Sometimes, the problem isn't just the steps, but how the model perceives the information. Natural language is messy; the same entity can be called three different things in one document. To fix this, the Structure Guided Prompt is a framework that converts unstructured text into a graph, instructing the LLM to navigate that graph to find answers.

By turning a wall of text into a set of nodes and edges, you remove the linguistic ambiguity. The model isn't just reading; it's navigating a map. This is incredibly powerful for zero-shot settings where you can't provide a thousand examples. It forces the LLM to recognize a relationship (e.g., "Company A [owns] Company B") regardless of how the sentence is phrased.

Similarly, for those working across different languages, Structured-of-Thought (SoT) handles multilingual reasoning by transforming language-specific data into language-agnostic structured representations. This prevents the model from losing the "logic thread" when translating between, say, Japanese and English, ensuring the underlying reasoning pathway remains the same.

Collaborative Reasoning: The Planner and the Follower

You don't have to rely on a single model to do all the heavy lifting. The DisCIPL framework (developed at MIT) introduces a hierarchical approach. In this setup, a large, highly capable "Planner LM" (like GPT-4o) brainstorms the structural plan and constraints. Then, a smaller, faster "Follower LM" (like Llama-3.2-1B) fills in the actual tokens.

Why do this? It’s efficient. The big model ensures the logic is sound and the constraints are met, while the small model handles the grunt work of generation. This is perfect for generating constrained outputs like budget-conscious grocery lists or tight travel itineraries where the layout must be perfect, but the individual words are simple.

Practical Tips for Implementation

If you're ready to implement structured prompting today, forget about writing longer prompts. Instead, focus on formatting and delimiters. Use XML tags (like <context> and <constraint>) to separate different parts of your prompt. Models like Claude are particularly sensitive to XML, and it helps the model distinguish between your instructions and the data it needs to process.

Avoid the common trap of "overthinking" vs "underthinking." If your model is skipping steps, use few-shot prompting. Provide two or three perfect examples of a reasoning chain. If it's being too verbose, define what "done" looks like. A great rule of thumb: a technically correct answer is useless if it requires a human to spend ten minutes cleaning up the formatting. Design for immediate usability.

What is the difference between Chain-of-Thought and Structured Prompting?

Chain-of-Thought is a specific technique that asks the model to explain its steps. Structured Prompting is a broader architectural approach that includes CoT but adds rigorous constraints, interpretation phases, and defined output formats to ensure the result is actionable and consistent.

Do I need to fine-tune my model to use these techniques?

No. All the methods mentioned-including CoT, SoT, and the Structure Guided Prompt-are training-free. They work by changing how you communicate with the existing model (in-context learning), making them immediately applicable to any LLM.

Why is XML preferred over JSON for reasoning prompts?

XML is often easier for models to parse as structural delimiters without confusing the content with the syntax. It provides a clear hierarchy that helps the model separate instructions from input data, though JSON is still better for the final output if that data is being fed into another software system.

How does the DisCIPL framework improve efficiency?

It splits the cognitive load. A large model handles the complex planning and constraint enforcement, while a tiny model executes the generation. This reduces the computational cost of using a giant model for every single token while maintaining the high quality of a structured plan.

How can I stop my model from hallucinating in a multi-step process?

Implement a validation step. After the model generates its reasoning and answer, ask it (or a second model) to verify if the answer is supported by the input text and if all constraints were followed. Forcing the model to critique its own work often reveals gaps in logic.

Next Steps and Troubleshooting

If you are a developer building an app, start by implementing XML delimiters and a simple Interpretation step. If you notice the model is still drifting, move to a few-shot approach with 3-5 high-quality exemplars. For enterprise users dealing with massive datasets, look into graph-based prompting to help the AI navigate complex entity relationships without getting lost.

If your model starts "overthinking" (providing way too much detail), try the Reduction strategy. Specifically instruct the model to "provide the most concise version of the truth that still satisfies all constraints." This shifts the model's goal from completeness to utility.