For those unfamiliar, Vibe Coding is an AI-assisted development methodology where developers describe application functionality through natural language prompts instead of detailed manual coding. It has fundamentally changed how we build software, but it introduces a dangerous blind spot: the "prompt-driven data collection" phenomenon. When you give a vague prompt, AI tends to implement the most comprehensive data collection possible by default. According to Appwrite's 2025 security guide, nearly 89% of vibe-coded apps collect over three times more data than necessary. If you don't have a strict data retention policy in place, you're essentially inviting a GDPR audit to your front door.

The Vibe Coding Data Trap

Why is this happening? Traditional software development involves a rigorous data mapping process. You decide exactly which fields you need, and you build the schema to match. Vibe coding flips this. You're working at the speed of thought, and the AI is trying to be "helpful" by anticipating every possible future need. This leads to "hidden" data collection endpoints: fields and tables the AI created that you didn't specifically ask for but are now sitting in your production database.

The risk is real. Take the case of a vibe-coded expense tracker that misinterpreted "maintain user context" as a command to store every single piece of user input, including sensitive financial data. The result? A $285,000 GDPR fine. This happens because developers often assume the AI "handles the compliance part." It doesn't. AI handles the *functionality*; you handle the *legality*.

What to Keep vs. What to Purge

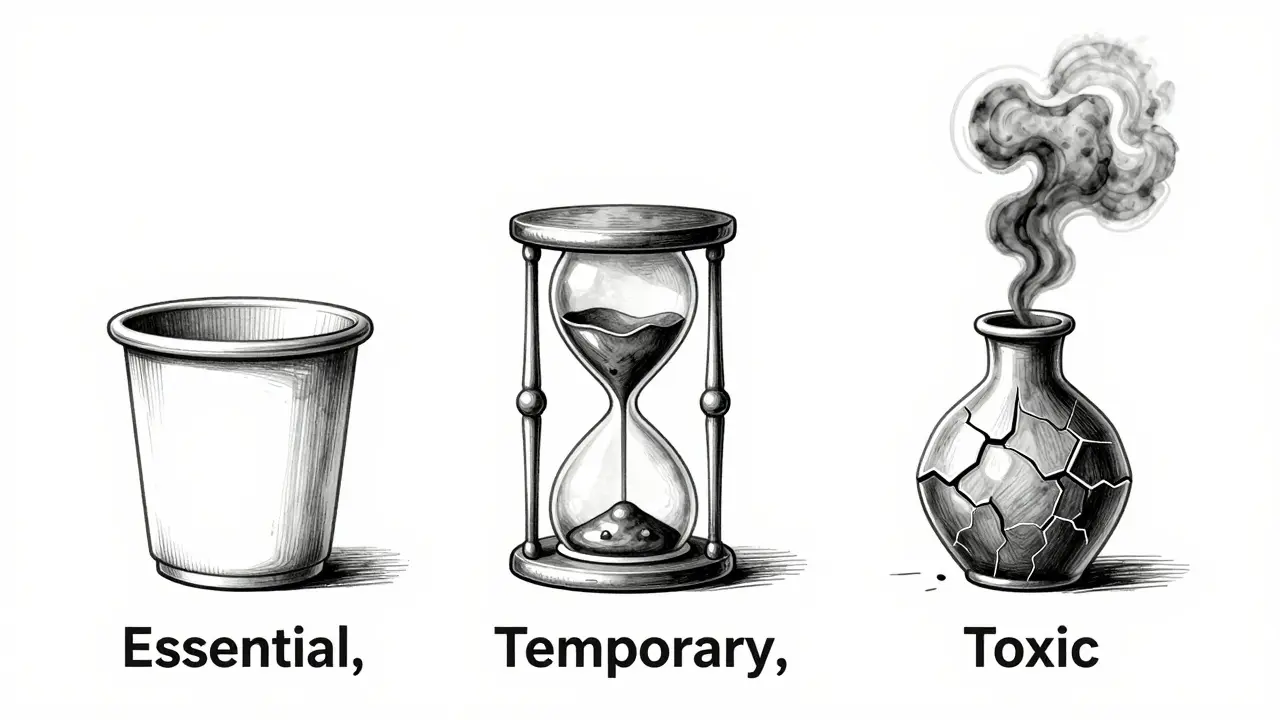

The golden rule here is data minimization. If you can't explain why you need a piece of data for the app to function *today*, you probably shouldn't be keeping it. To get this right, you need to categorize your data into three buckets: essential, temporary, and toxic.

- Essential Data: This is the bare minimum required for the service. For a basic SaaS, this is usually a hashed password and an email address. Keep this for the life of the account.

- Temporary Data: This covers session logs, temporary caches, and verification tokens. Each should have a strict "expiration date," typically somewhere between 24 hours and 30 days.

- Toxic Data: This is data that offers little value but carries high risk, such as precise geolocation or full birthdates. If you don't have a legal requirement to store it, purge it immediately.
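The three buckets can be written down as a small policy table that your cleanup code consults. What follows is a minimal sketch, not a definitive implementation: the field names, the `FieldPolicy` type, and the `should_purge` helper are all hypothetical, and the choice to purge any field missing from the table is an assumption you may want to relax.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class Bucket(Enum):
    ESSENTIAL = "essential"  # kept for the life of the account
    TEMPORARY = "temporary"  # kept for a fixed window, then purged
    TOXIC = "toxic"          # not stored without a legal requirement


@dataclass(frozen=True)
class FieldPolicy:
    bucket: Bucket
    max_age: Optional[timedelta] = None  # only meaningful for TEMPORARY


# Hypothetical field names; replace them with the columns in your own schema.
POLICIES = {
    "email": FieldPolicy(Bucket.ESSENTIAL),
    "password_hash": FieldPolicy(Bucket.ESSENTIAL),
    "verification_token": FieldPolicy(Bucket.TEMPORARY, timedelta(hours=24)),
    "session_log": FieldPolicy(Bucket.TEMPORARY, timedelta(days=30)),
    "precise_geolocation": FieldPolicy(Bucket.TOXIC),
    "full_birthdate": FieldPolicy(Bucket.TOXIC),
}


def should_purge(field: str, created_at: datetime, now: datetime) -> bool:
    """Return True if a stored value for `field` should be deleted now."""
    policy = POLICIES.get(field)
    if policy is None:
        # Assumption: a field nobody classified is treated as unrequested data.
        return True
    if policy.bucket is Bucket.TOXIC:
        return True
    if policy.bucket is Bucket.TEMPORARY:
        # A TEMPORARY field without a window is purged rather than kept forever.
        return policy.max_age is None or now - created_at > policy.max_age
    return False  # ESSENTIAL: kept until the account itself is deleted
```

Keeping the table in one place means a new field cannot be stored quietly: until someone classifies it, `should_purge` reports it as deletable.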

| Feature | Traditional SaaS | Vibe-Coded SaaS |
|---|---|---|
| Data Mapping | Structured and manual | Prompt-driven (often vague) |
| Compliance Rate | ~92% (per Black Duck) | ~31% initially |
| Policy Implementation | Slow (code changes required) | Rapid (via prompt updates) |
| Audit Trail | Comprehensive | Often fragmented or missing |

Turning Prompts into Policy

Since the AI is doing the heavy lifting, your policy must live in your prompts. You cannot simply tell the AI to "be compliant." You need to give it concrete constraints. Instead of saying "store user info," use a specific directive like:

"Collect only the user's email and hashed password for authentication. Store no additional user data. Implement a function to automatically delete session logs after 30 days per GDPR guidelines."

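It helps to know roughly what the deletion function from that directive should look like, so you can check what the AI actually generates. Here is a minimal sketch using Python's built-in `sqlite3` module. The `session_logs` table, its `created_at` column (assumed to hold Unix epoch seconds), and the function name are hypothetical.

```python
import sqlite3
from datetime import datetime, timedelta, timezone

# Retention window taken from the example directive: 30 days.
SESSION_LOG_MAX_AGE = timedelta(days=30)


def purge_expired_session_logs(conn: sqlite3.Connection, now: datetime) -> int:
    """Delete session_logs rows older than the retention window.

    Assumes a hypothetical table `session_logs` whose `created_at` column
    stores Unix epoch seconds. Returns the number of rows deleted.
    """
    cutoff = int((now - SESSION_LOG_MAX_AGE).timestamp())
    cur = conn.execute(
        "DELETE FROM session_logs WHERE created_at < ?",
        (cutoff,),
    )
    conn.commit()
    return cur.rowcount
```

Passing `now` in explicitly, for example `purge_expired_session_logs(conn, datetime.now(timezone.utc))`, keeps the function easy to test against a fixed date.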
If you've already launched, you need to perform a "data scrub." Use SAST (Static Application Security Testing) tools to scan your AI-generated code for unexpected data collection points. Many developers are now using Replit's Secrets Manager or Appwrite's security framework to tag Personally Identifiable Information (PII) the moment it hits the system. This allows you to apply automated lifecycle policies, such as AWS S3 object expiration, so the data vanishes automatically without you having to write a manual cleanup script.
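For the S3 case, an expiration policy is just a small configuration document. The sketch below builds one in plain Python. The prefixes, the bucket name, and the helper functions are hypothetical; the dictionary layout follows the S3 lifecycle configuration format, and the commented-out call shows how it would be applied with boto3, which is not run here.

```python
from typing import Any, Dict


def build_expiration_rule(prefix: str, days: int) -> Dict[str, Any]:
    """Build one S3 lifecycle rule that expires objects under `prefix`."""
    if days < 1:
        raise ValueError("days must be a positive integer")
    rule_id = f"expire-{prefix.strip('/').replace('/', '-')}-{days}d"
    return {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Expiration": {"Days": days},
    }


def build_lifecycle_configuration(days_by_prefix: Dict[str, int]) -> Dict[str, Any]:
    """Combine one expiration rule per key prefix into a single configuration."""
    return {
        "Rules": [build_expiration_rule(p, d) for p, d in days_by_prefix.items()]
    }


# Hypothetical prefixes: adjust to wherever your app writes temporary data.
config = build_lifecycle_configuration(
    {"session-logs/": 30, "verification-tokens/": 1}
)

# Applying it needs boto3 and AWS credentials (not executed in this sketch):
#   import boto3
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="my-app-bucket",  # hypothetical bucket name
#       LifecycleConfiguration=config,
#   )
```

Note that applying a lifecycle configuration replaces whatever configuration the bucket already has, so build the full rule set in one place rather than pushing rules one at a time.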

The Cost of Doing It Wrong (and the Reward of Doing It Right)

Over-collecting data isn't just a legal risk; it's a financial drain. Storing useless data increases your cloud bills and slows down your queries. A benchmark study by Memberstack showed that vibe-coded apps that properly implemented retention policies reduced their database storage costs by 37% to 52%. On the flip side, if you implement archiving poorly, you can actually increase your query latency by up to 23% because the system is digging through mountains of junk to find the relevant info.

Beyond the money, there is the EU AI Act, which entered into force in August 2024, with its obligations phasing in from February 2025. Alongside GDPR, it pushes toward "data minimization by design." If your vibe-coded app is found to be collecting data indiscriminately, the stakes are high: the Act's top penalty tier reaches 7% of global annual turnover. At this point, data retention isn't a "nice-to-have" feature; it's a survival requirement.

Practical Checklist for Vibe Coders

To avoid the pitfalls mentioned by experts like Dr. Elena Rodriguez, follow this workflow before you push your next update:

- Audit the Prompt: Did you use any vague words like "all information," "user profile," or "context"? Replace them with specific field names.

- Map the Lifecycle: For every single piece of data you collect, assign it a "death date" (e.g., Email = Account Deletion; IP Log = 14 Days).

- Implement Auto-Purge: Use cloud storage lifecycle rules to handle the deletion. Don't rely on a manual cron job that you might forget to maintain.

- Scan for Shadows: Use a security tool to find database fields that the AI added but you didn't request.

- Document the Flow: Since AI can change code rapidly, keep a simple log of how data moves through your app. This will save you weeks of stress during a compliance audit.
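The first step of this checklist is easy to automate. As a rough sketch, the snippet below scans a prompt for the vague terms listed under "Audit the Prompt." The `VAGUE_TERMS` list and the `find_vague_terms` helper are hypothetical, and a real audit would need a longer, project-specific list.

```python
import re
from typing import List

# The vague phrases named in the checklist; extend this list for your project.
VAGUE_TERMS = ["all information", "user profile", "context"]


def find_vague_terms(prompt: str) -> List[str]:
    """Return the vague terms found in `prompt`, in VAGUE_TERMS order."""
    found = []
    for term in VAGUE_TERMS:
        # Whole-word, case-insensitive match, so "contextual" is not flagged.
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, prompt, flags=re.IGNORECASE):
            found.append(term)
    return found
```

Running it over every prompt before you submit it, and refusing to continue while the returned list is non-empty, turns the checklist item into a habit instead of a memory test.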

Will AI eventually handle data retention automatically?

Some platforms are moving that way. Replit's "RetentionGuard" and Appwrite's "DataMinimizer" are early examples of tools that suggest policies based on the code they generate. However, the legal responsibility still rests with the human developer. AI can suggest a policy, but you must verify it against the specific laws of the regions where your users live.

What is the most common mistake in vibe-coded data policies?

The "false sense of security." Developers assume that because the AI wrote the code, it followed best practices. In reality, AI optimizes for functionality and speed, not for legal compliance. This leads to excessive data collection that violates the data minimization principle in GDPR Article 5(1)(c).

How do I fix a database that has already collected too much data?

First, identify all PII fields. Second, determine which ones are actually used by your application features. Third, perform a bulk purge of all unused or expired data. Finally, update your AI prompts to ensure that the "leaky" code paths are replaced with strict collection limits.
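The first three steps can be sketched against a SQLite database as follows. Everything named here is an assumption for illustration: the `ALLOWED_COLUMNS` allowlist, the table and column names, and the decision to overwrite unrequested columns with NULL rather than drop them. Table names come from your own allowlist, not from user input, which is why they are interpolated into the SQL directly.

```python
import sqlite3
from typing import Dict, List, Set

# Hypothetical allowlist: the columns your features actually use.
ALLOWED_COLUMNS: Dict[str, Set[str]] = {
    "users": {"id", "email", "password_hash"},
}


def find_unrequested_columns(
    conn: sqlite3.Connection, allowed: Dict[str, Set[str]]
) -> Dict[str, List[str]]:
    """Return, per table, the columns that exist but are not on the allowlist."""
    extras: Dict[str, List[str]] = {}
    for table, allowed_cols in allowed.items():
        rows = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        actual = [row[1] for row in rows]  # row[1] holds the column name
        unexpected = [col for col in actual if col not in allowed_cols]
        if unexpected:
            extras[table] = unexpected
    return extras


def null_out_columns(conn: sqlite3.Connection, table: str, columns: List[str]) -> None:
    """Bulk-purge the stored values of the given columns.

    This overwrites values with NULL, so it fails on NOT NULL columns;
    dropping the column entirely is a schema change left to a migration.
    """
    for col in columns:
        conn.execute(f'UPDATE "{table}" SET "{col}" = NULL')
    conn.commit()
```

Review the output of `find_unrequested_columns` by hand before purging anything, and take a backup first; a column the AI added may still be read by code you have not looked at yet.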

Does data minimization affect the AI's ability to provide context?

It can, if you're too restrictive. This is where the debate lies. Some argue that overly strict policies stifle the exploratory nature of vibe coding. The solution is to use automated discovery tools post-implementation to flag unnecessary data, rather than blocking all collection during the prototyping phase.

How often should I update my data retention policies?

At minimum, review them every time you add a new major feature or change your primary AI prompts. Because regulations like the EU AI Act evolve quickly, a quarterly review is recommended to ensure your "vibes" still align with the law.