Key Takeaways

- Vague prompts lead to hallucinations; structured patterns lead to passing tests.

- "Recipe" and "Context and Instruction" patterns are the most efficient for reducing AI back-and-forth.

- Defining pre-conditions and post-conditions is more effective than long Chain-of-Thought sequences.

- Security must be an explicit part of the prompt, not an afterthought.

The Core Problem: Why Your Prompts Fail

We've all been there: you ask an AI to write a unit test, and it generates a beautiful piece of code that doesn't actually test the edge cases, or worse, it hallucinates a library method that doesn't exist. This happens because of a gap in prompt design. Most developers rely on iterative, conversational prompting, sending five or six messages to "fix" the code. While this works, it's a massive time sink, and every extra round of edits is another chance to introduce bugs.

Research using datasets like HumanEval+ and MBPP+ shows that the difference between a failing prompt and a passing one often comes down to a few specific constraints. Instead of asking the model to "be better," you need to provide a framework that restricts the model's creative freedom and forces it to adhere to technical specifications.

Patterns for Generating Bulletproof Unit Tests

Generating a unit test isn't just about calling a function and checking the result. To get tests that actually find bugs, you need to move beyond simple "Creation" prompts. A simple request like "write a test for this function" usually results in a "happy path" test that passes even if the code is broken.

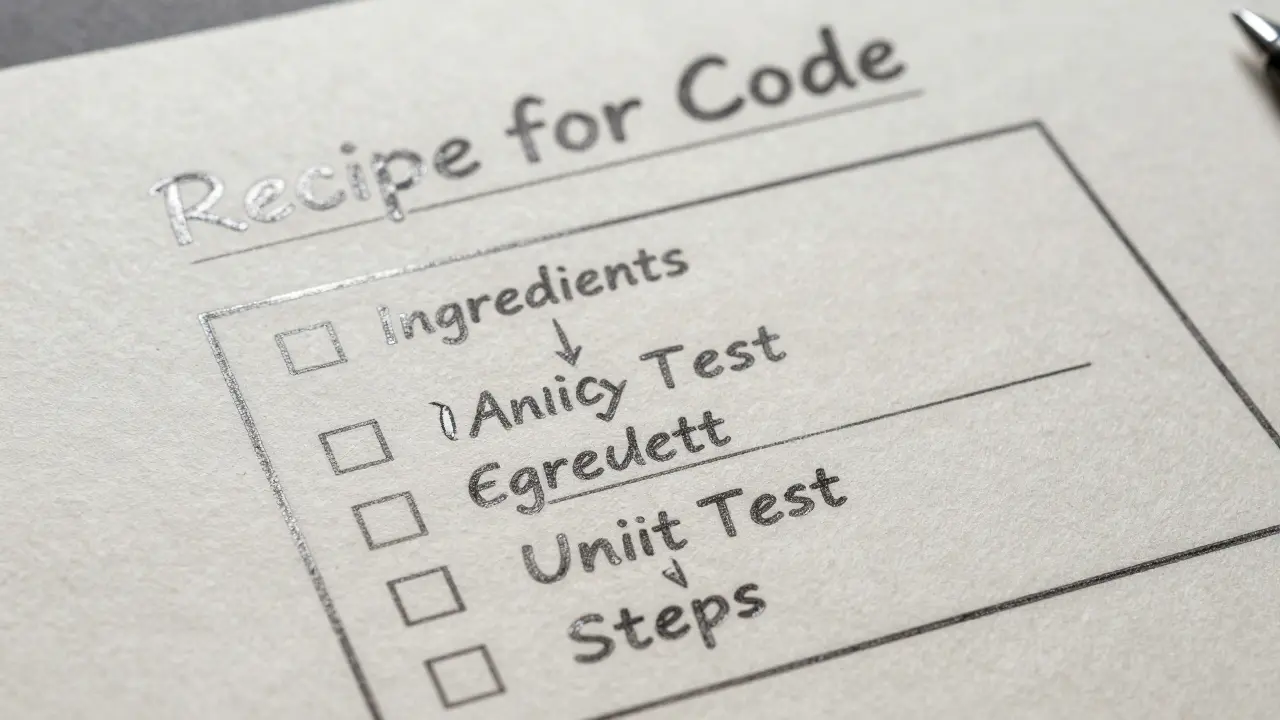

To fix this, apply the Recipe Pattern. Think of a recipe as a structured set of ingredients (inputs, mock data, dependencies) and step-by-step instructions (setup, action, assertion). Instead of a paragraph of text, give the LLM a checklist:

- Input Specifications: Define exactly what the input data looks like (e.g., "An array of integers where some may be negative").

- Pre-conditions: What must be true before the test runs? (e.g., "The database connection must be mocked").

- Post-conditions: What is the exact expected outcome? (e.g., "The function should throw a ValidationError if the input is null").

- Edge Case Requirements: Explicitly list the "weird" scenarios, such as empty strings, maximum integer values, or network timeouts.

By shifting from a conversational request to a structured recipe, you reduce the number of iterations needed to get a passing test suite. You aren't just asking for code; you're defining the boundary of correctness.
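As a minimal sketch of what such a checklist might look like in practice, the snippet below assembles the four recipe items into one prompt string. The `RecipePrompt` class, its field names, and the `sum_positive` target are hypothetical names chosen for illustration; they are not part of any library.

```python
from dataclasses import dataclass, field


@dataclass
class RecipePrompt:
    """Hypothetical container for the four checklist items of a test recipe."""

    target: str
    input_spec: str
    preconditions: list[str] = field(default_factory=list)
    postconditions: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the recipe as a plain-text checklist for the model."""

        def bullets(items: list[str]) -> str:
            # Fall back to an explicit marker so no section is silently empty.
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        return (
            f"Write unit tests for `{self.target}`.\n\n"
            f"Input specifications:\n- {self.input_spec}\n\n"
            f"Pre-conditions:\n{bullets(self.preconditions)}\n\n"
            f"Post-conditions:\n{bullets(self.postconditions)}\n\n"
            f"Edge cases to cover:\n{bullets(self.edge_cases)}\n"
        )


recipe = RecipePrompt(
    target="sum_positive(values)",  # hypothetical function under test
    input_spec="An array of integers where some may be negative",
    preconditions=["The database connection must be mocked"],
    postconditions=["The function should throw a ValidationError if the input is null"],
    edge_cases=["empty list", "maximum integer values"],
)
print(recipe.to_prompt())
```

Keeping the recipe as data rather than free text makes it easy to spot a missing section before the prompt is ever sent.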

Refactoring Without Breaking Everything

Refactoring is where LLMs can be most dangerous. If you ask a model to "clean up this code," it might change a variable name but accidentally alter the logic, introducing a regression that doesn't surface until production. The goal of a refactor prompt is to maintain behavioral equivalence.

The most effective approach here is the Context and Instruction Pattern. You must provide the model with the full context of the surrounding architecture. If you're refactoring a method in a SaaS backend, the LLM needs to know if that method is called by a public API or an internal cron job.

When prompting for a refactor, use these specific constraints:

- The "No-Change" Rule: Explicitly tell the model: "Do not change the external API signature or the observable behavior of the function."

- Specific Goal: Instead of "make it better," use terms like "reduce cyclomatic complexity," "convert this nested loop into a map/filter chain," or "implement the Strategy Pattern to remove these if-else blocks."

- Implementation Details: Mention the version of the language you are using. For example, if you're using Python 3.12, tell the model to use the latest type-hinting syntax.
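One way to check the "No-Change" rule after the model returns a refactor is to run the original and refactored versions side by side on randomized inputs. The sketch below assumes a made-up function, `total_even_squares`, and a hand-written refactor standing in for the model's output; it illustrates the idea and is not a complete equivalence proof.

```python
import random


def total_even_squares_original(rows: list[list[int]]) -> int:
    """Legacy version: nested loops with an accumulator."""
    total = 0
    for row in rows:
        for value in row:
            if value % 2 == 0:
                total += value * value
    return total


def total_even_squares_refactored(rows: list[list[int]]) -> int:
    """Refactored version: same signature, loops flattened into one expression."""
    return sum(v * v for row in rows for v in row if v % 2 == 0)


def check_equivalence(trials: int = 200, seed: int = 0) -> bool:
    """Compare both versions on random inputs, including empty rows and lists."""
    rng = random.Random(seed)
    for _ in range(trials):
        rows = [
            [rng.randint(-50, 50) for _ in range(rng.randint(0, 5))]
            for _ in range(rng.randint(0, 5))
        ]
        if total_even_squares_original(rows) != total_even_squares_refactored(rows):
            return False
    return True


print(check_equivalence())
```

A check like this only samples the input space, so it complements the existing test suite rather than replacing it.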

Comparing Prompting Strategies

Not all prompting techniques are created equal. Some are great for quick prototypes, while others are necessary for production-grade software engineering.

| Pattern | Best For | Pros | Cons |
|---|---|---|---|
| Creation/Generation | Boilerplate, simple utilities | Fast, low effort | High failure rate for complex logic |
| Recipe | Unit Tests, Edge Cases | High reliability, consistent outputs | Requires more upfront effort to write |
| Context & Instruction | Refactoring, Architecture changes | Prevents regressions, maintains style | Can hit token limits with large files |
| Problem-Solving | Debugging, Brainstorming | Collaborative, exploratory | Often requires many iterations |

The Security Angle: Prompting for Safe Code

A major pitfall in AI-assisted coding is the "security blind spot." LLMs are trained on vast amounts of code, including code that is insecure. If you don't explicitly prompt for security, the model might give you a working solution that is wide open to SQL Injection or Cross-Site Scripting (XSS).

To mitigate this, integrate security constraints directly into your prompt design. Instead of asking for a "database query function," ask for a "secure database query function that uses parameterized queries to prevent injection attacks." By adding the security requirement as a primary attribute of the task, you force the model to prioritize security-centric patterns over the simplest (and often most insecure) pattern it found in its training data.
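For reference, this is roughly the shape of code a security-aware prompt should produce. The sketch uses Python's standard `sqlite3` module; the `users` table and the `find_user_by_name` helper are assumptions made up for this example.

```python
import sqlite3


def find_user_by_name(conn: sqlite3.Connection, name: str) -> list[tuple]:
    """Look up users by exact name using a parameterized query."""
    # The "?" placeholder makes the driver bind `name` as data, never as SQL text.
    cursor = conn.execute("SELECT id, name FROM users WHERE name = ?", (name,))
    return cursor.fetchall()


# In-memory database with a hypothetical schema, used only for the demo.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

rows = find_user_by_name(conn, "alice")
# A classic injection payload is treated as a literal name and matches nothing.
injected = find_user_by_name(conn, "alice' OR '1'='1")
print(rows, injected)
```

If the model instead returns a query built with string concatenation or an f-string, that is a signal the security constraint in the prompt was too weak or was missing.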

Moving Beyond Chain-of-Thought

For a long time, the gold standard was Chain-of-Thought (CoT) prompting: telling the AI to "think step-by-step." While this is helpful for math problems, it's often inefficient for code. Long CoT sequences increase token costs, slow down inference, and can actually lead the model to hallucinate a complex solution when a simple one would suffice.

The shift is now toward single-shot optimized prompts. Instead of a long conversation, you create one highly detailed prompt that includes: the method signature, the docstring, the I/O specifications, and a concrete example of a passing test case. This approach focuses on providing the model with a high-density set of constraints, which results in a higher "first-pass" success rate.
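A rough sketch of assembling such a prompt is shown below. It pulls the signature and docstring from a stub with the standard `inspect` module and appends the I/O specification and one example test. The `clamp` function, the `build_single_shot_prompt` helper, and the section labels are illustrative assumptions, not a prescribed format.

```python
import inspect
from typing import Callable


def clamp(value: int, low: int, high: int) -> int:
    """Return value limited to the inclusive range [low, high]."""
    return max(low, min(value, high))


def build_single_shot_prompt(func: Callable, io_spec: str, example_test: str) -> str:
    """Combine signature, docstring, I/O spec, and one passing test into a prompt."""
    signature = f"def {func.__name__}{inspect.signature(func)}:"
    docstring = inspect.getdoc(func) or "(no docstring provided)"
    sections = [
        "Implement the function below. Return only code.",
        f"Signature:\n{signature}",
        f"Docstring:\n{docstring}",
        f"I/O specification:\n{io_spec}",
        f"Example of a passing test:\n{example_test}",
    ]
    return "\n\n".join(sections)


prompt = build_single_shot_prompt(
    clamp,
    io_spec="Inputs are ints with low <= high; output is an int in [low, high].",
    example_test="assert clamp(15, 0, 10) == 10",
)
print(prompt)
```

Deriving the signature and docstring from code instead of retyping them keeps the prompt in sync with the source it describes.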

Does the specific LLM change which prompt patterns I should use?

Generally, no. While a model like Llama 3.3 70B might be more concise than GPT-4o, the underlying need for clear constraints, pre-conditions, and post-conditions remains the same. Structured patterns like the "Recipe" pattern work across most high-parameter models because they reduce ambiguity, which is the primary cause of failure regardless of the model's size.

How do I know if my prompt is truly "optimized"?

The most direct measure of optimization is the test-passing rate. A prompt is considered optimized if it consistently produces code that passes its associated unit tests. A practical way to check this is to run the same prompt ten times; if the output fails more than once, you likely have an ambiguity in your specifications that needs to be tightened.
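The ten-run check can be scripted in a few lines. In the sketch below, `generate` stands for whatever function calls your model and `passes_tests` for whatever runs the unit tests against the generated code; both are hypothetical placeholders, and `fake_model` is a stand-in that replays canned outputs so the example runs without any API.

```python
import itertools
from typing import Callable, Iterable


def count_failures(
    generate: Callable[[str], str],
    passes_tests: Callable[[str], bool],
    prompt: str,
    runs: int = 10,
) -> int:
    """Send the same prompt `runs` times and count outputs that fail the tests."""
    return sum(1 for _ in range(runs) if not passes_tests(generate(prompt)))


def needs_tightening(failures: int, allowed: int = 1) -> bool:
    """Rule of thumb from the text: more than one failure suggests ambiguity."""
    return failures > allowed


def fake_model(outputs: Iterable[str]) -> Callable[[str], str]:
    """Stand-in for a real model call: replays canned outputs in order."""
    replay = itertools.cycle(outputs)
    return lambda prompt: next(replay)


# Nine good outputs and one bad one: within the allowed single failure.
generate = fake_model(["ok"] * 9 + ["broken"])
failures = count_failures(generate, lambda code: code == "ok", "Write a clamp function")
print(failures, needs_tightening(failures))
```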

Can I use prompt patterns to automate the creation of documentation?

Yes. The "Explanation and Analysis" prompt pattern is specifically designed for this. By asking the AI to analyze the complexity and purpose of a block of code and then output it in a specific format (like JSDoc or Doxygen), you can generate documentation that is closely tied to the actual implementation.

Why is providing a concrete example better than a detailed description?

LLMs are pattern-completion engines. A detailed description tells the model what to do, but an example shows the model how to do it. Providing one or two examples of the expected input/output mapping (few-shot prompting) significantly reduces the chance that the model will misinterpret your terminology or formatting requirements.

Is it possible to over-prompt and confuse the model?

Yes, if you provide contradictory instructions or too many irrelevant constraints. The key is to be specific, not verbose. Focus on constraints that directly impact the output's correctness. If you provide 20 constraints but only 3 are relevant to the logic, the model may prioritize the wrong ones, leading to suboptimal code.

Next Steps for Your Workflow

If you're just starting to implement these patterns, don't try to overhaul every prompt at once. Start with your most fragile unit tests. Try replacing a vague request with a Recipe Pattern prompt and see if the first-pass success rate improves. Once you've mastered tests, move to refactoring by applying the Context and Instruction approach to a small utility class.

For those managing teams, create a shared "Prompt Library" where developers can store optimized prompts for common tasks. This prevents every team member from having to reinvent the wheel and ensures a consistent level of code quality across the project.
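A shared library does not need special tooling to start with. As a minimal sketch, the snippet below keeps named templates in a dictionary and fills them with the standard library's `string.Template`; the template names, placeholder fields, and the `render_prompt` helper are assumptions made for illustration.

```python
from string import Template

# Hypothetical shared library: one named template per common task.
PROMPT_LIBRARY = {
    "unit_test_recipe": Template(
        "Write unit tests for $target.\n"
        "Pre-conditions: $preconditions\n"
        "Post-conditions: $postconditions\n"
        "Edge cases: $edge_cases"
    ),
    "safe_refactor": Template(
        "Refactor $target to $goal. Do not change the external API "
        "signature or the observable behavior of the function."
    ),
}


def render_prompt(name: str, **fields: str) -> str:
    """Fill a library template; a missing field raises KeyError instead of passing silently."""
    return PROMPT_LIBRARY[name].substitute(**fields)


print(render_prompt("safe_refactor", target="parse_order", goal="reduce cyclomatic complexity"))
```

Because `substitute` fails on a missing field, a half-filled prompt cannot reach the model unnoticed.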